I built a ChatGPT plugin to answer questions about data hosted in Datasette

Plus: Don't trust AI to talk accurately about itself

In this newsletter:

I built a ChatGPT plugin to answer questions about data hosted in Datasette

Don't trust AI to talk accurately about itself: Bard wasn't trained on Gmail

Weeknotes: AI won't slow down, a new newsletter and a huge Datasette refactor

Plus 12 links and 4 quotations

I built a ChatGPT plugin to answer questions about data hosted in Datasette - 2023-03-24

Yesterday OpenAI announced support for ChatGPT plugins. It's now possible to teach ChatGPT how to make calls out to external APIs and use the responses to help generate further answers in the current conversation.

My open source project Datasette makes it easy to host data online, and already provides a JSON API for executing SQLite queries.

I know from past experiments that GPT3/4 are able to construct SQLite-compatible SQL queries. I figured that Datasette and ChatGPT would be a perfect combination.

Datasette itself has a plugin system which can be used to add new features, including the new URL routes needed by the ChatGPT plugin specification.

So I built datasette-chatgpt-plugin - a Datasette plugin that turns a Datasette instance into a ChatGPT plugin, and allows you to ask questions of your own data directly from ChatGPT.

datasette-chatgpt-plugin

The new plugin can be installed using datasette install datasette-chatgpt-plugin. It's only useful if it's deployed online though, since ChatGPT needs to be able to access it.

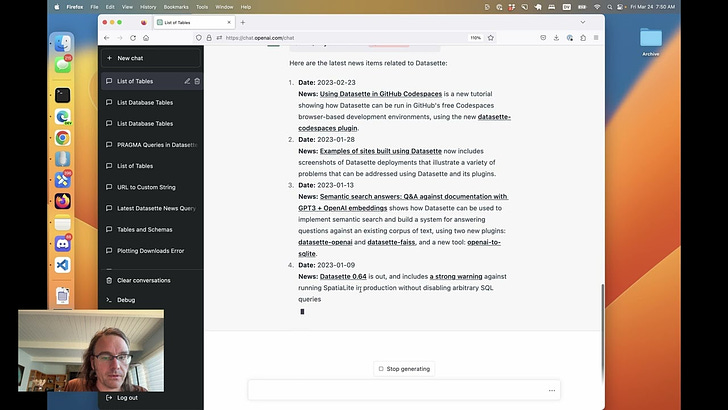

I'm running it on the official datasette.io website - which is itself a customized instance of Datasette. The plugin provides access to the datasette.io/content database, which has tables for things like Datasette news, releases and available plugins.

Here's a video demo showing some of the things ChatGPT can do with the plugin installed.

I've only just got this working, but here's a query that illustrates how effective it can be. I asked:

What are the most popular plugins?

It gave me back the following:

Clicking the little arrow next to "Used query datasette.io" reveals the API call it made, exposing the SQL query it generated:

The API call it made was:

{
  "sql": "SELECT name, full_name, stargazers_count FROM plugins ORDER BY stargazers_count DESC LIMIT 5",
  "_shape": "array"
}

You can try that query in Datasette here - or here's the JSON version.

Here's the JSON that was returned:

[
  {
    "name": "datasette-dashboards",
    "full_name": "rclement/datasette-dashboards",
    "stargazers_count": 84
  },
  {
    "name": "dogsheep-beta",
    "full_name": "dogsheep/dogsheep-beta",
    "stargazers_count": 78
  },
  {
    "name": "datasette-graphql",
    "full_name": "simonw/datasette-graphql",
    "stargazers_count": 75
  },
  {
    "name": "datasette-ripgrep",
    "full_name": "simonw/datasette-ripgrep",
    "stargazers_count": 63
  },
  {
    "name": "datasette-scraper",
    "full_name": "cldellow/datasette-scraper",
    "stargazers_count": 44
  }
]

ChatGPT turns the JSON into a nice human-readable reply. It also knows how to generate thumbnails from og:image metadata, adding a gallery of those to the end of the reply.
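The API call shown above is an ordinary GET request, so it can be reproduced outside ChatGPT. Here's a minimal sketch of how that URL could be assembled in Python. The build_query_url helper is a hypothetical name of mine, and the sketch assumes the content database is served at https://datasette.io/content.json.

```python
from urllib.parse import urlencode

# Assumed endpoint: the JSON view of the datasette.io "content" database.
BASE_URL = "https://datasette.io/content.json"


def build_query_url(sql: str, base_url: str = BASE_URL) -> str:
    """Return a GET URL that runs `sql` and asks for an array of objects."""
    params = {"sql": sql, "_shape": "array"}
    return f"{base_url}?{urlencode(params)}"


url = build_query_url(
    "SELECT name, full_name, stargazers_count FROM plugins "
    "ORDER BY stargazers_count DESC LIMIT 5"
)
print(url)
```

Fetching that URL with any HTTP client should return a JSON array like the one above, though the star counts will have changed since this was written.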

How the plugin works

Building ChatGPT plugins, like so much involving Large Language Models, is both really easy and deceptively complicated.

You give ChatGPT a short, human-ish language description of your plugin and how to use it, and a machine-readable OpenAPI schema with the details of the API.

And that's it! The language model figures out everything else.

Datasette exposes a JSON API that speaks SQL. ChatGPT knows SQL already, so all my prompt needed to do was give it some hints - in particular tell it to use the SQLite dialect.

Here's the prompt I'm using at the moment:

Run SQLite queries against a database hosted by Datasette. Datasette supports most SQLite syntax but does not support PRAGMA statements.

Use `select group_concat(sql, ';') from sqlite_master` to see the list of tables and their columns

Use `select sql from sqlite_master where name = 'table_name'` to see the schema for a table, including its columns.

Instead of `PRAGMA table_info(table_name)` use `select * from pragma_table_info('table_name')`

PRAGMA statements are not allowed. `select * from pragma_table_info('table_name')` is allowed.

In my early experiments it kept trying to run PRAGMA table_info(), hence my increasingly frustrated prompts about that!

With hindsight, I don't think it was re-fetching my prompt while I was developing the plugin, so those repeated warnings probably aren't needed.
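To see why the prompt steers the model towards sqlite_master and pragma_table_info(), here's a small sketch using Python's built-in sqlite3 module against an in-memory database. The plugins table and its two columns are made up for illustration; the three queries are the ones named in the prompt, and all of them are plain SELECT statements.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical table, just so there is a schema to inspect.
conn.execute("create table plugins (name text, stargazers_count integer)")

# Every schema statement in the database, joined into a single string.
all_sql = conn.execute(
    "select group_concat(sql, ';') from sqlite_master"
).fetchone()[0]

# The CREATE TABLE statement for one named table.
table_sql = conn.execute(
    "select sql from sqlite_master where name = ?", ("plugins",)
).fetchone()[0]

# The table-valued function form of PRAGMA table_info().
# Each row is (cid, name, type, notnull, dflt_value, pk).
columns = [
    row[1] for row in conn.execute("select * from pragma_table_info('plugins')")
]

print(table_sql)
print(columns)
```

Because pragma_table_info() is called from a SELECT, it fits an API that only accepts queries, which is presumably why it works where the PRAGMA statement form is rejected.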

Your application needs to serve two additional pages - a plugin description at /.well-known/ai-plugin.json and an OpenAPI schema linked to by that description.

You can see those two pages for my datasette.io deployment here:

The ai-plugin.json file currently looks like this:

{
  "schema_version": "v1",
  "name_for_model": "datasette_datasette_io_3c330f",
  "name_for_human": "Query datasette.io",
  "description_for_model": "Run SQLite queries against a database hosted by Datasette.\nDatasette supports most SQLite syntax but does not support PRAGMA statements.\nUse `select group_concat(sql, ';') from sqlite_master` to see the list of tables and their columns\nUse `select sql from sqlite_master where name = 'table_name'` to see the schema for a table, including its columns.\nInstead of `PRAGMA table_info(table_name)` use `select * from pragma_table_info('table_name')`\nPRAGMA statements are not allowed. `select * from pragma_table_info('table_name') is allowed.",
  "description_for_human": "Run SQL against data in Datasette.",
  "auth": {
    "type": "none"
  },
  "api": {
    "type": "openapi",
    "url": "https://datasette.io/-/chatgpt-openapi-schema.yml",
    "has_user_authentication": false
  },
  "logo_url": "https://avatars.githubusercontent.com/u/126964132?s=400&u=08b2ed680144a4feb421308f09e5f3cc5876211a&v=4",
  "contact_email": "hello@contact.com",
  "legal_info_url": "hello@legal.com"
}

And the chatgpt-openapi-schema.yml file contains this:

openapi: 3.0.1
info:
  title: Datasette API
  description: Execute SQL queries against a Datasette database and return the results as JSON
  version: 'v1'
servers:
  - url: https://datasette.io
paths:
  /content.json:
    get:
      operationId: query
      summary: Execute a SQLite SQL query against the content database
      description: Accepts SQLite SQL query, returns JSON. Does not allow PRAGMA statements.
      parameters:
        - name: sql
          in: query
          description: The SQL query to be executed
          required: true
          schema:
            type: string
        - name: _shape
          in: query
          description: The shape of the response data. Must be "array"
          required: true
          schema:
            type: string
            enum:
              - array
      responses:
        '200':
          description: Successful SQL results
          content:
            application/json:
              schema:
                type: array
                items:
                  type: object
        '400':
          description: Bad request
        '500':
          description: Internal server error

I haven't actually used OpenAPI schemas before... so I got ChatGPT to write the initial version for me, using the following prompt:

Write an OpenAPI schema explaining the https://latest.datasette.io/fixtures.json?sql=select+*+from+facetable&_shape=array GET API which accepts SQL and returns an array of JSON objects

For a detailed account of how I built the plugin, take a look at my notes in issue #1 in the repository.
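As a rough illustration of how little is needed for the ai-plugin.json document described above, here's a sketch that builds one from a base URL. This is not the plugin's actual code: build_manifest and the example names are hypothetical, the field names are copied from the file shown earlier, and the logo, contact and legal fields are left out for brevity.

```python
import json


def build_manifest(base_url: str, description_for_model: str) -> dict:
    """Build a minimal ai-plugin.json structure for a Datasette instance."""
    base_url = base_url.rstrip("/")
    return {
        "schema_version": "v1",
        "name_for_model": "datasette_example",  # hypothetical name
        "name_for_human": "Query example Datasette",  # hypothetical name
        "description_for_model": description_for_model,
        "description_for_human": "Run SQL against data in Datasette.",
        "auth": {"type": "none"},
        "api": {
            "type": "openapi",
            # Points at the OpenAPI schema served by the same instance.
            "url": f"{base_url}/-/chatgpt-openapi-schema.yml",
            "has_user_authentication": False,
        },
    }


manifest = build_manifest(
    "https://example.com/",
    "Run SQLite queries against a database hosted by Datasette.",
)
print(json.dumps(manifest, indent=2))
```

The description_for_model string is where the prompt lives, so it's the part worth iterating on.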

I prototyped the initial plugin using Glitch, because that's the fastest way I know to get a live-on-the-web application which constantly reflects new changes to the code. This made iterating much faster... on the OpenAPI schema at least. As far as I can tell ChatGPT only loads that ai-plugin.json file once, which is frustrating because it means you have to deploy a new copy of the application to get it to re-read that crucial prompt.

I ended up doing most of my prompt engineering in ChatGPT itself though - I could tell it "Instead of PRAGMA table_info(table_name) use select * from pragma_table_info('table_name')" and then re-try my previous question to see if the new instruction fixed any problems I was having.

The bad news: it can hallucinate

Here's the bad news. I've been playing with this for only a short time, so I'm still exploring its abilities. I've already had a couple of instances of it hallucinating answers despite having looked them up in the database first.

I'm hoping I can address this somewhat with further prompt engineering - "only use information returned from the query to answer the question" kind of stuff. But I can't guarantee I'll be able to suppress this entirely, which for a database querying tool is an extremely serious problem.

More about this, including some examples, in issue #2 in the repo.

Want to try this with your own data?

The ChatGPT plugin system isn't available outside of the preview yet, but when it is I'll be adding this functionality to my Datasette Cloud SaaS platform, for people who don't want to install and run Datasette themselves.

You can sign up for the Datasette Cloud preview here if you'd like to learn more.

Previous experiments

I've experimented with variants of this pattern myself before: it turns out it's surprisingly easy to enhance the capabilities of a large language model by providing it access to additional tools. Here's some previous work:

How to implement Q&A against your documentation with GPT3, embeddings and Datasette describes a pattern of searching an existing corpus for relevant information and appending that to the prompt in order to answer a user's question.

A simple Python implementation of the ReAct pattern for LLMs shows a more advanced pattern, similar to the new ChatGPT plugins mechanism, where multiple tools can be registered with the model and used to generate responses. It's based on the paper ReAct: Synergizing Reasoning and Acting in Language Models.

Quote 2023-03-21

Was on a plane yesterday, studying some physics; got confused about something and I was able to solve my problem by just asking alpaca-13B—running locally on my machine—for an explanation. Felt straight-up spooky.

Link 2023-03-21 OpenAI to discontinue support for the Codex API: OpenAI is shutting off access to their Codex model - a GPT3 variant fine-tuned for code related tasks, but that was being used for all sorts of other purposes - partly because it had been in a beta phase for over a year where OpenAI didn't charge anything for it. This feels to me like a major strategic misstep for OpenAI: they're only giving three days' notice, which is shaking people's confidence in them as a stable platform for building on at the very moment when competition from other vendors (and open source alternatives) is heating up.

Link 2023-03-21 Adobe made an AI image generator — and says it didn’t steal artists’ work to do it: Adobe Firefly is a brand new text-to-image model which Adobe claim was trained entirely on fully licensed imagery - either out of copyright, specially licensed or part of the existing Adobe Stock library. I'm sure they have the license, but I still wouldn't be surprised to hear complaints from artists who licensed their content to Adobe Stock who didn't anticipate it being used for model training.

Link 2023-03-21 Bing Image Creator comes to the new Bing: Bing Chat is integrating DALL-E directly into their interface, giving it the ability to generate images when prompted to do so.

Link 2023-03-21 Prompt Engineering: Extremely detailed introduction to the field of prompt engineering by Lilian Weng, who leads applied research at OpenAI.

Link 2023-03-21 Google Bard is now live: Google Bard launched today. There's a waiting list, but I made it through within a few hours of signing up, as did other people I've talked to. It's similar to ChatGPT and Bing - it's the same chat interface, and it can clearly run searches under the hood (though unlike Bing it doesn't tell you what it's looking for).

Quote 2023-03-21

Here are some absurdly expensive things you can do on a trip to Tokyo: Buy a golden toilet. There is a toilet in Tokyo that is made of gold and costs around 10 million yen. If you are looking for a truly absurd experience, you can buy this toilet and use it for your next bowel movement. [...]

Link 2023-03-21 The Age of AI has begun: Bill Gates calls GPT-class large language models "the most important advance in technology since the graphical user interface". His essay here focuses on the philanthropy angle, mostly from the point of view of AI applications in healthcare, education and concerns about keeping access to these new technologies as equitable as possible.

Quote 2023-03-22

GPT-4, like GPT-3 before it, has a capability overhang; at the time of release, neither OpenAI or its various deployment partners have a clue as to the true extent of GPT-4's capability surface - that's something that we'll get to collectively discover in the coming years. This also means we don't know the full extent of plausible misuses or harms.

Don't trust AI to talk accurately about itself: Bard wasn't trained on Gmail - 2023-03-22

Earlier this month I wrote about how ChatGPT can’t access the internet, even though it really looks like it can. Consider this part two in the series. Here's another common and non-intuitive mistake people make when interacting with large language model AI systems: asking them questions about themselves.

(When I say "large language model AI systems" I mean tools like ChatGPT, Bing and the brand new Google Bard.)

Asking questions like this feels like a natural thing to do: these bots use "I" pronouns (I really wish they wouldn't) and will very happily answer questions about themselves - what they can do, how they work, even their own opinions (I really wish they wouldn't do that).

These questions are likely to produce realistic but misleading answers. They come at a surprisingly high risk for hallucination.

What harm could possibly come from asking them how they work?

No, Bard was not trained on text from private Gmail accounts

The following screenshot started circulating on Twitter earlier today. I'm not linking to it directly because I don't want to encourage it to spread further without extra context.

The user asked:

where does Bard's dataset come from?

Bard replied like this - emphasis mine:

Bard's dataset comes from a variety of sources, including:

Publicly available datasets: These include datasets of text and code from the web, such as Wikipedia, GitHub, and Stack Overflow.

Google's internal data: This includes data from Google Search, Gmail, and other products.

Data from third-party companies: This includes data from companies that have partnered with Google to provide data for Bard's training.

The specific sources of Bard's dataset are not publicly available, but Google AI has said that the dataset is "as comprehensive as possible".

Unsurprisingly, the idea that Bard might have been trained on internal data from Gmail sparked a lot of concern!

Here's an official denial:

Bard is an early experiment based on Large Language Models and will make mistakes. It is not trained on Gmail data. -JQ

- Google Workspace (@GoogleWorkspace) March 21, 2023

(I have some supporting arguments below for if the official denial isn't convincing enough for you.)

Bard was not trained on Gmail. So why on earth did Bard say that it was?

Language models have no concept of "self"

As always with language models, the trick to understanding why they sometimes produce wildly inappropriate output like this is to think about how they work.

A large language model is a statistical next-word / next-sentence predictor. Given the previous sequence of words (including the user's prompt), it uses patterns from the vast amount of data it has been trained on to find a statistically satisfying way to continue that text.

As such, there's no mechanism inside a language model to help it identify that questions of the form "how do you work?" should be treated any differently than any other question.
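A toy example may help make this concrete. The sketch below is a tiny bigram predictor: it counts which word follows which in a made-up corpus and always picks the most frequent follower. Real language models are vastly more sophisticated, so treat this purely as an illustration of "continue the text in a statistically likely way". The corpus and function names are invented for the example.

```python
from collections import Counter, defaultdict

# Made-up training text, split into tokens.
corpus = (
    "the model predicts the next word . "
    "the model completes the sentence . "
    "the model predicts text"
).split()

# Count how often each word follows each other word.
follows = defaultdict(Counter)
for prev, nxt in zip(corpus, corpus[1:]):
    follows[prev][nxt] += 1


def predict_next(word):
    """Return the most frequent follower of `word`, or None if unseen."""
    candidates = follows.get(word)
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


print(predict_next("the"))
print(predict_next("model"))
```

Nothing in predict_next() knows or cares what the words are about. A question about the model itself goes through exactly the same counting as any other input.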

We can give it hints: many chatbot models are pre-seeded with a short prompt that says something along the lines of "You are Assistant, a large language model trained by OpenAI" (seen via a prompt leak).

And given those hints, it can at least start a conversation about itself when encouraged to do so.

But as with everything else language model, it's an illusion. It's not talking about itself, it's completing a sentence that starts with "I am a large language model trained by ...".

So when it outputs "Google's internal data:", the obvious next words might turn out to be "This includes data from Google Search, Gmail, and other products" - they're statistically likely to follow, even though they don't represent the actual truth.

This is one of the most unintuitive things about these models. The obvious question here is why: why would Bard lie and say it had been trained on Gmail when it hadn't?

It has no motivations to lie or tell the truth. It's just trying to complete a sentence in a satisfactory way.

What does "satisfactory" mean? It's likely been guided by RLHF - Reinforcement Learning from Human Feedback - which the ChatGPT development process has excelled at. Human annotators help train the model by labelling responses as satisfactory or not. Google apparently recruited the entire company to help with this back in February.

I'm beginning to suspect that the perceived difference in quality between different language model AIs is influenced much more heavily by this fine-tuning level of training than it is by the underlying model size and quality itself. The enormous improvements the Alpaca fine-tuning brought to the tiny LLaMA 7B model have reinforced my thinking around this.

I think Bard's fine-tuning still has a long way to go.

Current information about itself couldn't have been in the training data

By definition, the model's training data must have existed before the model itself was trained. Most models have a documented cut-off date on their training data - for OpenAI's models that's currently September 2021. I don't believe Google have shared the cut-off date for the LaMDA model used by Bard.

If it was trained on content written prior to its creation, it clearly can't understand details about its own specific "self".

ChatGPT can answer pretty detailed questions about GPT-3, because that model had been iterated on and written about publicly for several years prior to its training cut-off. But questions about its most recent model, by definition, cannot be answered just using data that existed in its training set.

But Bard can consult data beyond its training!

Here's where things get a bit tricky.

ChatGPT is a "pure" interface to a model: when you interact with it, you're interacting with the underlying language model directly.

Google Bard and Microsoft Bing are different: they both include the ability to consult additional sources of information, in the form of the Google and Bing search indexes.

Effectively, they're allowed to augment their training data with additional information fetched from a search.

This sounds more complex than it actually is: effectively they can run an external search, get back some results, paste them invisibly into the ongoing conversation and use that new text to help answer questions.

(I've built a very simple version of this pattern myself a couple of times, described in How to implement Q&A against your documentation with GPT3, embeddings and Datasette and A simple Python implementation of the ReAct pattern for LLMs.)
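Here's a minimal sketch of that "search, then paste the results into the conversation" step. It is not how Bard or Bing are implemented, which isn't public. The build_augmented_prompt function, the prompt wording and the fake_search stand-in are all assumptions for illustration; a real system would call an actual search index.

```python
from typing import Callable, List


def build_augmented_prompt(
    question: str,
    search: Callable[[str], List[str]],
    max_results: int = 3,
) -> str:
    """Run a search and paste the top results into the prompt text."""
    results = search(question)[:max_results]
    context = "\n".join(f"- {result}" for result in results)
    return (
        "Answer the question using only the search results below.\n"
        f"Search results:\n{context}\n"
        f"Question: {question}\n"
        "Answer:"
    )


def fake_search(query: str) -> List[str]:
    """Stand-in for a real search engine: returns canned text."""
    return [
        "LaMDA was pre-trained on public dialog data and other public web documents."
    ]


prompt = build_augmented_prompt("Where does Bard's dataset come from?", fake_search)
print(prompt)
```

The resulting string is what would be handed to the language model. The user only sees the question they typed and the final answer.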

As such, one would hope that Bard could offer a perfect answer to any question about itself. It should be able to do something like this:

User: Where does Bard's dataset come from?

Bard: (invisible): search Google for "Bard dataset"

Bard: (invisible): search results said: ... big chunk of text from the Google indexed documents ...

Bard: My underlying model LaMDA was trained on public dialog data and other public web documents.

Clearly it didn't do that in this case! Or if it did, it summarized the information it got back in a misleading way.

I expect Bard will have a much better answer for this question within a day or two - a great thing about running models with augmented data in this way is that you can improve their answers without having to train the underlying model again from scratch every time.

More reasons that LaMDA wouldn't be trained on Gmail

When I first saw the claim from that original screenshot, I was instantly suspicious.

Taking good care of the training data that goes into a language model is one of the most important and challenging tasks in all of modern AI research.

Using the right mix of content, with the right mix of perspectives, and languages, and exposure to vocabulary, is absolutely key.

If you train a model on bad sources of training data, you'll get a really badly behaved model.

The problem is that these models require far more text than any team of humans could ever manually review.

The LaMDA paper describes the training process like so:

LaMDA was pre-trained to predict the next token in a text corpus. Unlike previous dialog models trained on dialog data alone, we pre-trained LaMDA on a dataset created from public dialog data and other public web documents. Therefore, LaMDA can be used as a general language model prior to fine-tuning.

The pre-training dataset consists of 2.97B documents, 1.12B dialogs, and 13.39B dialog utterances, for a total of 1.56T words

1.56 trillion words!

Appendix E has more details:

The composition of the data is as follows: 50% dialogs data from public forums; 12.5% C4 data t5; 12.5% code documents from sites related to programming like Q&A sites, tutorials, etc; 12.5% Wikipedia (English); 6.25% English web documents; and 6.25% Non-English web documents.
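To get a feel for the scale, here's a back-of-the-envelope calculation combining those percentages with the 1.56T word total. It assumes the percentages apply to word counts, which the quoted passage doesn't actually state, so the per-source figures are rough estimates at best.

```python
TOTAL_WORDS = 1.56e12  # 1.56T words, from the LaMDA paper quote above

# Percentages as quoted from Appendix E.
composition = {
    "dialogs data from public forums": 50.0,
    "C4 data": 12.5,
    "code documents": 12.5,
    "Wikipedia (English)": 12.5,
    "English web documents": 6.25,
    "Non-English web documents": 6.25,
}

# Sanity check: the quoted shares add up to the whole dataset.
total_percent = sum(composition.values())

# ASSUMPTION: shares are by word count (not stated in the quote).
estimated_words = {
    source: TOTAL_WORDS * percent / 100
    for source, percent in composition.items()
}

print(f"Total: {total_percent}%")
for source, words in estimated_words.items():
    print(f"{source}: roughly {words / 1e9:.1f}B words")
```

Under that assumption, even the smallest 6.25% slices would be far more text than a team of humans could review by hand.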

"C4 data t5" I believe relates to Common Crawl.

So why not mix in Gmail too?

First, in order to analyze the training data you need to be able to have your research team view it - they need to run spot checks, and build and test filtering algorithms to keep the really vile stuff to a minimum.

At large tech companies like Google, the ability for members of staff to view private data held in trust for their users is very tightly controlled. It's not the kind of thing you want your machine learning training team to be poking around in... and if you work on those teams, even having the ability to access that kind of private data represents a substantial personal legal and moral risk.

Secondly, think about what could go wrong. What if a language model leaked details of someone's private life in response to a prompt from some other user?

This would be a PR catastrophe. Would people continue to trust Gmail or other Google products if they thought their personal secrets were being exposed to anyone who asked Bard a question? Would Google ever want to risk finding out the answer to that question?

The temptations of conspiratorial thinking

Are you still not convinced? Are you still suspicious that Google trained Bard on Gmail, despite both their denials and my logic as to why they wouldn't ever want to do this?

Ask yourself how much you want to believe that this story is true.

This modern AI stuff is deeply weird, and more than a little frightening.

The companies involved are huge, secretive and are working on technology which serious people have grave concerns about.

It's so easy to fall into the trap of conspiratorial thinking around this stuff. Especially since some of the conspiracies might turn out to be true!

I don't know how to best counter this most human of reactions. My best recommendation is to keep in mind that humans, like language models, are pattern matching machines: we jump to conclusions, especially if they might reinforce our previous opinions and biases.

If we're going to figure this stuff out together, we have to learn when to trust our initial instincts and when to read deeper and think harder about what's going on.

Link 2023-03-22 Datasette: Gather feedback on new ?_extra= design: I just landed the single biggest backwards-incompatible change to Datasette ever, in preparation for the 1.0 release. It's a change to the default JSON format from the Datasette API - the new format is much slimmer, and can be expanded using a new ?_extra= query string parameter. I'm desperately keen on getting feedback on this change! This issue has more details and a call for feedback.

Weeknotes: AI won't slow down, a new newsletter and a huge Datasette refactor - 2023-03-22

I'm a few weeks behind on my weeknotes, but it's not through lack of attention to my blog. AI just keeps getting weirder and more interesting.

I'm beginning to expect that every Tuesday may be a write-off for the next few years, since the AI community seems to have decided that Tuesday is the day to launch everything.

Two Tuesdays ago we got a Google announcement, Anthropic's Claude and GPT-4. On Tuesday this week we got Google Bard, Bing Image Creator and Adobe Firefly.

I've written about a bunch of that stuff this month:

10th: ChatGPT can't access the internet, even though it really looks like it can

11th: Large language models are having their Stable Diffusion moment

13th: Stanford Alpaca, and the acceleration of on-device large language model development

17th: Could you train a ChatGPT-beating model for $85,000 and run it in a browser?

18th: A conversation about prompt engineering with CBC Day 6

22nd: Don't trust AI to talk accurately about itself: Bard wasn't trained on Gmail

Apparently this blog is now partly focused on AI! If you want to stay up-to-date with my writing on this (and other) subjects you can subscribe to my atom feed, or you can sign up for my brand new Substack newsletter.

My blog as a newsletter

I know there are a lot of people out there who don't habitually use a feed reader but do find great value from email newsletters.

simonw.substack.com is my new newsletter, which is effectively a way to subscribe to my blog via email.

I started it a few months ago when it looked like Twitter was about to collapse under the weight of its new mismanagement. I first promoted it at the bottom of my Large language models are having their Stable Diffusion moment post, and it's since grown to 640 subscribers!

I plan to send it out around once a week, provided there's material to send.

It will be mostly content from my blog, with maybe a paragraph or two of additional context added at the top highlighting themes of the past week (such as GPT-4).

The first two editions can be found here:

March 13th: The Stable Diffusion moment for Large Language Models

March 19th: Could you train a ChatGPT-beating model for $85,000 and run it in a browser?

A fun detail about my newsletter is how I'm generating it.

Substack doesn't have an API, but I wanted to automate as much of the process of copying in data from my blog as possible.

I built myself an automation around copy and paste!

observablehq.com/@simonw/blog-to-newsletter is an Observable notebook I wrote which assembles most of the newsletter for me.

It works by running a big SQL query against my datasette.simonwillison.net Datasette instance, which runs against a SQLite copy of my blog content (a PostgreSQL/Django app) built by a GitHub Action in this repository.

The SQL query assembles a string of HTML which is rendered in the notebook. There's also a "Copy to clipboard" button which uses this JavaScript pattern to copy a rich text representation of the HTML to the clipboard.

When I hit "paste" in the Substack editor interface it converts that representation into Substack's chosen subset of HTML. Then I can edit it by hand in the Substack editor.

This is working really well so far - it's really easy to tweak the generated HTML in the Observable notebook, and once I've transferred it to Substack I can re-arrange things and add my own extra commentary to the top of the newsletter before hitting send.
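For anyone curious what "a SQL query that assembles a string of HTML" can look like, here's a much-simplified sketch using Python's sqlite3 module. The entries table, its columns and the markup are invented for the example and are not the real blog schema or the real newsletter query.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical stand-in for the blog content tables.
conn.execute("create table entries (title text, url text, created text)")
conn.executemany(
    "insert into entries values (?, ?, ?)",
    [
        ("First post", "https://example.com/1", "2023-03-13"),
        ("Second post", "https://example.com/2", "2023-03-19"),
    ],
)

# Build one HTML fragment per row with ||, then join them with newlines.
html = conn.execute(
    """
    select group_concat(
        '<h3><a href="' || url || '">' || title || '</a> - ' || created || '</h3>',
        char(10)
    )
    from (select * from entries order by created)
    """
).fetchone()[0]

print(html)
```

This sketch does no HTML escaping, which is fine for content you wrote yourself but would need care with untrusted data.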

Datasette's new JSON API

I finally landed a GIANT branch I've been working on for several months now: a complete redesign of Datasette's default JSON format, one of the largest changes I need to land prior to releasing Datasette 1.0.

The previous default JSON format was a bit of a mess: it had dozens of keys, and presented the row data as an array of arrays (on the basis that the column names were available in a separate key, and rows as arrays would be more efficient in terms of bytes on the wire).

I always found myself adding ?_shape=array to that URL to get a smaller format, which strongly indicated that the default I had picked was the wrong one.
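The difference between the two shapes is easy to show in a few lines of Python. In this sketch the columns and rows variables stand in for the separate column-name key and the array-of-arrays row data described above; the sample values are made up.

```python
def rows_to_objects(columns, rows):
    """Turn array-of-arrays rows into a list of {column: value} objects."""
    return [dict(zip(columns, row)) for row in rows]


# Old style: column names once, then each row as a bare array.
columns = ["pk1", "pk2", "content"]
rows = [["a", "a", "a-a"], ["a", "b", "a-b"]]

# New style: every row is a self-describing object.
objects = rows_to_objects(columns, rows)
print(objects)
```

The array-of-arrays form saves bytes by not repeating column names, but the object form is what most API clients actually want to work with.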

The new format can now be previewed here - it looks like this (truncated):

{
  "ok": true,
  "next": "d,v",
  "rows": [
    {
      "pk1": "a",
      "pk2": "a",
      "content": "a-a"
    },
    {
      "pk1": "a",
      "pk2": "b",
      "content": "a-b"
    }
  ]
}

The default keys are "ok", "next" to indicate pagination (this is null if there are no extra pages) and "rows" with a list of JSON objects.

If you want extra keys - like a total row count, or a list of columns, or some suggested facets - you can request them using the new ?_extra= parameter - for example:

This returns a response that starts like this:

{
  "ok": true,
  "next": "d,v",
  "count": 201,
  "columns": [
    "pk1",
    "pk2",
    "content",
    "sortable",
    "sortable_with_nulls",
    "sortable_with_nulls_2",
    "text"
  ],
  "suggested_facets": [
    {
      "name": "pk1",
      "toggle_url": "https://latest.datasette.io/fixtures/sortable.json?_extra=columns&_extra=count&_extra=suggested_facets&_facet=pk1"
    },
    {
      "name": "pk2",
      "toggle_url": "https://latest.datasette.io/fixtures/sortable.json?_extra=columns&_extra=count&_extra=suggested_facets&_facet=pk2"
    },
    {
      "name": "text",
      "toggle_url": "https://latest.datasette.io/fixtures/sortable.json?_extra=columns&_extra=count&_extra=suggested_facets&_facet=text"
    }
  ],
  "rows": [

There's still more work to do on this feature: I need to write the documentation for it, and figure out how it should affect the Datasette endpoint that returns results from an arbitrary SQL query. But it's ready to preview, and I'm keen to get feedback on it as quickly as possible!

Please take a look, and provide feedback on this dedicated issue thread - or come and talk about it in the Datasette Discord.
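If you want to experiment with the parameter from a script, here's a small sketch of building a URL with several ?_extra= values. The repeated-parameter style matches the toggle_url values in the response above; build_extra_url is a hypothetical helper name.

```python
from urllib.parse import urlencode


def build_extra_url(base_url, extras):
    """Append one _extra= parameter per requested extra."""
    # doseq=True expands the list into repeated _extra=... pairs.
    query = urlencode({"_extra": list(extras)}, doseq=True)
    return f"{base_url}?{query}"


url = build_extra_url(
    "https://latest.datasette.io/fixtures/sortable.json",
    ["columns", "count", "suggested_facets"],
)
print(url)
```

Since the format is still open for feedback, the set of supported extras may change before 1.0.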

Releases these weeks

datasette-atom: 0.9 - (12 releases total) - 2023-03-14

Datasette plugin that adds a .atom output format

datasette-simple-html: 0.2 - (2 releases total) - 2023-03-12

Datasette SQL functions for very simple HTML operations

apple-notes-to-sqlite: 0.1 - 2023-03-09

Export Apple Notes to SQLite

datasette-render-html: 1.0 - (4 releases total) - 2023-03-09

Plugin for selectively rendering the HTML is specific columns

datasette: 0.64.2 - (125 releases total) - 2023-03-08

An open source multi-tool for exploring and publishing data

TIL these weeks

Running LLaMA 7B and 13B on a 64GB M2 MacBook Pro with llama.cpp

How to read Hacker News threads with most recent comments first

A simple Python implementation of the ReAct pattern for LLMs

Use DuckDB to convert parquet to JSON and then open it in Datasette Lite

Quote 2023-03-23

If you ask Microsoft’s Bing chatbot if Google’s Bard chatbot has been shut down, it says yes, citing as evidence a news article that discusses a tweet in which a user asked Bard when it would be shut down and Bard said it already had, itself citing a comment from Hacker News in which someone joked about this happening, and someone else used ChatGPT to write fake news coverage about the event.

Link 2023-03-23 Teaching News Apps with Codespaces: Derek Willis used GitHub Codespaces for the latest data journalism class he taught, and it eliminated the painful process of trying to get students on an assortment of Mac, Windows and Chromebook laptops all to a point where they could start working and learning together.

Link 2023-03-23 mitsua-diffusion-one: "Mitsua Diffusion One is a latent text-to-image diffusion model, which is a successor of Mitsua Diffusion CC0. This model is trained from scratch using only public domain/CC0 or copyright images with permission for use." I've been talking about how much I'd like to try out a "vegan" AI model trained entirely on out-of-copyright images for ages, and here one is! It looks like the training data mainly came from CC0 art gallery collections such as the Metropolitan Museum of Art Open Access.

Link 2023-03-23 ChatGPT plugins: ChatGPT is getting a plugins mechanism, which will allow developers to provide extra capabilities to ChatGPT, like looking up restaurants on OpenTable or fetching data from APIs. This feels like the kind of feature that could obsolete - or launch - a thousand startups. It also makes ChatGPT much more interesting as a general purpose tool, as opposed to something that only works as an interface to a language model.

Link 2023-03-23 ChatGPT Retrieval Plugin: "The ChatGPT Retrieval Plugin repository provides a flexible solution for semantic search and retrieval of personal or organizational documents using natural language queries." How many existing startups were building this I wonder?

Link 2023-03-23 textra: Tiny (432KB) macOS binary CLI tool by Dylan Freedman which produces high quality text extraction from PDFs, images and even audio files using the VisionKit APIs in macOS 13 and higher. It handles handwriting too!
