Vibe coding and agentic engineering are getting closer than I’d like

Plus updates from Anthropic's Code w/ Claude conference

In this newsletter:

Vibe coding and agentic engineering are getting closer than I’d like

Live blog: Code w/ Claude 2026

Notes on the xAI/Anthropic data center deal

Plus 4 links and 3 quotations and 9 beats


Vibe coding and agentic engineering are getting closer than I’d like - 2026-05-06

I recently talked with Joseph Ruscio about AI coding tools for Heavybit’s High Leverage podcast: Ep. #9, The AI Coding Paradigm Shift with Simon Willison. Here are some of my highlights, including my disturbing realization that vibe coding and agentic engineering have started to converge in my own work.

One thing I really enjoy about podcasts is that they sometimes push me to think out loud in a way that exposes an idea I’ve not previously been able to put into words.

Vibe coding and agentic engineering are starting to overlap

A few weeks after vibe coding was first coined I published Not all AI-assisted programming is vibe coding (but vibe coding rocks), where I firmly staked out my belief that “vibe coding” is a very different beast from responsible use of AI to write code, which I’ve since started to call agentic engineering.

When Joseph brought up the distinction between the two I had a sudden realization that they’re not nearly as distinct for me as they used to be:

Weirdly though, those things have started to blur for me already, which is quite upsetting.

I thought we had a very clear delineation where vibe coding is the thing where you’re not looking at the code at all. You might not even know how to program. You might be a non-programmer who asks for a thing, and gets a thing, and if the thing works, then great! And if it doesn’t, you tell it that it doesn’t work and cross your fingers.

But at no point are you really caring about the code quality or any of those additional constraints. And my take on vibe coding was that it’s fantastic, provided you understand when it can be used and when it can’t.

A personal tool for you, where if there’s a bug it hurts only you, go ahead!

If you’re building software for other people, vibe coding is grossly irresponsible because it’s other people’s information. Other people get hurt by your stupid bugs. You need to have a higher level than that.

This contrasts with agentic engineering where you are a professional software engineer. You understand security and maintainability and operations and performance and so forth. You’re using these tools to the highest of your own ability. I’m finding the scope of challenges I can take on has gone up by a significant amount because I’ve got the support of these tools.

But I’m still leaning on my 25 years of experience as a software engineer.

The goal is to build high quality production systems: if you’re building lower quality stuff faster, I think that’s bad. I want to build higher quality stuff faster. I want everything I’m building to be better in every way than it was before.

The problem is that as the coding agents get more reliable, I’m not reviewing every line of code that they write anymore, even for my production level stuff.

I know full well that if you ask Claude Code to build a JSON API endpoint that runs a SQL query and outputs the results as JSON, it’s just going to do it right. It’s not going to mess that up. You have it add automated tests, you have it add documentation, you know it’s going to be good.

But I’m not reviewing that code. And now I’ve got that feeling of guilt: if I haven’t reviewed the code, is it really responsible for me to use this in production?

The thing that really helps me is thinking back to when I’ve worked at larger organizations where I’ve been an engineering manager. Other teams are building software that my team depends on.

If another team hands over something and says, “hey, this is the image resize service, here’s how to use it to resize your images”... I’m not going to go and read every line of code that they wrote.

I’m going to look at their documentation and I’m going to use it to resize some images. And then I’m going to start shipping my own features. And if I start running into problems where the image resizer thing appears to have bugs or the performance isn’t good, that’s when I might dig into their Git repositories and see what’s going on. But for the most part I treat that as a semi-black box that I don’t look at until I need to.

I’m starting to treat the agents in the same way. And it still feels uncomfortable, because human beings are accountable for what they do. A team can build a reputation. I can say “I trust that team over there. They built good software in the past. They’re not going to build something rubbish because that affects their professional reputations.”

Claude Code does not have a professional reputation! It can’t take accountability for what it’s done. But it’s been proving itself anyway - time and time again it’s churning out straightforward things and doing them right in the style that I like.

There’s an element of the normalization of deviance here - every time a model turns out to have written the right code without me monitoring it closely there’s a risk that I’ll trust it at the wrong moment in the future and get burned.

The new challenge of evaluating software

It used to be if you found a GitHub repository with a hundred commits and a good readme and automated tests and stuff, you could be pretty sure that the person writing that had put a lot of care and attention into that project.

And now I can knock out a git repository with a hundred commits and a beautiful readme and comprehensive tests of every line of code in half an hour! It looks identical to those projects that have had a great deal of care and attention. Maybe it is as good as them. I don’t know. I can’t tell from looking at it. Even for my own projects, I can’t tell.

So I realized what I value more than the quality of the tests and documentation is that I want somebody to have used the thing. If you’ve got a vibe coded thing which you have used every day for the past two weeks, that’s much more valuable to me than something that you’ve just spat out and hardly even exercised.

The bottlenecks have shifted

If you can go from producing 200 lines of code a day to 2,000 lines of code a day, what else breaks? The entire software development lifecycle was, it turns out, designed around the idea that it takes a day to produce a few hundred lines of code. And now it doesn’t.

It’s not just the downstream stuff, it’s the upstream stuff as well. I saw a great talk by Jenny Wen, who’s the design leader at Anthropic, where she said we have all of these design processes that are based around the idea that you need to get the design right - because if you hand it off to the engineers and they spend three months building the wrong thing, that’s catastrophic.

There’s this whole very extensive design process that you put in place because that design results in expensive work. But if it doesn’t take three months to build, maybe the design process can be a whole lot riskier because the cost, if you get something wrong, has been reduced so much.

Why I’m still not afraid for my career

When I look at my conversations with the agents, it’s very clear to me that this is moon language for the vast majority of human beings.

There are a whole bunch of reasons I’m not scared that my career as a software engineer is over now that computers can write their own code, partly because these things are amplifiers of existing experience. If you know what you’re doing, you can run so much faster with them. [...]

I’m constantly reminded as I work with these tools how hard the thing that we do is. Producing software is a ferociously difficult thing to do. And you could give me all of the AI tools in the world and what we’re trying to achieve here is still really difficult. [...]

Matthew Yglesias, who’s a political commentator, yesterday tweeted, “Five months in, I think I’ve decided that I don’t want to vibecode — I want professionally managed software companies to use AI coding assistance to make more/better/cheaper software products that they sell to me for money.” And that feels about right to me. I can plumb my house if I watch enough YouTube videos on plumbing. I would rather hire a plumber.

On the threat to SaaS providers of companies rolling their own solutions instead:

I just realized it’s the thing I said earlier about how I only want to use your side project if you’ve used it for a few weeks. The enterprise version of that is I don’t want a CRM unless at least two other giant enterprises have successfully used that CRM for six months. [...] You want solutions that are proven to work before you take a risk on them.

Live blog: Code w/ Claude 2026 - 2026-05-06

I’m at Anthropic’s Code w/ Claude event today. Here’s my live blog of the morning keynote sessions.

Notes on the xAI/Anthropic data center deal - 2026-05-07

There weren’t a lot of big new announcements from Anthropic at yesterday’s Code w/ Claude event, but the biggest by far was the deal they’ve struck with SpaceX/xAI to use “all of the capacity of their Colossus data center”.

As I mentioned in my live blog of the keynote, that’s the one with the particularly bad environmental record. The gas turbines installed to power the facility initially ran without Clean Air Act permits or pollution control devices, which they got away with by classifying them as “temporary”. Credible reports link it to increases in hospital admissions relating to low air quality.

Andy Masley, one of the most prolific voices pushing back against misleading rhetoric about data centers (see The AI water issue is fake and Data center land issues are fake), had this to say about Colossus:

I would simply not run my computing out of this specific data center

I get that Anthropic are severely compute-constrained, but in a world where the very existence of “AI data centers” is a red-hot political issue (see recent news out of Utah for a fresh example), signing up with this particular data center is a really bad look.

There was a lot of initial chatter about how this meant xAI were clearly giving up on their own Grok models, since all of their capacity would be sold to Anthropic instead. That was a misconception - Anthropic are getting Colossus 1, but xAI are keeping their larger Colossus 2 data center for their own work.

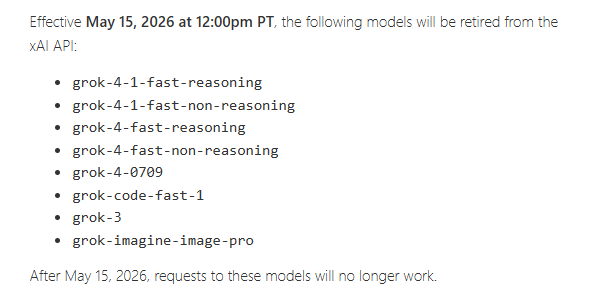

As an interesting side note, the night before the Anthropic announcement, xAI sent out a deprecation notice for Grok 4.1 Fast and several other models providing just two weeks’ notice before shutdown, reported here by @xlr8harder from SpeechMap:

This is terrible @xai. I just spent time and money to migrate to grok 4.1 fast, and you’re disabling it with less than two weeks notice, after releasing it in November, with no migration path to a fast/cheap alternative.

I will never depend on one of your products again.

Here’s SpeechMap’s detailed explanation of how they selected Grok 4.1 Fast for their project in March.

Were xAI serving those models out of Colossus 1?

xAI owner Elon Musk (who previously delighted in calling Anthropic “Misanthropic”) tweeted the following:

By way of background for those who care, I spent a lot of time last week with senior members of the Anthropic team to understand what they do to ensure Claude is good for humanity and was impressed. [...]

After that, I was ok leasing Colossus 1 to Anthropic, as SpaceXAI had already moved training to Colossus 2.

And then shortly afterwards:

Just as SpaceX launches hundreds of satellites for competitors with fair terms and pricing, we will provide compute to AI companies that are taking the right steps to ensure it is good for humanity.

We reserve the right to reclaim the compute if their AI engages in actions that harm humanity.

Presumably the criteria for “harm humanity” are decided by Elon himself. Sounds like a new form of supply chain risk for Anthropic to me!

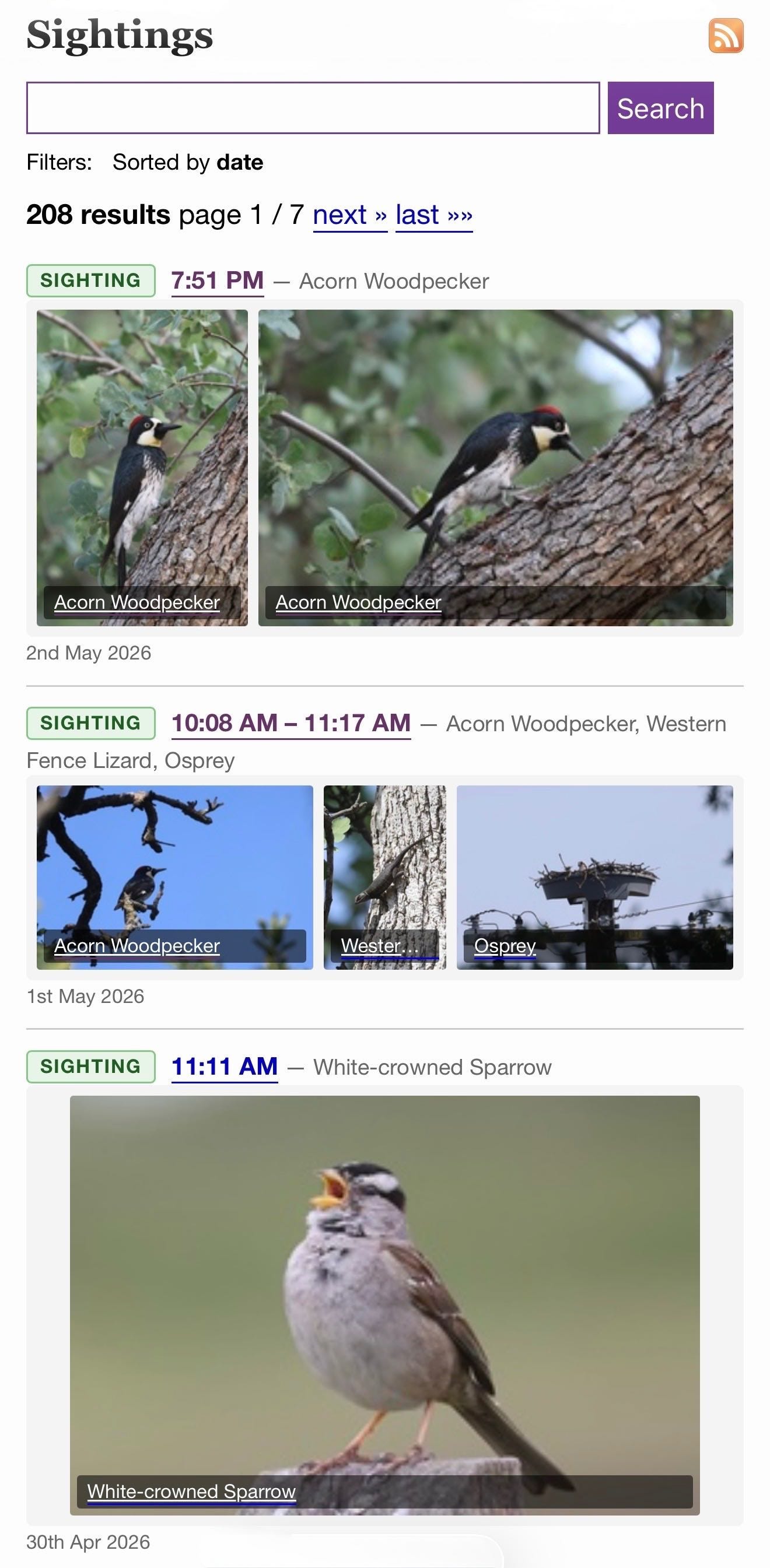

Tool: iNaturalist Sightings

I wanted to see my iNaturalist observations - across two separate accounts - grouped by when they occurred. I’m camping this weekend so I built this entirely on my phone using Claude Code for web.

I started by building an inaturalist-clumper Python CLI for fetching and “clumping” observations - by default clumps use observations within 2 hours and 5km of each other.
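As a rough illustration of that clumping rule, here is a minimal Python sketch. It is not the code from inaturalist-clumper: the Observation fields, the haversine distance and the greedy "compare against the previous observation" strategy are all assumptions made for the example, as are the sample coordinates.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt


@dataclass
class Observation:
    # Hypothetical minimal record; real iNaturalist observations carry far more fields.
    id: int
    when: datetime
    lat: float
    lon: float


def haversine_km(a: Observation, b: Observation) -> float:
    """Great-circle distance between two observations in kilometres."""
    lat1, lon1, lat2, lon2 = map(radians, (a.lat, a.lon, b.lat, b.lon))
    h = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * 6371.0 * asin(sqrt(h))


def clump(observations, max_gap=timedelta(hours=2), max_km=5.0):
    """Greedy clumping: walk observations in time order and start a new clump
    whenever the next one is too late or too far from the previous one."""
    clumps = []
    for obs in sorted(observations, key=lambda o: o.when):
        if clumps:
            last = clumps[-1][-1]
            if obs.when - last.when <= max_gap and haversine_km(last, obs) <= max_km:
                clumps[-1].append(obs)
                continue
        clumps.append([obs])
    return clumps


# Made-up sample data, purely for illustration.
observations = [
    Observation(1, datetime(2026, 5, 2, 9, 0), 37.50, -122.50),
    Observation(2, datetime(2026, 5, 2, 9, 45), 37.51, -122.49),   # close in time and space
    Observation(3, datetime(2026, 5, 2, 15, 0), 37.51, -122.49),   # same place, hours later
    Observation(4, datetime(2026, 5, 2, 15, 30), 38.50, -122.49),  # roughly 110 km north
]
clump_ids = [[o.id for o in c] for c in clump(observations)]
print(clump_ids)  # [[1, 2], [3], [4]]
```

Comparing against the previous observation only means a long walk can chain into one large clump; comparing against the first observation in each clump would be a stricter alternative.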

Then I set up simonw/inaturalist-clumps as a Git scraping repository to run that tool and record the result to clumps.json.

That JSON file is hosted on GitHub, which means it can be fetched by JavaScript using CORS.

Finally I ran this prompt against my simonw/tools repo:

Build inat-sightings.html - an app that does a fetch() against https://raw.githubusercontent.com/simonw/inaturalist-clumps/refs/heads/main/clumps.json and then displays all of the observations on one page using the https://static.inaturalist.org/photos/538073008/small.jpg small.jpg URLs for the thumbnails - with loading=lazy - but when a thumbnail is clicked showing the large.jpg in an HTML modal. Both small and large should include the common species names if available

Link 2026-05-02 /elsewhere/sightings/:

I have a new camera (a Canon R6 Mark II) so I’m taking a lot more photos of birds. I share my best wildlife photos on iNaturalist, and based on yesterday’s successful prototype I decided to add those to my blog.

I built this feature on my phone using Claude Code for web, as an extension of my beats system for syndicating external content. Here’s the PR and prompt.

As with my other forms of incoming syndicated content, sightings show up on the homepage, the date archive pages, and in site search results.

I back-populated over a decade of iNaturalist sightings, which means that if you search for lemur you’ll see my lemur photos from Madagascar in 2019!

Quote 2026-05-03

We used an automatic classifier which judged sycophancy by looking at whether Claude showed a willingness to push back, maintain positions when challenged, give praise proportional to the merit of ideas, and speak frankly regardless of what a person wants to hear. Most of the time in these situations, Claude expressed no sycophancy—only 9% of conversations included sycophantic behavior (Figure 2). But two domains were exceptions: we saw sycophantic behavior in 38% of conversations focused on spirituality, and 25% of conversations on relationships.

Anthropic, How people ask Claude for personal guidance

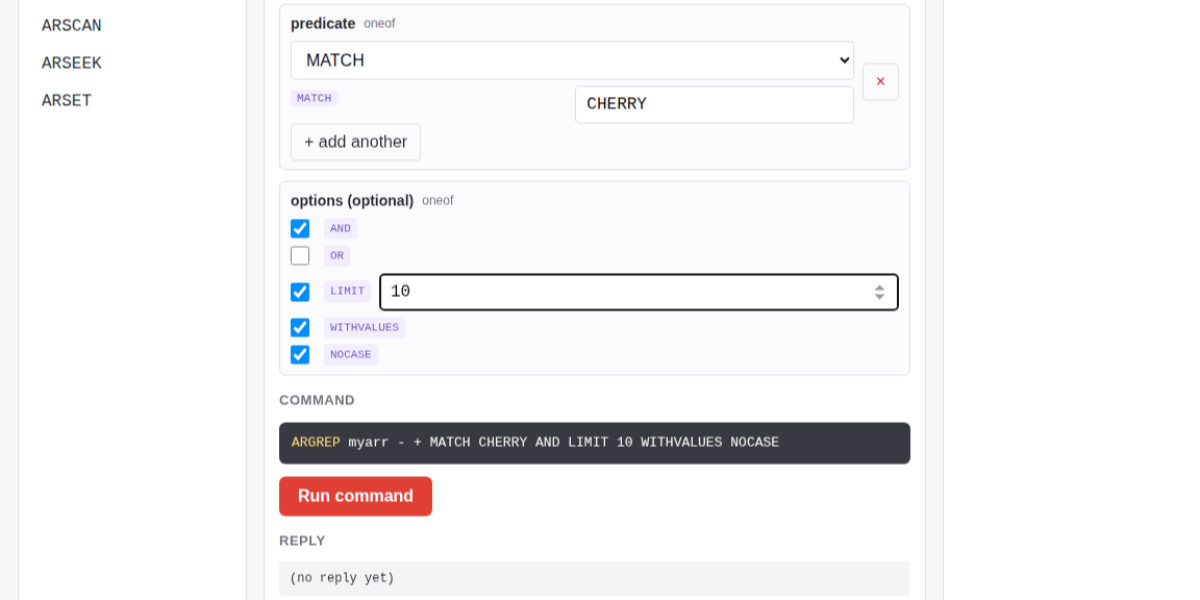

Tool: Redis Array Playground

Salvatore Sanfilippo submitted a PR adding a new data type - arrays - to Redis.

The new commands are ARCOUNT, ARDEL, ARDELRANGE, ARGET, ARGETRANGE, ARGREP, ARINFO, ARINSERT, ARLASTITEMS, ARLEN, ARMGET, ARMSET, ARNEXT, AROP, ARRING, ARSCAN, ARSEEK, ARSET.

The implementation is currently available in a branch, so I had Claude Code for web build this interactive playground for trying out the new commands in a WASM-compiled build of a subset of Redis running in the browser.

The most interesting new command is ARGREP which can run a server-side grep against a range of values in the array using the newly vendored TRE regex library.

Salvatore wrote more about the AI-assisted development process for the array type in Redis array type: short story of a long development.

Research: TRE Python binding — ReDoS robustness demo

If it’s good enough for antirez to add to Redis I figured Ville Laurikari’s TRE regular expression engine was worth exploring in a little more detail.

I had Claude Code build an experimental Python binding (it used ctypes) and try some malicious regular expression attacks against the library. TRE handles those much better than Python’s standard library implementation, thanks mainly to the lack of support for backtracking.
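To make the backtracking problem concrete, here is a small sketch that only exercises Python's standard library re module. It does not use TRE or the experimental binding, and the pattern and input sizes are illustrative choices rather than anything from the research write-up.

```python
import re
import time

# Nested quantifiers give a backtracking engine exponentially many ways to
# split a run of "a" characters before it can conclude there is no match.
EVIL = re.compile(r"(a+)+$")


def time_failed_match(n: int) -> float:
    """Seconds Python's re takes to reject n 'a' characters followed by '!'."""
    subject = "a" * n + "!"
    start = time.perf_counter()
    result = EVIL.match(subject)
    elapsed = time.perf_counter() - start
    assert result is None  # the trailing "!" means this can never match
    return elapsed


# Sizes are kept small on purpose: each extra character roughly doubles the work.
timings = {n: time_failed_match(n) for n in (10, 14, 18, 20)}
for n, seconds in timings.items():
    print(f"n={n:2d}  {seconds:.4f}s")
```

The exact timings depend on the machine, but they should grow steeply with n. An engine that does not backtrack avoids this kind of blow-up on the same input.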

Quote 2026-05-04

[...] Between 2000 and 2024, farmers sold in total a Colorado-sized chunk of land all on their own, 77 times all land on data center property in 2028, and grew more food than ever on what was left. None of this caused any problems for US food access.

And then, in the middle of all this, a farmer in Loudoun County sells a few acres of mediocre hay field to a hyperscaler for ten times its agricultural value, and the response is that we’re running out of farmland.

Andy Masley, pushing back against the “land use” argument against data center construction

Link 2026-05-04 Granite 4.1 3B SVG Pelican Gallery:

IBM released their Granite 4.1 family of LLMs a few days ago. They’re Apache 2.0 licensed and come in 3B, 8B and 30B sizes.

Granite 4.1 LLMs: How They’re Built by Granite team member Yousaf Shah describes the training process in detail.

Unsloth released the unsloth/granite-4.1-3b-GGUF collection of GGUF encoded quantized variants of the 3B model - 21 different model files ranging in size from 1.2GB to 6.34GB.

All 21 of those Unsloth files add up to 51.3GB, which inspired me to finally try an experiment I’ve been wanting to run for ages: prompting “Generate an SVG of a pelican riding a bicycle” against different sized quantized variants of the same model to see what the results would look like.

Honestly, the results are less interesting than I expected. There’s no distinguishable pattern relating quality to size - they’re all pretty terrible!

I’ll likely try this again in the future with a model that’s better at drawing pelicans.

Quote 2026-05-05

So it’s well known that Y Combinator owns some stake in OpenAI. But how big is that stake? This seems like devilishly difficult information to obtain. I asked around and a little birdie who knows several OpenAI investors came back with an answer: Y Combinator owns about 0.6 percent of OpenAI. At OpenAI’s current $852 billion valuation, that’s worth over $5 billion.

John Gruber, Y Combinator’s Stake in OpenAI

Release: llm-echo 0.5a0

New -o thinking 1 option to help test against LLM 0.32a0 and higher.

This plugin provides a fake model called “echo” for LLM which doesn’t run an LLM at all - it’s useful for writing automated tests. You can now do this:

uvx --with llm==0.32a1 --with llm-echo==0.5a0 llm -m echo hi -o thinking 1

This will fake a reasoning block to standard error before returning JSON echoing the prompt.

Release: datasette-llm 0.1a7

Mechanism for configuring default options for specific models.

Part of Datasette’s evolving support mechanism for plugins that use LLMs. It’s now possible to configure a model with default options, e.g. to say all enrichment operations should use a specific model with temperature set to 0.5.

Link 2026-05-05 Our AI started a cafe in Stockholm:

Andon Labs previously started an AI-run retail store in San Francisco. Now they’re running a similar experiment in Stockholm, Sweden, only this time it’s a cafe.

These experiments are interesting, and often throw out amusing anecdotes:

During the first week of inventory, Mona ordered 120 eggs even though the café has no stove. When the staff told her they couldn’t cook them, she suggested using the high-speed oven, until they pointed out the eggs would likely explode. She also tried to solve the problem of fresh tomatoes being spoiled too fast by ordering 22.5 kg of canned tomatoes for the fresh sandwiches. The baristas eventually started a “Hall of Shame”, a shelf visible to customers with all the weird things Mona ordered, including 6,000 napkins, 3,000 nitrile gloves, 9L coconut milk, and industrial-sized trash bags.

Where they lose their shine is when these AI managers start wasting the time of human beings who have not opted into the experiment:

She also successfully applied for an outdoor seating permit through the Police e-service, which didn’t require BankID. Her first submission included a sketch she had generated herself, despite having never seen the street outside the café. Unsurprisingly, the Police sent it back for revision. [...]

When she makes a mistake, she often sends multiple emails to suppliers with the subject “EMERGENCY” to cancel or change the order.

I don’t think it’s ethical to run experiments like this that affect real-world systems and steal time from people.

I’m reminded of the incident last year where the AI Village experiment infuriated Rob Pike by sending him unsolicited gratitude emails as an “act of kindness”. That was just an unwanted email - asking suppliers to correct mistakes that were made without a human-in-the-loop or wasting police time with slop diagrams feels a whole lot worse to me.

I think experiments like this need to keep their own human operators in-the-loop for outbound actions that affect other people.

Release: datasette-referrer-policy 0.1

The OpenStreetMap tiles on the Datasette global-power-plants demo weren’t displaying correctly. This turned out to be caused by two bugs.

The first is that the CAPTCHA I added to that site a few weeks ago was triggering for the .json fetch requests used by the map plugin, and since those weren’t HTML the user was not being asked to solve them. Here’s the fix.

The second was that OpenStreetMap quite reasonably block tile requests from sites that use a Referrer-Policy: no-referrer header.

Datasette does this by default, and I didn’t want to change that default on people without warning - so I had Codex + GPT-5.5 build me a new plugin to help set that header to another value.
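For a sense of what overriding that header involves, here is a generic ASGI middleware sketch in plain Python. It is not the plugin's actual code: the function names, the demo app and the default value of strict-origin-when-cross-origin are assumptions for illustration. Datasette plugins can wrap the ASGI application through the asgi_wrapper hook, though whether this plugin works that way is also an assumption.

```python
import asyncio


def referrer_policy_middleware(app, policy="strict-origin-when-cross-origin"):
    """Wrap an ASGI app so every HTTP response carries the given Referrer-Policy."""

    async def wrapped(scope, receive, send):
        if scope["type"] != "http":
            await app(scope, receive, send)
            return

        async def send_with_policy(message):
            if message["type"] == "http.response.start":
                # Drop any existing Referrer-Policy header, then add ours.
                headers = [
                    (name, value)
                    for name, value in message.get("headers", [])
                    if name.lower() != b"referrer-policy"
                ]
                headers.append((b"referrer-policy", policy.encode("latin-1")))
                message = {**message, "headers": headers}
            await send(message)

        await app(scope, receive, send_with_policy)

    return wrapped


async def demo_app(scope, receive, send):
    # Hypothetical stand-in for an application that sends no-referrer by default.
    await send({
        "type": "http.response.start",
        "status": 200,
        "headers": [(b"content-type", b"text/plain"), (b"referrer-policy", b"no-referrer")],
    })
    await send({"type": "http.response.body", "body": b"ok"})


async def collect_response(app):
    """Run a single fake GET request through the app and collect the ASGI messages."""
    sent = []

    async def receive():
        return {"type": "http.request", "body": b"", "more_body": False}

    async def send(message):
        sent.append(message)

    await app({"type": "http", "method": "GET", "path": "/"}, receive, send)
    return sent


messages = asyncio.run(collect_response(referrer_policy_middleware(demo_app)))
response_headers = dict(messages[0]["headers"])
print(response_headers[b"referrer-policy"].decode())  # strict-origin-when-cross-origin
```

Replacing the header rather than appending a second one avoids sending two conflicting Referrer-Policy values.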

Tool: GitHub Repo Stats

One of the things I always look for when evaluating a new GitHub repository is the number of commits it has... but that number isn’t visible on GitHub’s mobile site layout. I built this tool to fix that, using this prompt:

Given a GitHub repo URL or foo/bar repo ID show information about that repo absorbed via wither REST or graphql CORS fetch() including the number of commits in the repo and other useful stats

Example output for simonw/datasette and simonw/llm.
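One common way to get a commit count from the GitHub REST API is to request the commits list with per_page=1 and read the page number of the rel="last" entry in the Link response header. The sketch below shows only the header parsing, so it runs without network access. It is not necessarily how the tool does it, and the sample header, repository ID and helper names are made up for illustration.

```python
import re
from urllib.parse import parse_qs, urlparse


def commits_url(repo: str) -> str:
    """REST URL that lists one commit per page for an owner/name repo ID."""
    return f"https://api.github.com/repos/{repo}/commits?per_page=1"


def last_page_from_link(link_header: str):
    """Return the page number of the rel="last" entry in a Link header, or None."""
    for part in link_header.split(","):
        match = re.match(r'\s*<([^>]+)>\s*;\s*rel="([^"]+)"', part)
        if match and match.group(2) == "last":
            query = parse_qs(urlparse(match.group(1)).query)
            return int(query["page"][0])
    return None


# Made-up header in the shape GitHub uses for paginated responses.
sample_link = (
    '<https://api.github.com/repositories/1/commits?per_page=1&page=2>; rel="next", '
    '<https://api.github.com/repositories/1/commits?per_page=1&page=1234>; rel="last"'
)
print(commits_url("foo/bar"))
print(last_page_from_link(sample_link))  # 1234
```

With one commit per page, the last page number matches the number of commits reachable from the default branch. A response with no Link header means everything fit on a single page.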

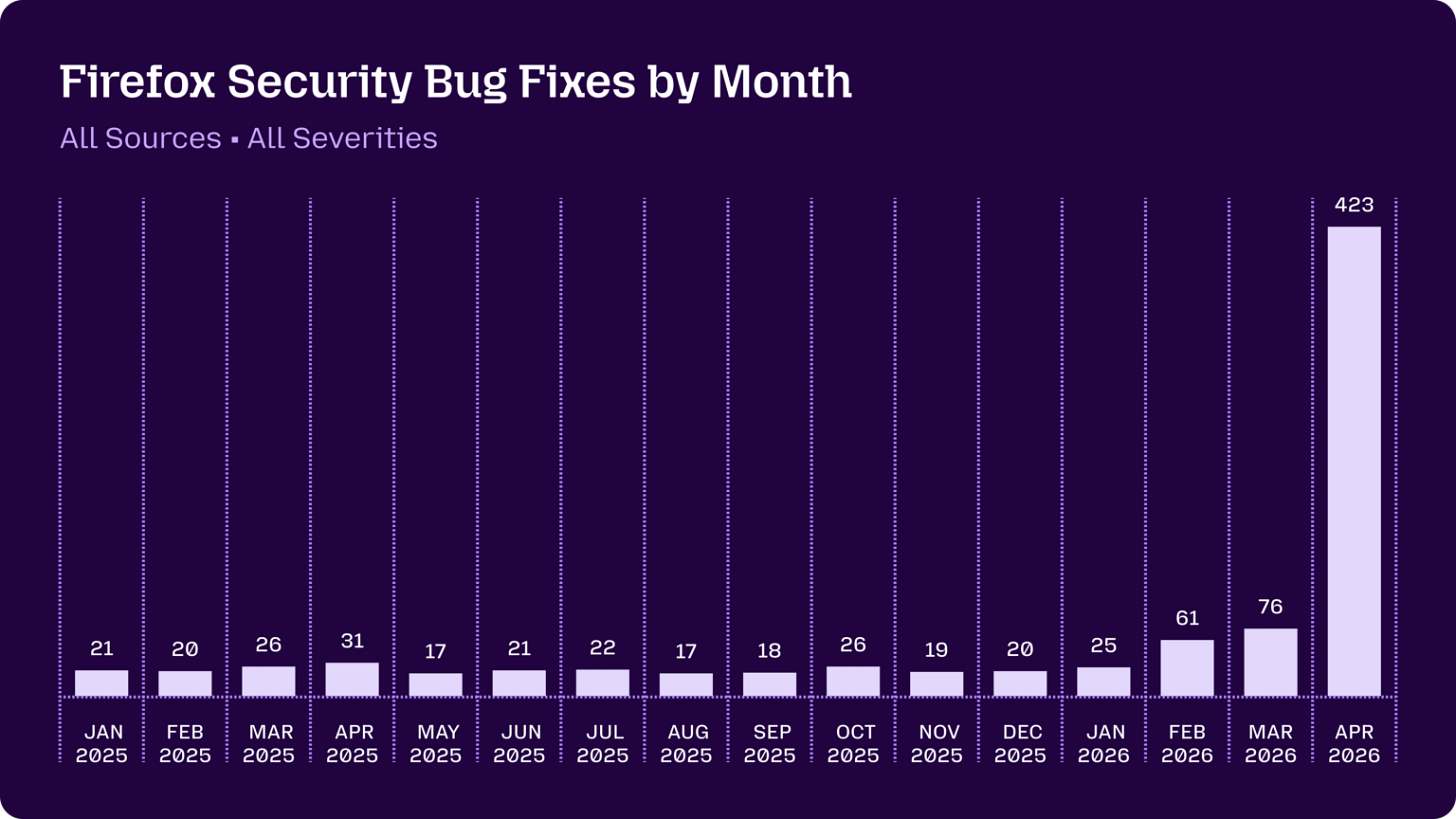

Link 2026-05-07 Behind the Scenes Hardening Firefox with Claude Mythos Preview:

Fascinating, in-depth details on how Mozilla used their access to the Claude Mythos preview to locate and then fix hundreds of vulnerabilities in Firefox:

Suddenly, the bugs are very good

Just a few months ago, AI-generated security bug reports to open source projects were mostly known for being unwanted slop. Dealing with reports that look plausibly correct but are wrong imposes an asymmetric cost on project maintainers: it’s cheap and easy to prompt an LLM to find a “problem” in code, but slow and expensive to respond to it.

It is difficult to overstate how much this dynamic changed for us over a few short months. This was due to a combination of two main factors. First, the models got a lot more capable. Second, we dramatically improved our techniques for harnessing these models — steering them, scaling them, and stacking them to generate large amounts of signal and filter out the noise.

They include some detailed bug descriptions too, including a 20-year-old XSLT bug and a 15-year-old bug in the <legend> element.

A lot of the attempts made by the harness were blocked by Firefox’s existing defense-in-depth measures, which is reassuring.

Mozilla were fixing around 20-30 security bugs in Firefox per month through 2025. That jumped to 423 in April.

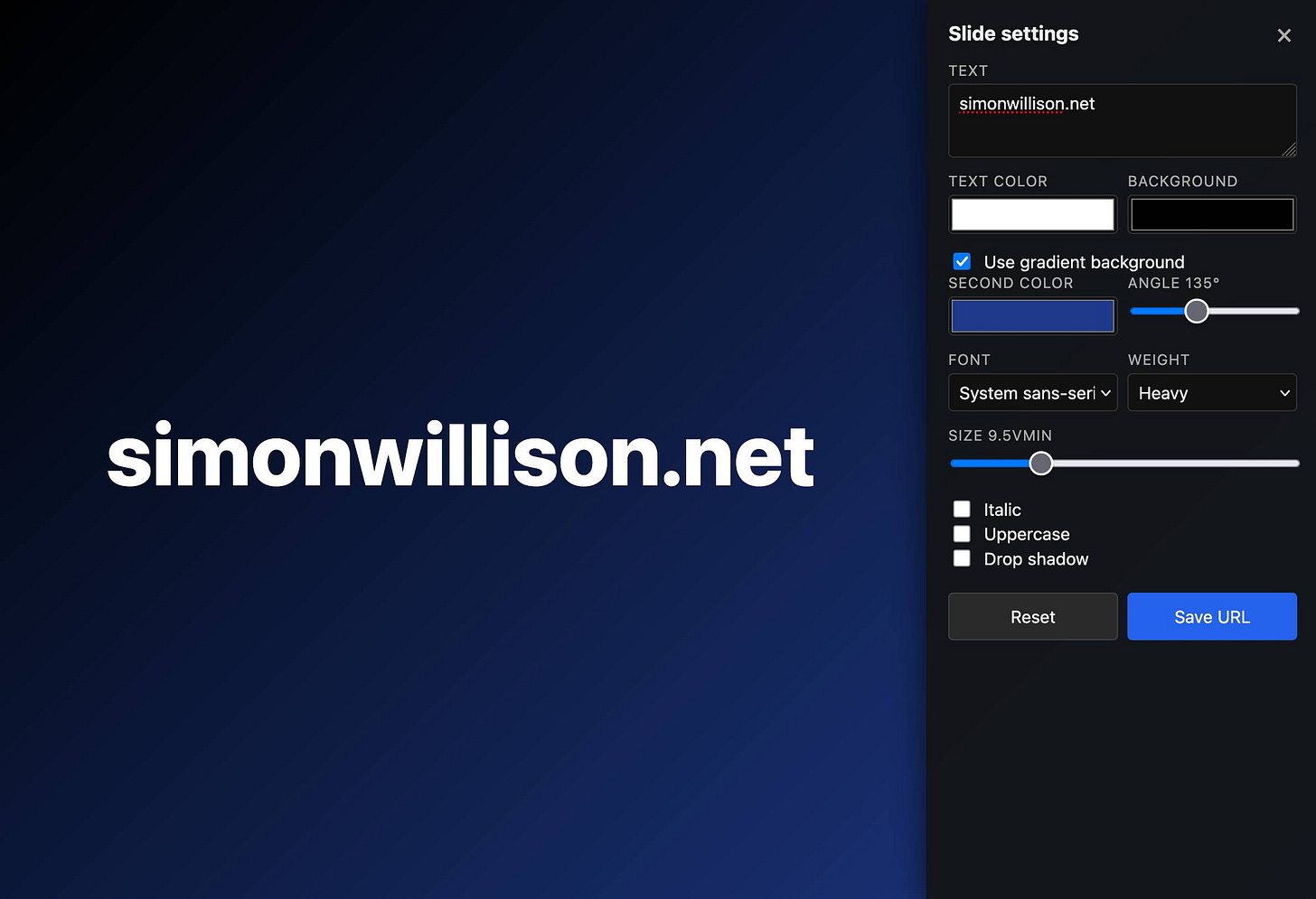

Tool: Big Words

I’m using my vibe coded macOS presentations tool to put together a talk, and I wanted to add a slide with some text on it. The tool only accepts URLs, so I put together a quick page that accepts query string arguments and turns them into a simple slide.

Here’s an example: https://tools.simonwillison.net/big-words?text=simonwillison.net&gradient=1&size=9.5

Double click or double tap the page to access a form for modifying the different options.
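Since the slide is driven entirely by query string arguments, URLs for it can be generated from a script. This sketch uses only Python's standard library. The parameter names text, gradient and size come from the example URL above, while the helper function is hypothetical and how the page treats other values or encodings is an assumption.

```python
from urllib.parse import parse_qs, urlencode, urlparse

example = "https://tools.simonwillison.net/big-words?text=simonwillison.net&gradient=1&size=9.5"

# parse_qs maps each parameter name to a list of values; keep the first of each.
params = {name: values[0] for name, values in parse_qs(urlparse(example).query).items()}
print(params)


def big_words_url(text: str, **options) -> str:
    """Build a slide URL; options beyond those in the example are not verified."""
    query = urlencode({"text": text, **options})
    return f"https://tools.simonwillison.net/big-words?{query}"


print(big_words_url("Hello world", gradient=1, size=9.5))
```

urlencode encodes spaces as +, which standard query string parsers decode back to spaces.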

Release: llm-gemini 0.31

gemini-3.1-flash-lite is no longer a preview.

Here’s my write-up of the Gemini 3.1 Flash-Lite Preview model back in March. I don’t believe this new non-preview model has changed since then.

If you find this newsletter useful, please consider sponsoring me via GitHub. $10/month and higher sponsors get a monthly newsletter with my summary of the most important trends of the past 30 days - here are previews from January and February and March.

The Jenny Wen point is the one that keeps rattling around in my head. We built entire professional disciplines around the assumption that implementation is expensive. Design reviews, sprint planning, specification documents, architecture committees. All of them exist because building the wrong thing used to cost months.

If building costs hours instead of months, a lot of that upstream apparatus becomes overhead rather than insurance. The bottleneck moves from "can we build it" to "should we build it" and those are very different organisational muscles.

The evaluation point is equally interesting. Simon's instinct that usage matters more than code quality as a trust signal is basically the shift from inspection goods to experience goods. You cannot tell from looking at the code whether it is good. You can tell from running it for two weeks. That changes how software earns trust, and it probably changes how open source reputation works too. Stars and commit counts meant something when they implied human attention per commit.

I have been designing and coding for 20 years and this new world has made it a lot more possible for me to work through and try my ideas more quickly and see what sticks.

I'm about to release my first Mac app and have had a very similar feeling, "If this code is going to run on someone else's machine, I have to make sure it's right." I know how it works throughout all the 'agentic engineering' I did, but I don't know every line. I'm currently going through a process of reviewing every line of code (I have a spreadsheet!) and making sure I understand what's going on. I make edits along the way, nothing substantial but adjustments, improvements, *legibility* type changes so the code is *good* (read legible).

As much as I am becoming more and more trusting of these tools, I have a very heavy feeling that I cannot release this until I know every inch of it myself.