LLM 0.13: The annotated release notes

And was that George Carlin "AI special" actually written by AI?

In this newsletter:

LLM 0.13: The annotated release notes

Weeknotes: datasette-test, datasette-build, PSF board retreat

Plus 14 links and 4 quotations and 1 TIL

LLM 0.13: The annotated release notes - 2024-01-26

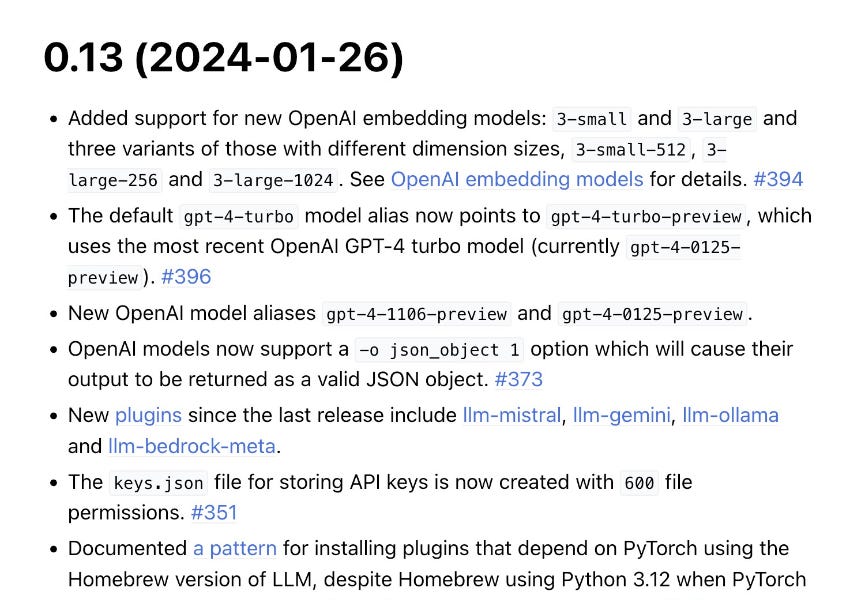

I just released LLM 0.13, the latest version of my LLM command-line tool for working with Large Language Models - both via APIs and running models locally using plugins.

Here are the annotated release notes for the new version.

Added support for new OpenAI embedding models: 3-small and 3-large and three variants of those with different dimension sizes, 3-small-512, 3-large-256 and 3-large-1024. See OpenAI embedding models for details. #394

The original inspiration for shipping a new release was OpenAI's announcement of new models yesterday: New embedding models and API updates.

I wrote a guide to embeddings in Embeddings: What they are and why they matter. Until recently the only available OpenAI embedding model was ada-002 - released in December 2022 and now feeling a little bit long in the tooth.

The new 3-small model is similar to ada-002 but massively less expensive (a fifth of the price) and with higher benchmark scores.

3-large has even higher benchmark scores, but also produces much bigger vectors. Where ada-002 and 3-small produce 1536-dimensional vectors, 3-large produces 3072 dimensions!

Each dimension corresponds to a floating point number in the array of numbers produced when you embed a piece of content. The more numbers, the more storage space needed for those vectors and the longer any cosine-similarity calculations will take against them.
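To make that cost concrete, here is a minimal sketch of a cosine-similarity calculation along with a rough storage estimate. It assumes each dimension is stored as a 4-byte float, which is an assumption for illustration and not a statement about how any particular tool stores vectors.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def storage_bytes(dimensions, bytes_per_float=4):
    """Bytes needed for one vector, assuming 4-byte floats by default."""
    return dimensions * bytes_per_float


# Work per comparison and storage per vector both grow with the dimension count
for dims in (256, 1536, 3072):
    print(dims, "dimensions ->", storage_bytes(dims), "bytes per vector")
```

Both the loop inside cosine_similarity() and the storage figure scale linearly with the number of dimensions, so halving the vector size roughly halves both costs.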

Here's where things get really interesting though: since people often want to trade quality for smaller vector size, OpenAI now support a way of having their models return much smaller vectors.

LLM doesn't yet have a mechanism for passing options to embedding models (unlike language models which can take -o setting value options), but I still wanted to make the new smaller sizes available.

That's why I included 3-small-512, 3-large-256 and 3-large-1024: those are variants of the core models hard-coded to the specified vector size.

In the future I'd like to support options for embedding models, but this is a useful stop-gap.
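As a rough illustration of the stop-gap idea, here is a sketch of how a size-suffixed name could be split into a model name plus a vector size. The parse_embedding_alias() and embedding_request() helpers are hypothetical and are not LLM's actual code. The text-embedding-3-small and text-embedding-3-large model names and the dimensions parameter come from OpenAI's embeddings API.

```python
def parse_embedding_alias(alias):
    """Split an alias like "3-large-256" into (API model name, dimensions).

    Hypothetical helper for illustration only, not LLM's implementation.
    """
    parts = alias.split("-")
    # A trailing number after the size name is treated as a vector size
    if len(parts) == 3 and parts[2].isdigit():
        return "text-embedding-" + "-".join(parts[:2]), int(parts[2])
    return "text-embedding-" + alias, None


def embedding_request(alias, text):
    """Build keyword arguments for an embeddings API call."""
    model, dimensions = parse_embedding_alias(alias)
    kwargs = {"model": model, "input": text}
    if dimensions is not None:
        # Ask the API for a shortened vector of this size
        kwargs["dimensions"] = dimensions
    return kwargs


print(embedding_request("3-small-512", "hello world"))
print(embedding_request("3-large", "hello world"))
```

A real options mechanism would let the size be passed at call time instead of being baked into the name.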

The default gpt-4-turbo model alias now points to gpt-4-turbo-preview, which uses the most recent OpenAI GPT-4 turbo model (currently gpt-4-0125-preview). #396

Also announced yesterday - gpt-4-0125-preview is the latest version of the GPT-4 model which, according to OpenAI, "completes tasks like code generation more thoroughly than the previous preview model and is intended to reduce cases of “laziness” where the model doesn’t complete a task".

This is technically a breaking change - the gpt-4-turbo LLM alias used to point to the older model, but now points to OpenAI's gpt-4-turbo-preview alias which in turn points to the latest model.

New OpenAI model aliases gpt-4-1106-preview and gpt-4-0125-preview.

These aliases let you call those models explicitly:

llm -m gpt-4-0125-preview 'Write a lot of code without being lazy'

OpenAI models now support a -o json_object 1 option which will cause their output to be returned as a valid JSON object. #373

This is a fun feature, which uses an OpenAI option that claims to guarantee valid JSON output.

Weirdly you have to include the word "json" in your prompt when using this or OpenAI will return an error!

llm -m gpt-4-turbo \
  '3 names and short bios for pet pelicans in JSON' \
  -o json_object 1

That returned the following for me just now:

{
  "pelicans": [
    {
      "name": "Gus",
      "bio": "Gus is a curious young pelican with an insatiable appetite for adventure. He's known amongst the dockworkers for playfully snatching sunglasses. Gus spends his days exploring the marina and is particularly fond of performing aerial tricks for treats."
    },
    {
      "name": "Sophie",
      "bio": "Sophie is a graceful pelican with a gentle demeanor. She's become somewhat of a local celebrity at the beach, often seen meticulously preening her feathers or posing patiently for tourists' photos. Sophie has a special spot where she likes to watch the sunset each evening."
    },
    {
      "name": "Captain Beaky",
      "bio": "Captain Beaky is the unofficial overseer of the bay, with a stern yet endearing presence. As a seasoned veteran of the coastal skies, he enjoys leading his flock on fishing expeditions and is always the first to spot the fishing boats returning to the harbor. He's respected by both his pelican peers and the fishermen alike."
    }
  ]
}

The JSON schema it uses is entirely made up. You can prompt it with an example schema and it will probably stick to it.
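Since the output is only guaranteed to be valid JSON, not to follow any particular schema, it can be worth checking the shape before relying on it. Here is a minimal sketch: check_pelicans() is a hypothetical helper, and the "pelicans", "name" and "bio" keys are taken from the example output above.

```python
import json


def check_pelicans(raw_output, required_keys=("name", "bio")):
    """Parse model output and report any items missing the keys we asked for."""
    data = json.loads(raw_output)  # raises json.JSONDecodeError if invalid
    problems = []
    for index, item in enumerate(data.get("pelicans", [])):
        missing = [key for key in required_keys if key not in item]
        if missing:
            problems.append((index, missing))
    return data, problems


sample = '{"pelicans": [{"name": "Gus", "bio": "Curious"}, {"name": "Sophie"}]}'
data, problems = check_pelicans(sample)
print(problems)  # [(1, ['bio'])]
```

An empty problems list means every item had the expected keys. Anything else is a signal to retry the prompt or tighten the example schema.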

New plugins since the last release include llm-mistral, llm-gemini, llm-ollama and llm-bedrock-meta.

I wrote the first two, but llm-ollama is by Sergey Alexandrov and llm-bedrock-meta is by Fabian Labat. My plugin writing tutorial is starting to pay off!

The keys.json file for storing API keys is now created with 600 file permissions. #351

A neat suggestion from Christopher Bare.
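For anyone curious what that looks like in Python, here is one way to create a file with 600 permissions on a POSIX system. This is a sketch of the general technique and not necessarily how LLM does it. The write_private_json() helper and the example key are made up for illustration.

```python
import json
import os
import stat
import tempfile


def write_private_json(path, data):
    """Write JSON to path, readable and writable by the file's owner only."""
    # Passing the mode to os.open applies it when the file is created,
    # so a new file is never briefly readable by other users
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w") as fp:
        json.dump(data, fp, indent=2)
    # If the file already existed with looser permissions, tighten them
    os.chmod(path, 0o600)


with tempfile.TemporaryDirectory() as tmp:
    keys_path = os.path.join(tmp, "keys.json")
    write_private_json(keys_path, {"openai": "example-key"})
    mode = stat.S_IMODE(os.stat(keys_path).st_mode)
    print(oct(mode))
```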

LLM is packaged for Homebrew. The Homebrew package upgraded to Python 3.12 a while ago, which caused surprising problems because it turned out PyTorch - a dependency of some LLM plugins - doesn't have a stable build out for 3.12 yet.

Christian Bush shared a workaround in an LLM issue thread, which I've now added to the documentation.

Underlying OpenAI Python library has been upgraded to >1.0. It is possible this could cause compatibility issues with LLM plugins that also depend on that library. #325

This was the bulk of the work. OpenAI released their 1.0 Python library a couple of months ago and it had a large number of breaking changes compared to the previous release.

At the time I pinned LLM to the previous version to paper over the breaks, but this meant you could not install LLM in the same environment as some other library that needed the more recent OpenAI version.

There were a lot of changes! You can find a blow-by-blow account of the upgrade in my pull request that bundled the work.

Arrow keys now work inside the llm chat command. #376

The recipe for doing this is so weird:

import readline
readline.parse_and_bind("\\e[D: backward-char")
readline.parse_and_bind("\\e[C: forward-char")

I asked on Mastodon if anyone knows of a less obscure solution, but it looks like that might be the best we can do!

LLM_OPENAI_SHOW_RESPONSES=1 environment variable now outputs much more detailed information about the HTTP request and response made to OpenAI (and OpenAI-compatible) APIs. #404

This feature worked prior to the OpenAI >1.0 upgrade by tapping in to some requests internals. OpenAI dropped requests for httpx so I had to rebuild this feature from scratch.

I ended up getting a TIL out of it: Logging OpenAI API requests and responses using HTTPX.

Dropped support for Python 3.7.

I wanted to stop seeing a pkg_resources related warning, which meant switching to Python 3.8's importlib.metadata. Python 3.7 hit end-of-life for support back in June 2023 so I think this is an OK change to make.
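As an illustration of the kind of thing importlib.metadata replaces pkg_resources for, here is a small sketch that looks up an installed package's version. The installed_version() helper is hypothetical and is not taken from LLM's code.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(package_name):
    """Return the installed version string for a package, or None if absent."""
    try:
        return version(package_name)
    except PackageNotFoundError:
        return None


# Prints a version string if the package is installed, otherwise None
print(installed_version("llm"))
print(installed_version("surely-not-an-installed-package-xyz"))
```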

Link 2024-01-26 Did an AI write that hour-long “George Carlin” special? I’m not convinced.

Two weeks ago "Dudesy", a comedy podcast which claims to be controlled and written by an AI, released an hour-long YouTube video in extremely poor taste called "George Carlin: I’m Glad I’m Dead". They used voice cloning to produce a stand-up comedy set featuring the late George Carlin, claiming to also use AI to write all of the content after training it on everything in the Carlin back catalog.

Unsurprisingly this has resulted in a massive amount of angry coverage, including from Carlin's own daughter (the Carlin estate have filed a lawsuit). Resurrecting people without their permission is clearly abhorrent.

But... did AI even write this? The author of this piece, Kyle Orland, started digging in.

It turns out the Dudesy podcast has been running with this premise since it launched in early 2022 - long before any LLM was capable of producing a well-crafted joke. The structure of the Carlin set goes way beyond anything I've seen from even GPT-4. And in a follow-up podcast episode, Dudesy co-star Chad Kultgen gave an O. J. Simpson-style "if I did it" semi-confession that described a much more likely authorship process.

I think this is a case of a human-pretending-to-be-an-AI - an interesting twist, given that the story started out being about an-AI-imitating-a-human.

I consulted with Kyle on this piece, and got a couple of neat quotes in there:

"Either they have genuinely trained a custom model that can generate jokes better than any model produced by any other AI researcher in the world... or they're still doing the same bit they started back in 2022"

"The real story here is… everyone is ready to believe that AI can do things, even if it can't. In this case, it's pretty clear what's going on if you look at the wider context of the show in question. But anyone without that context, [a viewer] is much more likely to believe that the whole thing was AI-generated… thanks to the massive ramp up in the quality of AI output we have seen in the past 12 months."

Weeknotes: datasette-test, datasette-build, PSF board retreat - 2024-01-21

I wrote about Page caching and custom templates in my last weeknotes. This week I wrapped up that work, modifying datasette-edit-templates to be compatible with the jinja2_environment_from_request() plugin hook. This means you can edit templates directly in Datasette itself and have those served either for the full instance or just for the instance when served from a specific domain (the Datasette Cloud case).

Testing plugins with Playwright

As Datasette 1.0 draws closer, I've started thinking about plugin compatibility. This is heavily inspired by my work on Datasette Cloud, which has been running the latest Datasette alphas for several months.

I spotted that datasette-cluster-map wasn't working correctly on Datasette Cloud, as it hadn't been upgraded to account for JSON API changes in Datasette 1.0.

datasette-cluster-map 0.18 fixed that, while continuing to work with previous versions of Datasette. More importantly, it introduced Playwright tests to exercise the plugin in a real Chromium browser running in GitHub Actions.

I've been wanting to establish a good pattern for this for a while, since a lot of Datasette plugins include JavaScript behaviour that warrants browser automation testing.

Alex Garcia figured this out for datasette-comments - inspired by his code I wrote up a TIL on Writing Playwright tests for a Datasette Plugin which I've now also used in datasette-search-all.

datasette-test

datasette-test is a new library that provides testing utilities for Datasette plugins. So far it offers two:

from datasette_test import Datasette
import pytest

@pytest.mark.asyncio
async def test_datasette():
    ds = Datasette(plugin_config={"my-plugin": {"config": "goes here"}})

This datasette_test.Datasette class is a subclass of Datasette which helps write tests that work against both Datasette <1.0 and Datasette >=1.0a8 (releasing shortly). The way plugin configuration works is changing, and this plugin_config= parameter papers over that difference for plugin tests.

The other utility is a wait_until_responds("http://localhost:8001") function. This can be used to wait until a server has started, useful for testing with Playwright. I extracted this from Alex's datasette-comments tests.
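The general polling pattern behind a function like that can be sketched using only the standard library. This is not the datasette-test implementation: the timeout and interval parameters here are assumptions chosen for illustration.

```python
import time
import urllib.error
import urllib.request


def wait_until_responds(url, timeout=5.0, interval=0.1):
    """Poll url until the server answers, or raise after timeout seconds."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            urllib.request.urlopen(url, timeout=interval + 1).close()
            return
        except urllib.error.HTTPError:
            # Any HTTP status, even an error one, means the server is up
            return
        except (urllib.error.URLError, OSError):
            # Connection refused or similar: the server is not ready yet
            if time.monotonic() >= deadline:
                raise AssertionError(f"{url} did not respond within {timeout}s")
            time.sleep(interval)
```

In a test fixture you would start the server process first, call this function, and only then hand the URL to the browser.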

datasette-build

So far this is just the skeleton of a new tool. I plan for datasette-build to offer comprehensive support for converting a directory full of static data files - JSON, TSV, CSV and more - into a SQLite database, and eventually to other database backends as well.

So far it's pretty minimal, but my goal is to use plugins to provide optional support for further formats, such as GeoJSON or Parquet or even .xlsx.

I really like using GitHub to keep smaller (less than 1GB) datasets under version control. My plan is for datasette-build to support that pattern, making it easy to load version-controlled data files into a SQLite database you can then query directly.
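To show the shape of the pattern, here is a minimal sketch that loads every CSV file in a directory into its own SQLite table using only the standard library. It is not datasette-build's code. The build_database() function and the example file name are made up, and every value is stored as text.

```python
import csv
import pathlib
import sqlite3
import tempfile


def quote(identifier):
    """Quote a SQLite identifier so names with spaces or quotes still work."""
    return '"{}"'.format(identifier.replace('"', '""'))


def build_database(data_dir, db_path):
    """Load every .csv file in data_dir into a table named after the file."""
    conn = sqlite3.connect(db_path)
    for csv_path in sorted(pathlib.Path(data_dir).glob("*.csv")):
        with open(csv_path, newline="") as fp:
            rows = list(csv.reader(fp))
        if not rows:
            continue
        header, records = rows[0], rows[1:]
        columns = ", ".join(quote(name) for name in header)
        placeholders = ", ".join("?" for _ in header)
        table = quote(csv_path.stem)
        conn.execute(f"CREATE TABLE {table} ({columns})")
        conn.executemany(f"INSERT INTO {table} VALUES ({placeholders})", records)
    conn.commit()
    return conn


with tempfile.TemporaryDirectory() as tmp:
    pathlib.Path(tmp, "pelicans.csv").write_text("name,age\nGus,3\nSophie,5\n")
    conn = build_database(tmp, ":memory:")
    print(conn.execute("SELECT name FROM pelicans ORDER BY name").fetchall())
```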

PSF board in-person meeting

I spent the last two days of this week at the annual Python Software Foundation in-person board meeting. It's been fantastic catching up with the other board members over more than just a Zoom connection, and we had a very thorough two days figuring out strategy for the next year and beyond.

Blog entries

Publish Python packages to PyPI with a python-lib cookiecutter template and GitHub Actions

What I should have said about the term Artificial Intelligence

Releases

datasette-edit-templates 0.4.3 - 2024-01-17

Plugin allowing Datasette templates to be edited within Datasette

datasette-test 0.2 - 2024-01-16

Utilities to help write tests for Datasette plugins and applications

datasette-cluster-map 0.18.1 - 2024-01-16

Datasette plugin that shows a map for any data with latitude/longitude columns

datasette-build 0.1a0 - 2024-01-15

Build a directory full of files into a SQLite database

datasette-auth-tokens 0.4a7 - 2024-01-13

Datasette plugin for authenticating access using API tokens

datasette-search-all 1.1.2 - 2024-01-08

Datasette plugin for searching all searchable tables at once

TILs

Publish releases to PyPI from GitHub Actions without a password or token - 2024-01-15

Using pprint() to print dictionaries while preserving their key order - 2024-01-15

Using expect() to wait for a selector to match multiple items - 2024-01-13

literalinclude with markers for showing code in documentation - 2024-01-10

Writing Playwright tests for a Datasette Plugin - 2024-01-09

How to get Cloudflare to cache HTML - 2024-01-09

Running Varnish on Fly - 2024-01-08

Quote 2024-01-18

Tools are the things we build that we don't ship - but that very much affect the artifact that we develop.

It can be tempting to either shy away from developing tooling entirely or (in larger organizations) to dedicate an entire organization to it.

In my experience, tooling should be built by those using it.

This is especially true for tools that improve the artifact by improving understanding: the best time to develop a debugger is when debugging!

Link 2024-01-19 AWS Fixes Data Exfiltration Attack Angle in Amazon Q for Business:

An indirect prompt injection (where the AWS Q bot consumes malicious instructions) could result in Q outputting a markdown link to a malicious site that exfiltrated the previous chat history in a query string.

Amazon fixed it by preventing links from being output at all - apparently Microsoft 365 Chat uses the same mitigation.

Link 2024-01-20 DSF calls for applicants for a Django Fellow:

The Django Software Foundation employs contractors to manage code reviews and releases, responsibly handle security issues, coach new contributors, triage tickets and more.

This is the Django Fellows program, which is now ten years old and has proven enormously impactful.

Mariusz Felisiak is moving on after five years and the DSF are calling for new applicants, open to anywhere in the world.

Quote 2024-01-20

And now, in Anno Domini 2024, Google has lost its edge in search. There are plenty of things it can’t find. There are compelling alternatives. To me this feels like a big inflection point, because around the stumbling feet of the Big Tech dinosaurs, the Web’s mammals, agile and flexible, still scurry. They exhibit creative energy and strongly-flavored voices, and those voices still sometimes find and reinforce each other without being sock puppets of shareholder-value-focused private empires.

Link 2024-01-21 NYT Flash-based visualizations work again:

The New York Times are using the open source Ruffle Flash emulator - built using Rust, compiled to WebAssembly - to get their old archived data visualization interactives working again.

Quote 2024-01-22

We estimate the supply-side value of widely-used OSS is $4.15 billion, but that the demand-side value is much larger at $8.8 trillion. We find that firms would need to spend 3.5 times more on software than they currently do if OSS did not exist. [...] Further, 96% of the demand-side value is created by only 5% of OSS developers.

The Value of Open Source Software, Harvard Business School Strategy Unit

Link 2024-01-22 Python packaging must be getting better - a datapoint:

Luke Plant reports on a recent project he developed on Linux using a requirements.txt file and some complex binary dependencies - Qt5 and VTK - and when he tried to run it on Windows... it worked! No modifications required.

I think Python's packaging system has never been more effective... provided you know how to use it. The learning curve is still too high, which I think accounts for the bulk of complaints about it today.

Link 2024-01-23 Prompt Lookup Decoding:

Really neat LLM optimization trick by Apoorv Saxena, who observed that it's common for sequences of tokens in LLM input to be reflected by the output - snippets included in a summarization, for example.

Apoorv's code performs a simple search for such prefixes and uses them to populate a set of suggested candidate IDs during LLM token generation.

The result appears to provide around a 2.4x speed-up in generating outputs!
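Here is a simplified sketch of that lookup step, written against plain Python lists and not the tensors the real code uses. The function name and default values are assumptions for illustration. The idea is to take the most recent few tokens, find an earlier place where the same run appeared, and propose whatever followed it as candidates for the model to verify.

```python
def find_candidate_tokens(token_ids, max_ngram_size=3, num_candidates=10):
    """Suggest likely next tokens by finding an earlier repeat of the latest n-gram."""
    # Prefer longer matches, since they are more likely to continue the same way
    for ngram_size in range(max_ngram_size, 0, -1):
        if len(token_ids) <= ngram_size:
            continue
        tail = token_ids[-ngram_size:]
        # Scan earlier positions only, so the tail never matches itself
        for start in range(len(token_ids) - ngram_size):
            if token_ids[start:start + ngram_size] == tail:
                end = start + ngram_size
                follow = token_ids[end:end + num_candidates]
                if follow:
                    return follow
    return []


tokens = [5, 6, 7, 8, 9, 1, 2, 5, 6, 7]
print(find_candidate_tokens(tokens, num_candidates=3))  # [8, 9, 1]
```

If no earlier match exists the function returns an empty list and generation would fall back to producing one token at a time.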

Link 2024-01-23 The Open Source Sustainability Crisis:

Chad Whitacre: "What is Open Source sustainability? Why do I say it is in crisis? My answers are that sustainability is when people are getting paid without jumping through hoops, and we’re in a crisis because people aren’t and they’re burning out."

I really like Chad's focus on "jumping through hoops" in this piece. It's possible to build a financially sustainable project today, but it requires picking one or more activities that aren't directly aligned with working on the core project: raising VC and starting a company, building a hosted SaaS platform and becoming a sysadmin, publishing books and courses and becoming a content author.

The dream is that open source maintainers can invest all of their effort in their projects and make a good living from that work.

Quote 2024-01-24

Find a level of abstraction that works for what you need to do. When you have trouble there, look beneath that abstraction. You won’t be seeing how things really work, you’ll be seeing a lower-level abstraction that could be helpful. Sometimes what you need will be an abstraction one level up. Is your Python loop too slow? Perhaps you need a C loop. Or perhaps you need numpy array operations.

You (probably) don’t need to learn C.

Link 2024-01-24 Google Research: Lumiere:

The latest in text-to-video from Google Research, described as "a text-to-video diffusion model designed for synthesizing videos that portray realistic, diverse and coherent motion".

Most existing text-to-video models generate keyframes and then use other models to fill in the gaps, which frequently leads to a lack of coherency. Lumiere "generates the full temporal duration of the video at once", which avoids this problem.

Disappointingly but unsurprisingly the paper doesn't go into much detail on the training data, beyond stating "We train our T2V model on a dataset containing 30M videos along with their text caption. The videos are 80 frames long at 16 fps (5 seconds)".

The examples of "stylized generation" which combine a text prompt with a single reference image for style are particularly impressive.

Link 2024-01-24 Django Chat: Datasette, LLMs, and Django:

I'm the guest on the latest episode of the Django Chat podcast. We talked about Datasette, LLMs, the New York Times OpenAI lawsuit, the Python Software Foundation and all sorts of other topics.

Link 2024-01-25 Fairly Trained launches certification for generative AI models that respect creators’ rights:

I've been using the term "vegan models" for a while to describe machine learning models that have been trained in a way that avoids using unlicensed, copyrighted data. Fairly Trained is a new non-profit initiative that aims to encourage such models through a "certification" stamp of approval.

The team is led by Ed Newton-Rex, who was previously VP of Audio at Stability AI before leaving over ethical concerns with the way models were being trained.

Link 2024-01-25 Inside .git:

This single diagram filled in all sorts of gaps in my mental model of how git actually works under the hood.

Link 2024-01-25 iOS 17.4 Introduces Alternative App Marketplaces With No Commission in EU:

The most exciting detail tucked away in this story about new EU policies from iOS 17.4 onwards: "Apple is giving app developers in the EU access to NFC and allowing for alternative browser engines, so WebKit will not be required for third-party browser apps."

Finally, browser engine competition on iOS! I really hope this results in a future worldwide policy allowing such engines.

Link 2024-01-25 Portable EPUBs:

Will Crichton digs into the reasons people still prefer PDF over HTML as a format for sharing digital documents, concluding that the key issues are that HTML documents are not fully self-contained and may not be rendered consistently.

He proposes "Portable EPUBs" as the solution, defining a subset of the existing EPUB standard with some additional restrictions around avoiding loading extra assets over a network, sticking to a smaller (as-yet undefined) subset of HTML and encouraging interactive components to be built using self-contained Web Components.

Will also built his own lightweight EPUB reading system, called Bene - which is used to render this Portable EPUBs article. It provides a "download" link in the top right which produces the .epub file itself.

There's a lot to like here. I'm constantly infuriated at the number of documents out there that are PDFs but really should be web pages (academic papers are a particularly bad example here), so I'm very excited by any initiatives that might help push things in the other direction.

Link 2024-01-26 Exploring codespaces as temporary dev containers:

DJ Adams shows how to use GitHub Codespaces without interacting with their web UI at all: you can run "gh codespace create --repo ..." to create a new instance, then SSH directly into it using "gh codespace ssh --codespace codespacename".

This turns Codespaces into an extremely convenient way to spin up a scratch on-demand Linux container where you pay for just the time that the machine spends running.

TIL 2024-01-26 Logging OpenAI API requests and responses using HTTPX:

My LLM tool has a feature where you can set a LLM_OPENAI_SHOW_RESPONSES environment variable to see full debug level details of any HTTP requests it makes to the OpenAI APIs. …
