GPT-4o, a new version of LLM and more thoughts on slop

Notes on GPT-4o, OpenAI's latest model release

In this newsletter:

Notes on OpenAI’s new GPT-4o model

Slop is the new name for unwanted AI-generated content

Plus 15 links and 2 quotations and 1 TIL

GPT-4o

Link 2024-05-13 Hello GPT-4o:

OpenAI announced a new model today: GPT-4o, where the o stands for "omni".

It looks like this is the gpt2-chatbot we've been seeing in the Chat Arena the past few weeks.

GPT-4o doesn't seem to be a huge leap ahead of GPT-4 in terms of "intelligence" - whatever that might mean - but it has a bunch of interesting new characteristics.

First, it's multi-modal across text, images and audio. The audio demos from this morning's launch were extremely impressive.

ChatGPT's previous voice mode worked by passing audio through a speech-to-text model, then an LLM, then a text-to-speech for the output. GPT-4o does everything with the one model, reducing latency to the point where it can act as a live interpreter between people speaking in two different languages. It also has the ability to interpret tone of voice, and has much more control over the voice and intonation it uses in response.

It's very science fiction, and has hints of uncanny valley. I can't wait to try it out - it should be rolling out to the various OpenAI apps "in the coming weeks".

Meanwhile the new model itself is already available for text and image inputs via the API and in the Playground interface, as model ID "gpt-4o" or "gpt-4o-2024-05-13". My first impressions are that it feels notably faster than gpt-4-turbo.
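As a rough illustration of what using that model ID looks like, here is a minimal sketch that builds (but does not send) a request to OpenAI's Chat Completions endpoint using only the Python standard library. The `build_chat_request` helper and the placeholder API key are assumptions made for this example, not anything from the announcement.

```python
import json
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(prompt, api_key, model="gpt-4o"):
    """Build (but do not send) a Chat Completions request for the given model."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# "sk-placeholder" is a stand-in; a real key is needed before sending.
request = build_chat_request("fascinate me", api_key="sk-placeholder")
```

Passing the result to `urllib.request.urlopen()` with a real key would send it. Swapping in "gpt-4o-2024-05-13" pins the dated version of the model.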

This announcement post also includes examples of image output from the new model. It looks like they may have taken big steps forward in two key areas of image generation: output of text (the "Poetic typography" examples) and maintaining consistent characters across multiple prompts (the "Character design - Geary the robot" example).

The size of the vocabulary of the tokenizer - effectively the number of unique integers used to represent text - has increased to ~200,000 from ~100,000 for GPT-4 and GPT-3.5. Inputs in Gujarati use 4.4x fewer tokens, Japanese uses 1.4x fewer, Spanish uses 1.1x fewer. Previously languages other than English paid a material penalty in terms of how much text could fit into a prompt. It's good to see that effect being reduced.

Also notable: the price. OpenAI claim a 50% price reduction compared to GPT-4 Turbo. Conveniently, gpt-4o costs exactly 10x gpt-3.5: 4o is $5/million input tokens and $15/million output tokens. 3.5 is $0.50/million input tokens and $1.50/million output tokens.

(I was a little surprised not to see a price decrease for gpt-3.5 as well, to better compete with the less expensive Claude 3 Haiku.)
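The 10x relationship is easy to check with a little arithmetic. This sketch uses the per-million-token prices quoted above. The `prompt_cost` helper and the example workload are made up for illustration.

```python
# Prices in US dollars per million tokens, as quoted above.
PRICES = {
    "gpt-4o": {"input": 5.00, "output": 15.00},
    "gpt-3.5": {"input": 0.50, "output": 1.50},
}


def prompt_cost(model, input_tokens, output_tokens):
    """Return the cost in dollars of one call with the given token counts."""
    price = PRICES[model]
    return (
        input_tokens * price["input"] + output_tokens * price["output"]
    ) / 1_000_000


# Hypothetical workload: 10,000 input tokens and 1,000 output tokens.
cost_4o = prompt_cost("gpt-4o", 10_000, 1_000)
cost_35 = prompt_cost("gpt-3.5", 10_000, 1_000)
```

For that workload gpt-4o comes to 6.5 cents and gpt-3.5 to 0.65 cents.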

The price drop is particularly notable because OpenAI are promising to make this model available to free ChatGPT users as well - the first time they've directly made their "best" model available to non-paying customers.

Tucked away right at the end of the post:

We plan to launch support for GPT-4o's new audio and video capabilities to a small group of trusted partners in the API in the coming weeks.

I'm looking forward to learning more about these video capabilities, which were hinted at by some of the live demos in this morning's presentation.

Link 2024-05-13 LLM 0.14, with support for GPT-4o:

It's been a while since the last LLM release. This one adds support for OpenAI's new model:

llm -m gpt-4o "fascinate me"

Also a new llm logs -r (or --response) option for getting back just the response from your last prompt, without wrapping it in Markdown that includes the prompt.

Plus nine new plugins since 0.13!

Slop is the new name for unwanted AI-generated content - 2024-05-08

I saw this tweet yesterday from @deepfates, and I am very on board with this:

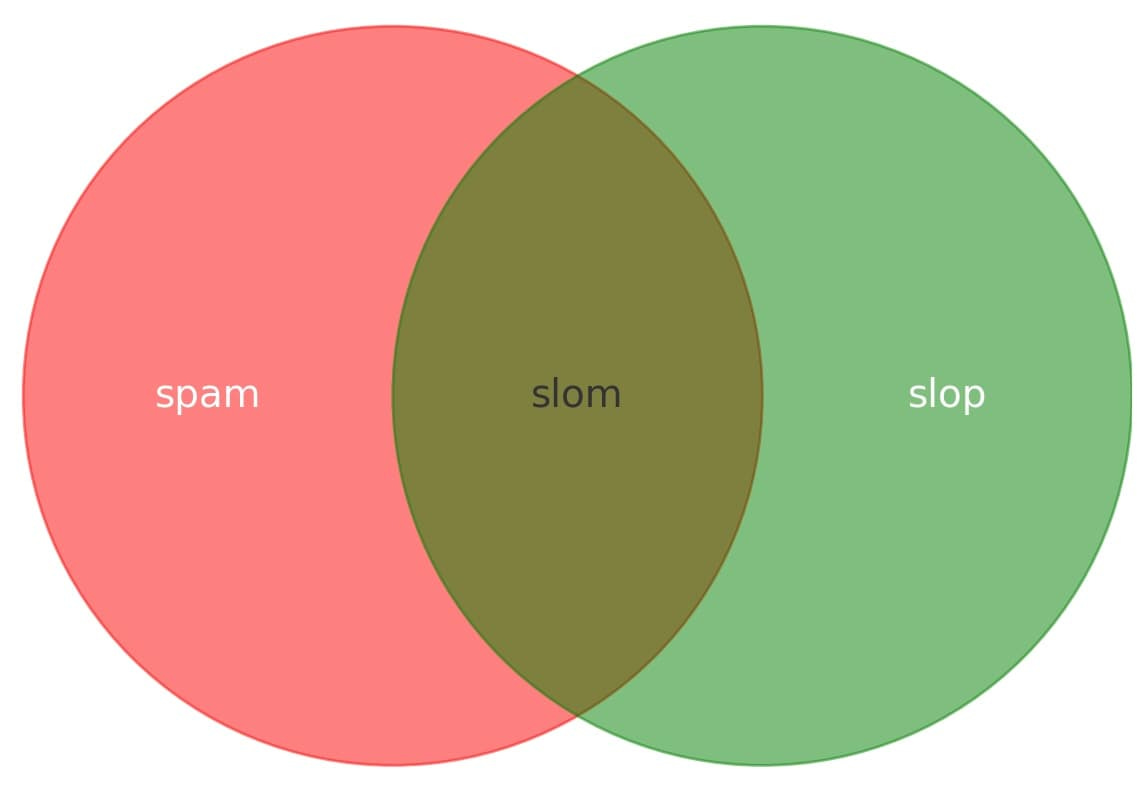

Watching in real time as "slop" becomes a term of art. the way that "spam" became the term for unwanted emails, "slop" is going in the dictionary as the term for unwanted AI generated content

I'm a big proponent of LLMs as tools for personal productivity, and as software platforms for building interesting applications that can interact with human language.

But I'm increasingly of the opinion that sharing unreviewed content that has been artificially generated with other people is rude.

Slop is the ideal name for this anti-pattern.

Not all promotional content is spam, and not all AI-generated content is slop. But if it's mindlessly generated and thrust upon someone who didn't ask for it, slop is the perfect term for it.

Remember that time Microsoft listed the Ottawa Food Bank on an AI-generated "Here's what you shouldn't miss!" travel guide? Perfect example of slop.

One of the things I love about this is that it's helpful for defining my own position on AI ethics. I'm happy to use LLMs for all sorts of purposes, but I'm not going to use them to produce slop. I attach my name and stake my credibility on the things that I publish.

Personal AI ethics remains a complicated set of decisions. I think "don't publish slop" is a useful baseline.

Update 9th May: Joseph Thacker asked what a good name would be for the equivalent subset of spam - spam that was generated with AI tools.

I propose "slom".

Link 2024-05-08 Tagged Pointer Strings (2015):

Mike Ash digs into a fascinating implementation detail of macOS.

Tagged pointers provide a way to embed a literal value in a pointer reference. Objective-C pointers on macOS are 64 bit, providing plenty of space for representing entire values. If the least significant bit is 1 (the pointer is a 64 bit odd number) then the pointer is "tagged" and represents a value, not a memory reference.

Here's where things get really clever. Storing an integer value up to 60 bits is easy. But what about strings?

There's enough space for three UTF-16 characters, with 12 bits left over. But if the string fits ASCII we can store 7 characters.

Drop everything except a-z A-Z.0-9 and we need 6 bits per character, allowing 10 characters to fit in the pointer.

Apple take this a step further: if the string contains just eilotrm.apdnsIc ufkMShjTRxgC4013 ("b" is apparently uncommon enough to be ignored here) they can store 11 characters in those 60 bits!
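To make the 5-bit trick concrete, here is a small sketch that packs a short string into a single integer and back again. The bit layout (a tag bit, then a 4-bit length, then the characters) is a simplification assumed for this example, not Apple's actual layout, and a Python integer stands in for the 64-bit pointer.

```python
# The 32-character alphabet quoted above: 32 symbols fit in 5 bits each.
FIVE_BIT_ALPHABET = "eilotrm.apdnsIc ufkMShjTRxgC4013"
MAX_CHARS = 11  # 11 characters * 5 bits = 55 bits of payload


def pack(text):
    """Pack a short string into one tagged integer, or return None."""
    if len(text) > MAX_CHARS or any(c not in FIVE_BIT_ALPHABET for c in text):
        return None  # a real runtime would fall back to a heap-allocated string
    payload = 0
    for char in text:
        payload = (payload << 5) | FIVE_BIT_ALPHABET.index(char)
    # Hypothetical layout: payload, then 4-bit length, then the tag bit (1).
    return (payload << 5) | (len(text) << 1) | 1


def unpack(pointer):
    """Reverse pack(): recover the string from a tagged integer."""
    assert pointer & 1, "not a tagged pointer"
    length = (pointer >> 1) & 0b1111
    payload = pointer >> 5
    chars = []
    for _ in range(length):
        # Characters come out last-first, so reverse them at the end.
        chars.append(FIVE_BIT_ALPHABET[payload & 0b11111])
        payload >>= 5
    return "".join(reversed(chars))
```

With this layout `unpack(pack("datasette"))` round-trips, while `pack("bob")` returns `None` because "b" is not in the alphabet.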

Link 2024-05-08 Modern SQLite: Generated columns:

The second in Anton Zhiyanov's series on SQLite features you might have missed.

It turns out I had an incorrect mental model of generated columns. In SQLite these can be "virtual" or "stored" (written to disk along with the rest of the table, a bit like a materialized view). Anton noted that "stored are rarely used in practice", which surprised me because I thought that storing them was necessary for them to participate in indexes.

It turns out that's not the case. Anton's example here shows a generated column providing indexed access to a value stored inside a JSON key:

create table events (
  id integer primary key,
  event blob,
  etime text as (event ->> 'time'),
  etype text as (event ->> 'type')
);

create index events_time on events(etime);

insert into events(event) values (
  '{"time": "2024-05-01", "type": "credit"}'
);

Update: snej reminded me that this isn't a new capability either: SQLite has been able to create indexes on expressions for years.
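Here is a quick way to check that an index on a virtual generated column really does get used, sketched with Python's built-in sqlite3 module. It assumes the bundled SQLite is 3.31 or newer (for generated columns) with the JSON functions available, and it uses json_extract() in place of ->> so it also works on versions that predate that operator.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript(
    """
    create table events (
      id integer primary key,
      event blob,
      -- json_extract() stands in for ->> so older SQLite builds work too
      etime text as (json_extract(event, '$.time')),
      etype text as (json_extract(event, '$.type'))
    );
    create index events_time on events(etime);
    insert into events(event) values
      ('{"time": "2024-05-01", "type": "credit"}');
    """
)

rows = db.execute(
    "select etime, etype from events where etime = ?", ("2024-05-01",)
).fetchall()

# Each EXPLAIN QUERY PLAN row ends with a human-readable detail string.
plan = [
    row[-1]
    for row in db.execute(
        "explain query plan select etype from events where etime = ?",
        ("2024-05-01",),
    )
]
```

If the index is being used, one of the strings in `plan` should mention `events_time`.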

Link 2024-05-08 OpenAI Model Spec, May 2024 edition:

New from OpenAI, a detailed specification describing how they want their models to behave in both ChatGPT and the OpenAI API.

"It includes a set of core objectives, as well as guidance on how to deal with conflicting objectives or instructions."

The document acts as guidelines for the reinforcement learning from human feedback (RLHF) process, and in the future may be used directly to help train models.

It includes some principles that clearly relate to prompt injection: "In some cases, the user and developer will provide conflicting instructions; in such cases, the developer message should take precedence".

Quote 2024-05-08

It should be noted that no ethically-trained software engineer would ever consent to write a DestroyBaghdad procedure. Basic professional ethics would instead require him to write a DestroyCity procedure, to which Baghdad could be given as a parameter.

Link 2024-05-09 datasette-pins — a new Datasette plugin for pinning tables and queries:

Alex Garcia built this plugin for Datasette Cloud, and as with almost every Datasette Cloud feature we're releasing it as an open source package as well.

datasette-pins allows users with the right permission to "pin" tables, databases and queries to their homepage. It's a lightweight way to customize that homepage, especially useful as your Datasette instance grows to host dozens or even hundreds of tables.

Link 2024-05-09 experimental-phi3-webgpu:

Run Microsoft's excellent Phi-3 model directly in your browser. It uses WebGPU, so it didn't work in Firefox for me, just in Chrome.

It fetches around 2.1GB of data into the browser cache on first run, but then gave me decent quality responses to my prompts running at an impressive 21 tokens a second (M2, 64GB).

I think Phi-3 is the highest quality model of this size, so it's a really good fit for running in a browser like this.

Link 2024-05-09 Bullying in Open Source Software Is a Massive Security Vulnerability:

The Xz story from last month, where a malicious contributor almost managed to ship a backdoor to a number of major Linux distributions, included a nasty detail where presumed collaborators with the attacker bullied the maintainer to make them more susceptible to accepting help.

Hans-Christoph Steiner from F-Droid reported a similar attempt from a few years ago:

A new contributor submitted a merge request to improve the search, which was oft requested but the maintainers hadn't found time to work on. There was also pressure from other random accounts to merge it. In the end, it became clear that it added a SQL injection vulnerability.

404 Media's Jason Koebler ties the two together here and makes the case for bullying as a genuine form of security exploit in the open source ecosystem.

Link 2024-05-10 uv pip install --exclude-newer example:

A neat new feature of the uv pip install command is the --exclude-newer option, which can be used to avoid installing any package versions released after the specified date.

Here's a clever example of that in use from the typing_extensions package's CI tests that run against some downstream packages:

uv pip install --system -r test-requirements.txt --exclude-newer $(git show -s --date=format:'%Y-%m-%dT%H:%M:%SZ' --format=%cd HEAD)

They use git show to get the date of the most recent commit (%cd means commit date) formatted as an ISO timestamp, then pass that to --exclude-newer.
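Conceptually, a date cutoff like this means "pick the newest version that already existed at that moment". The sketch below illustrates that idea with a made-up release history. It is not how uv's resolver is implemented, and treating the cutoff as inclusive is an assumption.

```python
from datetime import datetime, timezone

# Hypothetical release history for one package: (version, upload time).
RELEASES = [
    ((1, 0, 0), datetime(2024, 1, 10, tzinfo=timezone.utc)),
    ((1, 1, 0), datetime(2024, 3, 2, tzinfo=timezone.utc)),
    ((2, 0, 0), datetime(2024, 5, 9, tzinfo=timezone.utc)),
]


def newest_allowed(releases, cutoff):
    """Return the highest version uploaded at or before the cutoff, if any."""
    allowed = [version for version, uploaded in releases if uploaded <= cutoff]
    return max(allowed, default=None)


# Same timestamp format that the git show command above produces.
cutoff = datetime.strptime(
    "2024-04-01T12:00:00Z", "%Y-%m-%dT%H:%M:%SZ"
).replace(tzinfo=timezone.utc)
chosen = newest_allowed(RELEASES, cutoff)
```

With an April 2024 cutoff the hypothetical 2.0.0 release from May is ignored and 1.1.0 is chosen.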

Link 2024-05-10 Exploring Hacker News by mapping and analyzing 40 million posts and comments for fun:

A real tour de force of data engineering. Wilson Lin fetched 40 million posts and comments from the Hacker News API (using Node.js with a custom multi-process worker pool) and then ran them all through the BGE-M3 embedding model using RunPod, which let him fire up ~150 GPU instances to get the whole run done in a few hours, using a custom RocksDB and Rust queue he built to save on Amazon SQS costs.

Then he crawled 4 million linked pages, embedded that content using the faster and cheaper jina-embeddings-v2-small-en model, ran UMAP dimensionality reduction to render a 2D map and did a whole lot of follow-on work to identify topic areas and make the map look good.

That's not even half the project - Wilson built several interactive features on top of the resulting data, and experimented with custom rendering techniques on top of canvas to get everything to render quickly.

There's so much in here, and both the code and data (multiple GBs of arrow files) are available if you want to dig in and try some of this out for yourself.

In the Hacker News comments Wilson shares that the total cost of the project was a couple of hundred dollars.

One tiny detail I particularly enjoyed - unrelated to the embeddings - was this trick for testing which edge location is closest to a user using JavaScript:

const edge = await Promise.race(
  EDGES.map(async (edge) => {
    // Run a few times to avoid potential cold start biases.
    for (let i = 0; i < 3; i++) {
      await fetch(`https://${edge}.edge-hndr.wilsonl.in/healthz`);
    }
    return edge;
  }),
);
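The same "first one to finish wins" pattern can be sketched in Python with asyncio. This version simulates the network with asyncio.sleep() so it runs anywhere. The edge names and latencies are invented, and unlike Promise.race it also cancels the slower probes once a winner is known.

```python
import asyncio

# Hypothetical per-request latencies in seconds, standing in for real fetches.
SIMULATED_LATENCY = {"ams": 0.03, "sfo": 0.01, "syd": 0.05}


async def probe(edge):
    """Hit one edge a few times so a single cold start does not decide it."""
    for _ in range(3):
        await asyncio.sleep(SIMULATED_LATENCY[edge])  # stand-in for an HTTP GET
    return edge


async def closest_edge(edges):
    """Return whichever edge finishes its probes first, cancelling the rest."""
    tasks = [asyncio.create_task(probe(edge)) for edge in edges]
    done, pending = await asyncio.wait(tasks, return_when=asyncio.FIRST_COMPLETED)
    for task in pending:
        task.cancel()
    return done.pop().result()


winner = asyncio.run(closest_edge(SIMULATED_LATENCY))
```

Here `winner` is "sfo", the edge with the lowest simulated latency.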

Link 2024-05-11 Ham radio general exam question pool as JSON:

I scraped a pass on my Ham radio general exam this morning. One of the tools I used to help me pass was a Datasette instance with all 429 questions from the official question pool. I've published that raw data as JSON on GitHub, which I converted from the official question pool document using an Observable notebook.

Relevant TIL: How I studied for my Ham radio general exam.

TIL 2024-05-11 How I studied for my Ham radio general exam:

I scraped a pass on my Ham radio general exam today, on the second attempt (you can retake on the same day for an extra $15, thankfully). …

Link 2024-05-12 “Link In Bio” is a slow knife:

Anil Dash writing in 2019 about how Instagram's "link in bio" thing (where users cannot post links to things in Instagram posts or comments, just a single link field in their bio) is harmful for linking on the web.

Today it's even worse. TikTok has the same culture, and LinkedIn and Twitter both algorithmically de-boost anything with a URL in it, encouraging users to share screenshots (often unsourced) rather than link to content and see their distribution reduced.

It's gross.

Link 2024-05-12 About ARDC (Amateur Radio Digital Communications):

In ham radio adjacent news, here's a foundation that's worth knowing about:

ARDC makes grants to projects and organizations that are experimenting with new ways to advance both amateur radio and digital communication science.

In 1981 they were issued the entire 44.x.x.x block of IP addresses - 16 million in total. In 2019 they sold a quarter of those IPs to Amazon for about $100 million, providing them with a very healthy endowment from which they can run their grants program!
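Those numbers are easy to sanity-check with Python's ipaddress module. This is a back-of-the-envelope sketch that treats the "about $100 million" figure above as exact, so the per-address price is only approximate.

```python
import ipaddress

block = ipaddress.ip_network("44.0.0.0/8")
total_addresses = block.num_addresses  # 2 ** 24 addresses in a /8
quarter = total_addresses // 4         # a quarter of a /8 is the size of a /10

# Approximate sale price from the paragraph above, in dollars.
SALE_PRICE = 100_000_000
price_per_address = SALE_PRICE / quarter
```

That gives 16,777,216 addresses in the full block, 4,194,304 in the quarter that was sold, and a price of roughly $23.84 per address.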

Link 2024-05-12 Parsing PNG images in Mojo:

It's still very early days for Mojo, the new systems programming language from Chris Lattner that imitates large portions of Python and can execute Python code directly via a compatibility layer.

Ferdinand Schenck reports here on building a PNG decoding routine in Mojo, with a detailed dive into both the PNG spec and the current state of the Mojo language.

Link 2024-05-13 GPUs Go Brrr:

Fascinating, detailed low-level notes on how to get the most out of NVIDIA's H100 GPUs (currently selling for around $40,000 apiece) from the research team at Stanford who created FlashAttention, among other things.

The swizzled memory layouts are flat-out incorrectly documented, which took considerable time for us to figure out.

Quote 2024-05-13

I’m no developer, but I got the AI part working in about an hour.

What took longer was the other stuff: identifying the problem, designing and building the UI, setting up the templating, routes and data architecture.

It reminded me that, in order to capitalise on the potential of AI technologies, we need to really invest in the other stuff too, especially data infrastructure.

It would be ironic, and a huge shame, if AI hype sucked all the investment out of those things.
