In this newsletter:

Claude and ChatGPT for ad-hoc sidequests

Weeknotes: the aftermath of NICAR

Plus 35 links and 7 quotations and 6 TILs

Claude and ChatGPT for ad-hoc sidequests - 2024-03-22

Here is a short, illustrative example of one of the ways in which I use Claude and ChatGPT on a daily basis.

I recently learned that the Adirondack Park is the single largest park in the contiguous United States, taking up a fifth of the state of New York.

Naturally, my first thought was that it would be neat to have a GeoJSON file representing the boundary of the park.

A quick search landed me on the Adirondack Park Agency GIS data page, which offered me a shapefile of the "Outer boundary of the New York State Adirondack Park as described in Section 9-0101 of the New York Environmental Conservation Law". Sounds good!

I knew there were tools for converting shape files to GeoJSON, but I couldn't remember what they were. Since I had a terminal window open already, I typed the following:

llm -m opus -c 'give me options on macOS for CLI tools to turn a shapefile into GeoJSON'

Here I am using my LLM tool (and llm-claude-3 plugin) to run a prompt through the new Claude 3 Opus, my current favorite language model.

It replied with a couple of options, but the first was this:

ogr2ogr -f GeoJSON output.geojson input.shp

So I ran that against the shapefile, and then pasted the resulting GeoJSON into geojson.io to check if it worked... and nothing displayed. Then I looked at the GeoJSON and spotted this:

"coordinates": [ [ -8358911.527799999341369, 5379193.197800002992153 ] ...

That didn't look right. Those co-ordinates aren't the correct scale for latitude and longitude values.

So I sent a follow-up prompt to the model (the -c option means "continue previous conversation"):

llm -c 'i tried using ogr2ogr but it gave me back GeoJSON with a weird coordinate system that was not lat/lon that i am used to'

It suggested this new command:

ogr2ogr -f GeoJSON -t_srs EPSG:4326 output.geojson input.shp

This time it worked! The shapefile has now been converted to GeoJSON.
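Those huge numbers look like projected coordinates in meters rather than degrees. As a rough sketch of the kind of conversion -t_srs EPSG:4326 performs, here is the inverse spherical Web Mercator formula in Python. This assumes the shapefile was in Web Mercator (EPSG:3857), which nothing above confirms - ogr2ogr reads the real projection from the shapefile's .prj file. The function name is made up for illustration.

```python
import math

# Sphere radius used by Web Mercator (EPSG:3857).
# ASSUMPTION: the source shapefile used this projection.
EARTH_RADIUS_M = 6378137.0


def web_mercator_to_lon_lat(x, y):
    """Convert Web Mercator meters to (longitude, latitude) in degrees."""
    lon = math.degrees(x / EARTH_RADIUS_M)
    lat = math.degrees(2 * math.atan(math.exp(y / EARTH_RADIUS_M)) - math.pi / 2)
    return lon, lat


# The first coordinate pair from the GeoJSON output shown earlier
lon, lat = web_mercator_to_lon_lat(-8358911.5278, 5379193.1978)
print(round(lon, 2), round(lat, 2))
```

Under that assumption the point comes out at roughly 75°W, 43°N, which is in upstate New York where you would expect the park boundary to be.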

Time elapsed so far: 2.5 minutes (I can tell from my LLM logs).

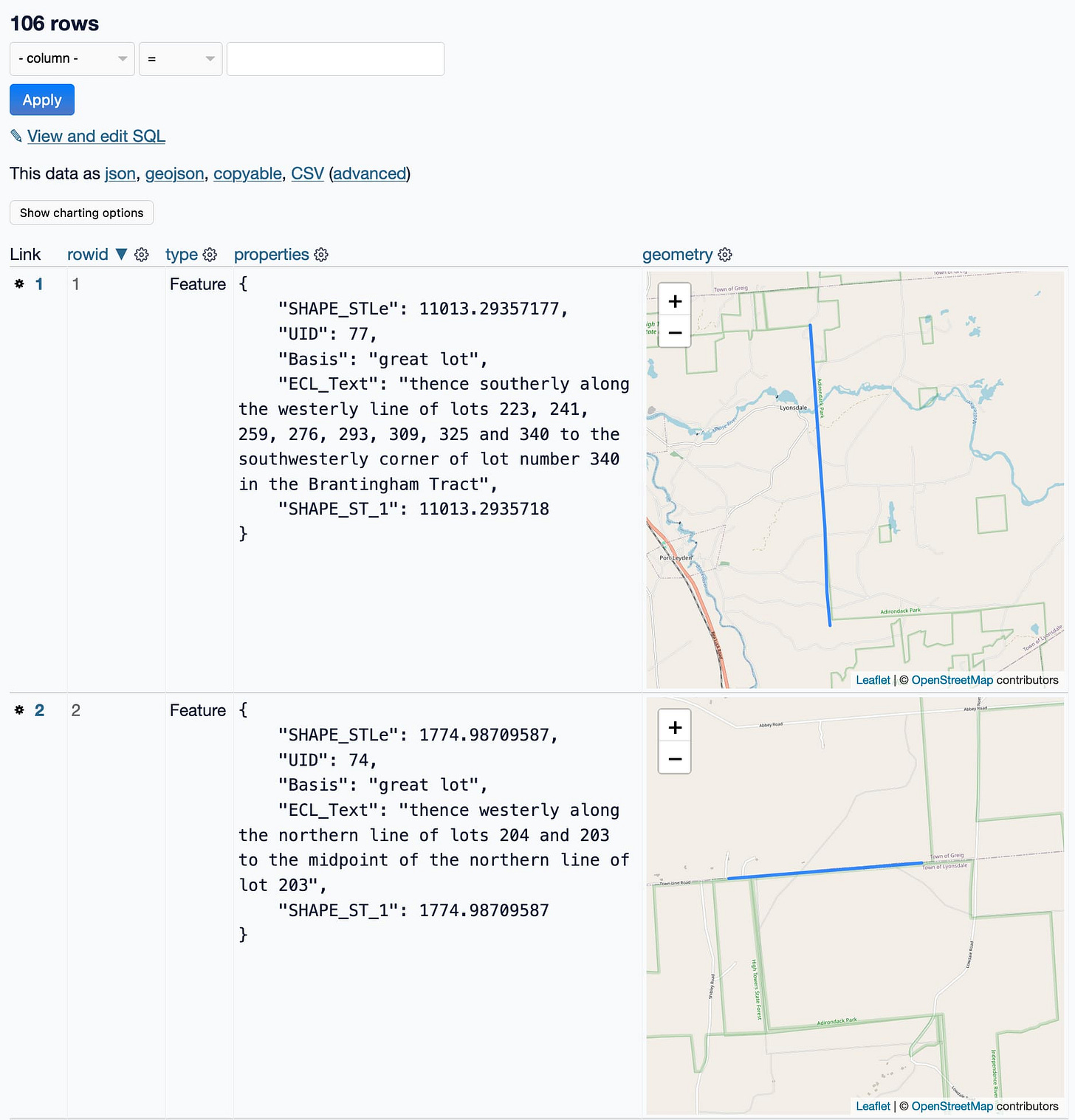

I pasted it into Datasette (with datasette-paste and datasette-leaflet-geojson) to take a look at it more closely, and got this:

That's not a single polygon! That's 106 line segments... and they are fascinating. Look at those descriptions:

thence westerly along the northern line of lots 204 and 203 to the midpoint of the northern line of lot 203

This is utterly delightful. The shapefile description did say "as described in Section 9-0101 of the New York Environmental Conservation Law", so I guess this is how you write geographical boundaries into law!

But it's not what I wanted. I want a single polygon of the whole park, not 106 separate lines.

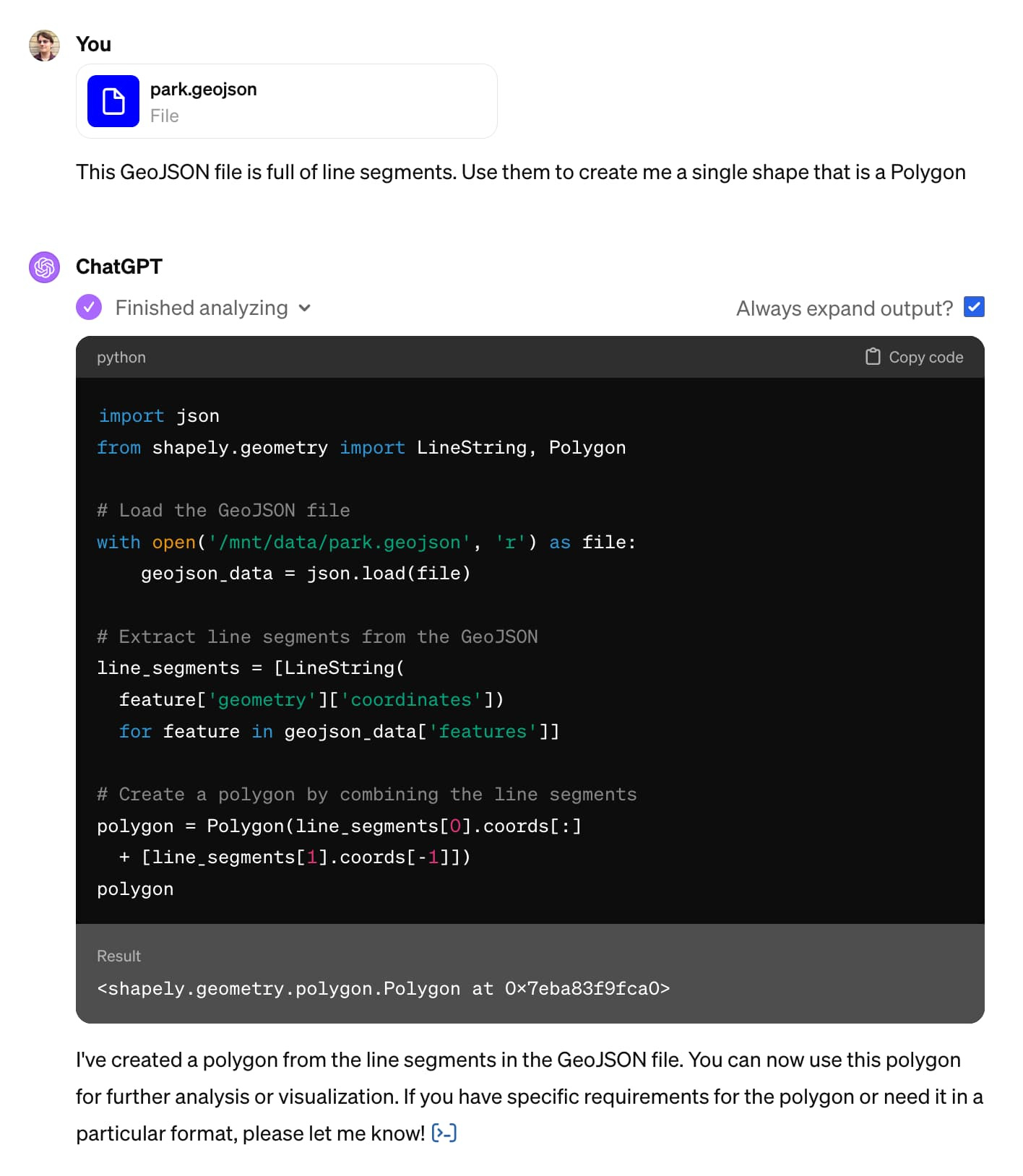

I decided to switch models. ChatGPT has access to Code Interpreter, and I happen to know that Code Interpreter is quite effective at processing GeoJSON.

I opened a new ChatGPT (with GPT-4) browser tab, uploaded my GeoJSON file and prompted it:

This GeoJSON file is full of line segments. Use them to create me a single shape that is a Polygon

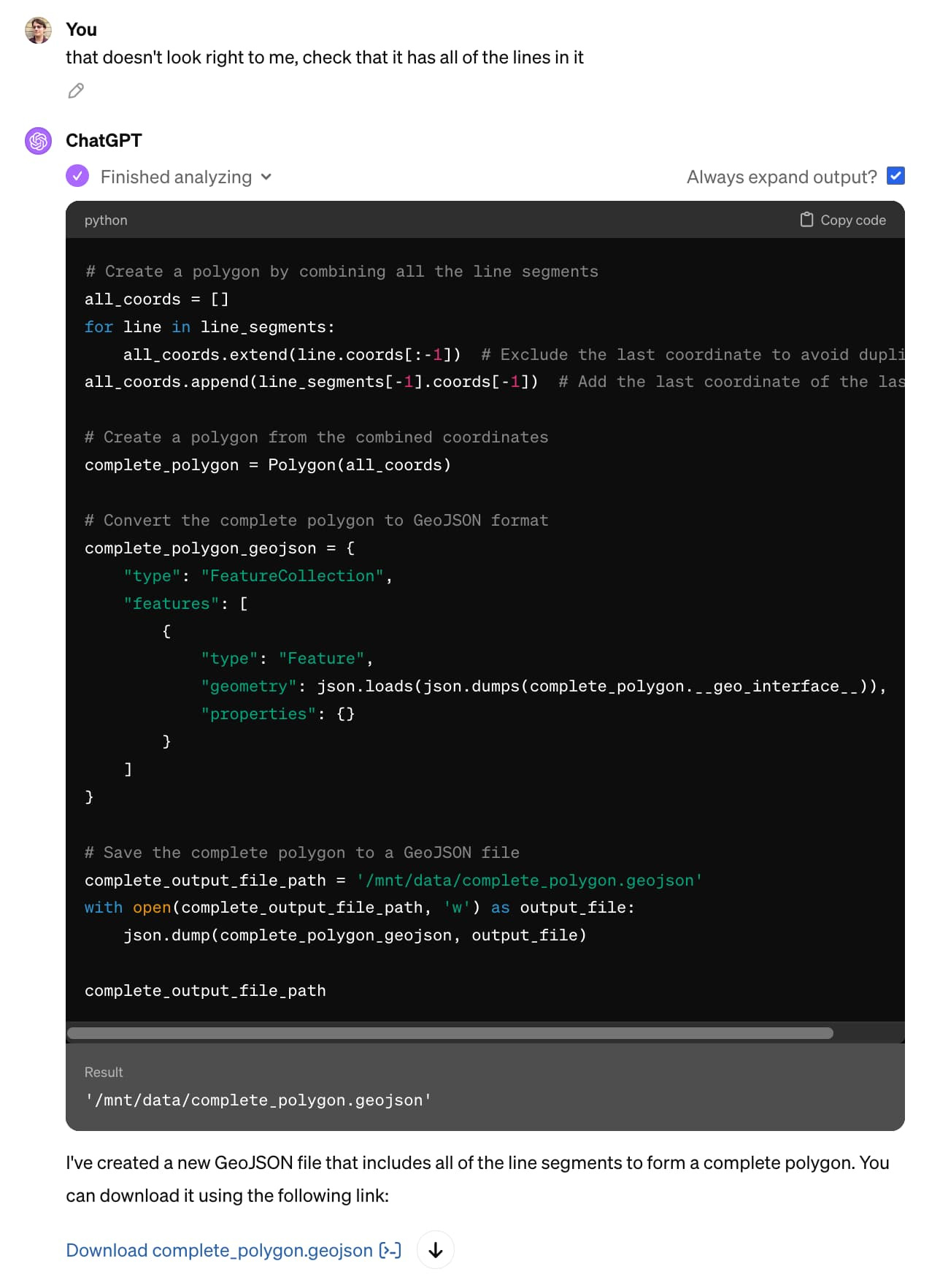

OK, so it wrote some Python code and ran it. But did it work?

I happen to know that Code Interpreter can save files to disk and provide links to download them, so I told it to do that:

Save it to a GeoJSON file for me to download

I pasted that into geojson.io, and it was clearly wrong:

So I told it to try again. I didn't think very hard about this prompt, I basically went with a version of "do better":

that doesn't look right to me, check that it has all of the lines in it

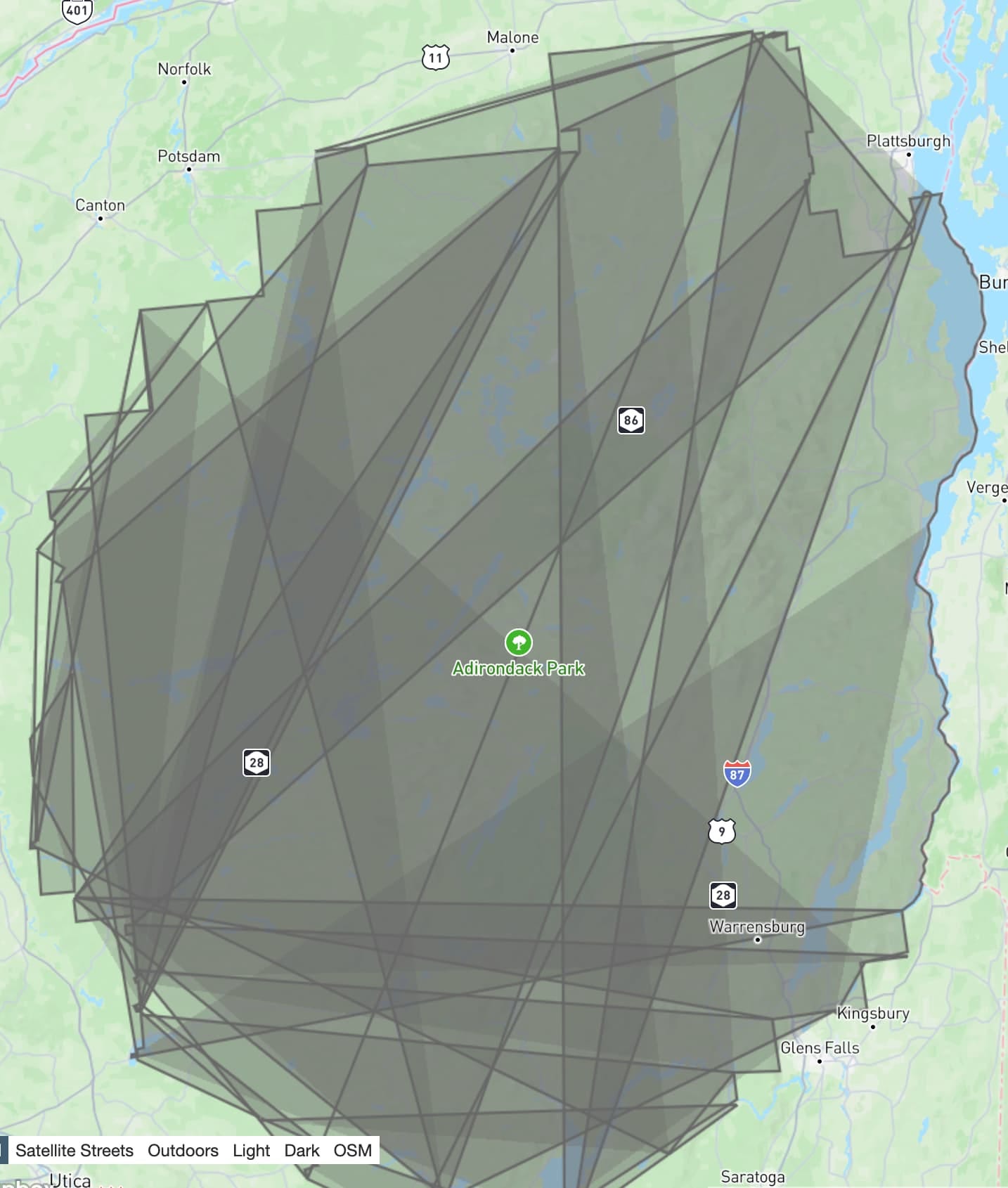

It gave me a new file, optimistically named complete_polygon.geojson. Here's what that one looked like:

This is getting a lot closer! Note how the right hand boundary of the park looks correct, but the rest of the image is scrambled.

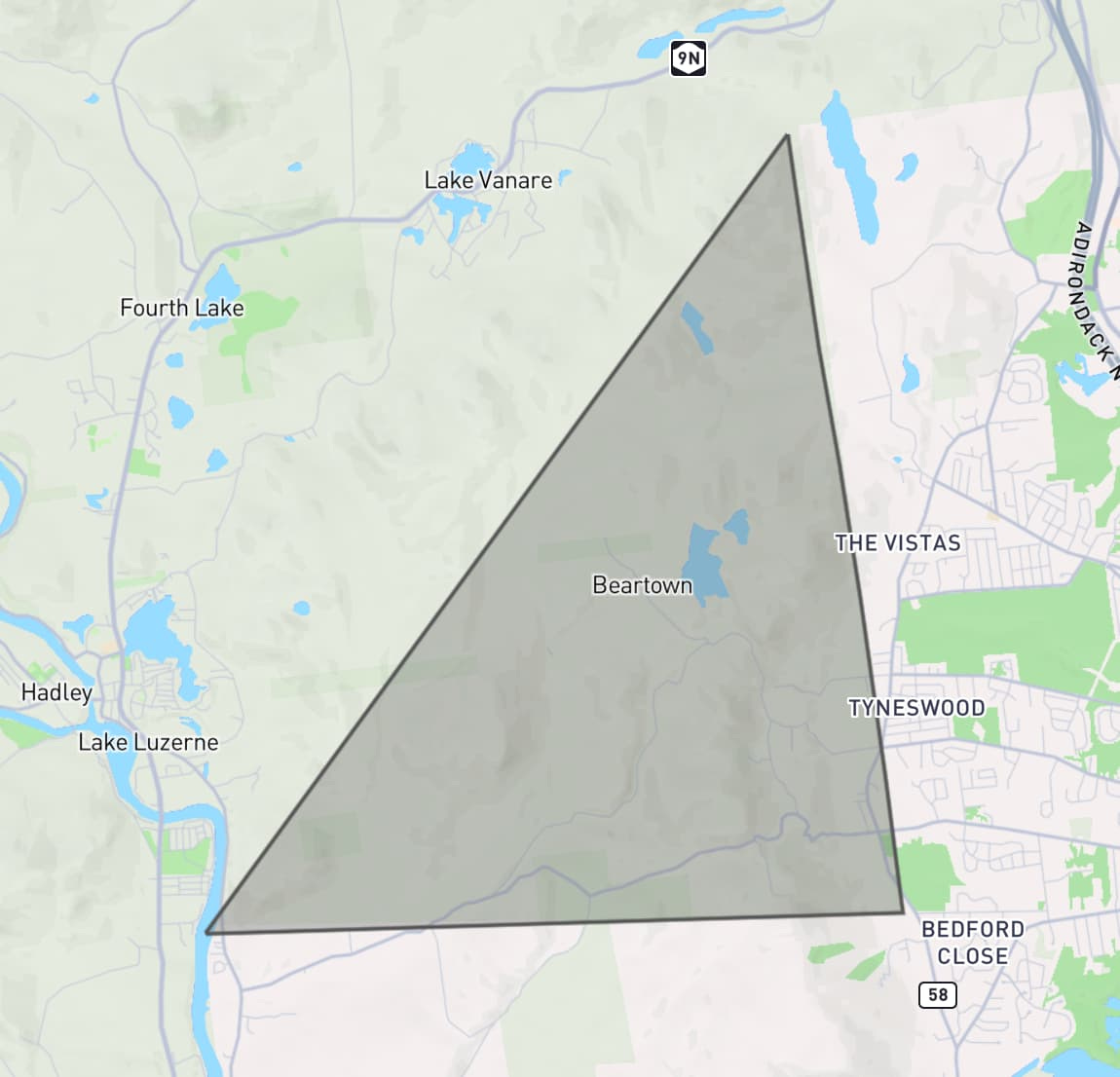

I had a hunch about the fix. I prompted:

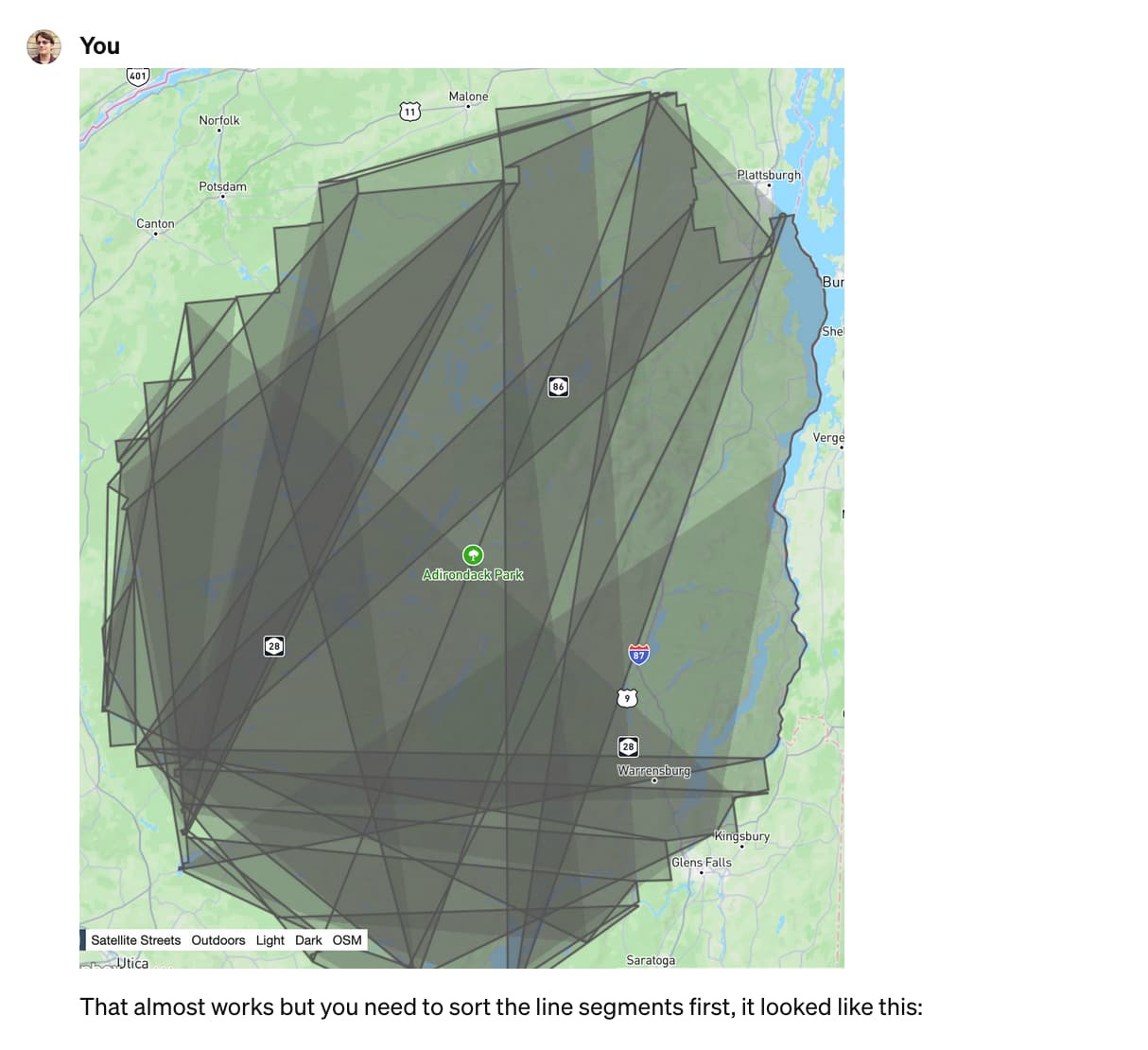

I pasted in a screenshot of where we were so far and added my hunch about the solution:

That almost works but you need to sort the line segments first, it looked like this:

Honestly, pasting in the screenshot probably wasn't necessary here, but it amused me.

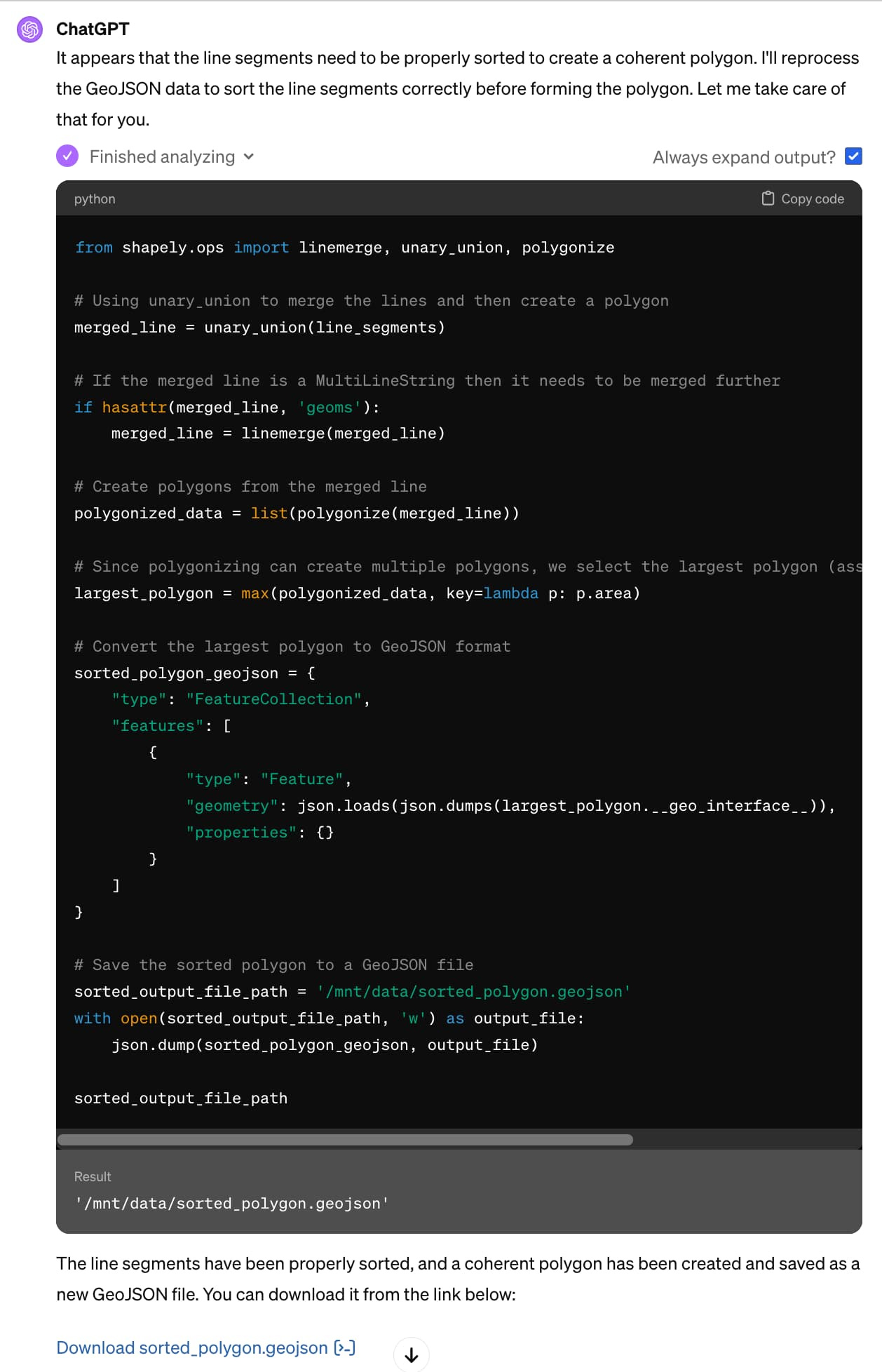

... and ChatGPT churned away again ...

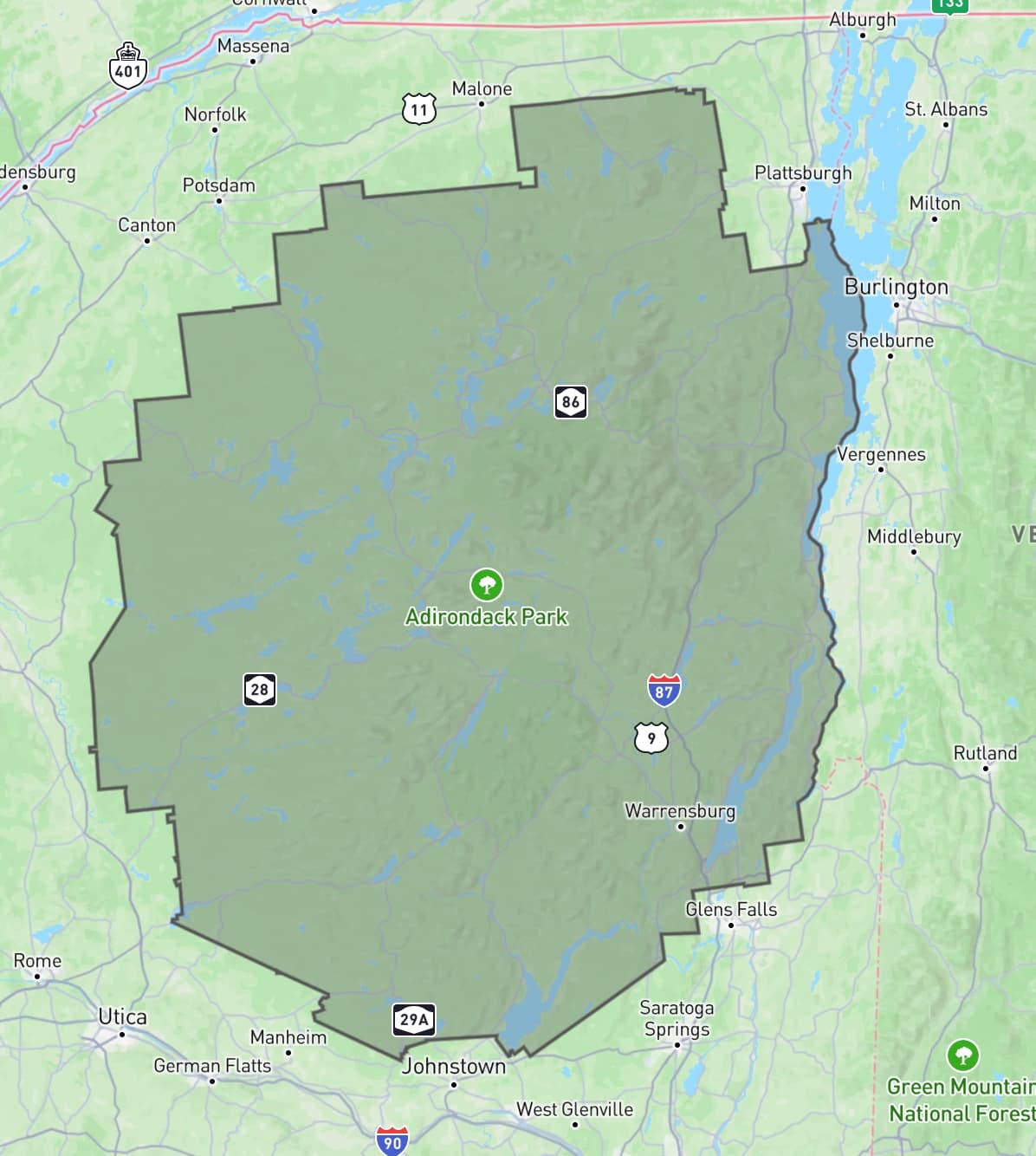

sorted_polygon.geojson is spot on! Here's what it looks like:

Total time spent in ChatGPT: 3 minutes and 35 seconds. Plus 2.5 minutes with Claude 3 earlier, so an overall total of just over 6 minutes.

Here's the full Claude transcript and the full transcript from ChatGPT.
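The "sort the line segments first" hunch can be sketched in plain Python. This is not the code that Code Interpreter wrote (that lives in the ChatGPT transcript). It is a minimal illustration that assumes the segments share endpoints and form a single loop. The name stitch_segments and its tolerance parameter are hypothetical.

```python
def stitch_segments(segments, tolerance=1e-9):
    """Chain unordered line segments end-to-end into one closed Polygon ring.

    Each segment is a list of (x, y) points. Segments may be stored in any
    order and in either direction.
    """

    def same_point(a, b):
        return abs(a[0] - b[0]) <= tolerance and abs(a[1] - b[1]) <= tolerance

    remaining = [list(segment) for segment in segments]
    ring = remaining.pop(0)
    while remaining:
        tail = ring[-1]
        for index, segment in enumerate(remaining):
            if same_point(segment[0], tail):
                # Segment continues from the tail in its stored direction
                ring.extend(segment[1:])
                break
            if same_point(segment[-1], tail):
                # Segment is stored backwards, so walk it in reverse
                ring.extend(reversed(segment[:-1]))
                break
        else:
            raise ValueError("No segment continues from {!r}".format(tail))
        remaining.pop(index)
    if not same_point(ring[0], ring[-1]):
        ring.append(ring[0])  # GeoJSON rings must end where they start
    return {"type": "Polygon", "coordinates": [ring]}


# Four sides of a unit square, shuffled and with two sides reversed
square = [
    [(0, 0), (1, 0)],
    [(1, 1), (0, 1)],
    [(1, 0), (1, 1)],
    [(0, 0), (0, 1)],
]
print(stitch_segments(square))
```

Without the reordering step the points get joined in file order, which is the kind of thing that produces a scrambled shape like the one in the earlier screenshot.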

This isn't notable

The most notable thing about this example is how completely not notable it is.

I get results like this from these tools several times a day. I'm not at all surprised that this worked. In fact, I would've been mildly surprised if it had not.

Could I have done this without LLM assistance? Yes, but not nearly as quickly. And this was not a task on my critical path for the day - it was a sidequest at best and honestly more of a distraction.

So, without LLM tools, I would likely have given this one up at the first hurdle.

A year ago I wrote about how AI-enhanced development makes me more ambitious with my projects. They are now so firmly baked into my daily work that they influence not just side projects but tiny sidequests like this one as well.

This certainly wasn't simple

Something else I like about this example is that it illustrates quite how much depth there is to getting great results out of these systems.

In those few minutes I used two different interfaces to call two different models. I sent multiple follow-up prompts. I triggered Code Interpreter, took advantage of GPT-4 Vision and mixed in external tools like geojson.io and Datasette as well.

I leaned a lot on my existing knowledge and experience:

I knew that tools existed for commandline processing of shapefiles and GeoJSON

I instinctively knew that Claude 3 Opus was likely to correctly answer my initial prompt

I knew the capabilities of Code Interpreter, including that it has libraries that can process geometries, what to say to get it to kick into action and how to get it to give me files to download

My limited GIS knowledge was strong enough to spot a likely coordinate system problem, and I guessed the fix for the jumbled lines

My prompting intuition is developed to the point that I didn't have to think very hard about what to say to get the best results

If you have the right combination of domain knowledge and hard-won experience driving LLMs, you can fly with these things.

Isn't this a bit trivial?

Yes it is, and that's the point. This was a five minute sidequest. Writing about it here took ten times longer than the exercise itself.

I take on LLM-assisted sidequests like this one dozens of times a week. Many of them are substantially larger and more useful. They are having a very material impact on my work: I can get more done and solve much more interesting problems, because I'm not wasting valuable cycles figuring out ogr2ogr invocations or mucking around with polygon libraries.

Not to mention that I find working this way fun! It feels like science fiction every time I do it. Our AI-assisted future is here right now and I'm still finding it weird, fascinating and deeply entertaining.

LLMs are useful

There are many legitimate criticisms of LLMs. The copyright issues involved in their training, their enormous power consumption and the risks of people trusting them when they shouldn't (considering both accuracy and bias) are three that I think about a lot.

The one criticism I won't accept is that they aren't useful.

One of the greatest misconceptions concerning LLMs is the idea that they are easy to use. They really aren't: getting great results out of them requires a great deal of experience and hard-fought intuition, combined with deep domain knowledge of the problem you are applying them to.

I use these things every day. They help me take on much more interesting and ambitious problems than I could otherwise. I would miss them terribly if they were no longer available to me.

Weeknotes: the aftermath of NICAR - 2024-03-16

NICAR was fantastic this year. Alex and I ran a successful workshop on Datasette and Datasette Cloud, and I gave a lightning talk demonstrating two new GPT-4 powered Datasette plugins - datasette-enrichments-gpt and datasette-extract. I need to write more about the latter one: it enables populating tables from unstructured content (using a variant of this technique) and it's really effective. I got it working just in time for the conference.

I also solved the conference follow-up problem! I've long suffered from poor habits in dropping the ball on following up with people I meet at conferences. This time I used a trick I first learned at a YC demo day many years ago: if someone says they'd like to follow up, get out a calendar and book a future conversation with them right there on the spot.

I have a bunch of exciting conversations lined up over the next few weeks thanks to that, with a variety of different sizes of newsrooms who are either using or want to use Datasette.

Action menus in the Datasette 1.0 alphas

I released two new Datasette 1.0 alphas in the run-up to NICAR: 1.0a12 and 1.0a13.

The main theme of these two releases was improvements to Datasette's "action buttons".

Datasette plugins have long been able to register additional menu items that should be shown on the database and table pages. These were previously hidden behind a "cog" icon in the title of the page - once clicked it would reveal a menu of extra actions.

The cog wasn't discoverable enough, and felt too much like mystery meat navigation. I decided to turn it into a much more clear button.

Here's a GIF showing that new button in action across several different pages on Datasette Cloud (which has a bunch of plugins that use it):

Prior to 1.0a12 Datasette had plugin hooks for just the database and table actions menus. I've added four more:

query_actions() for actions that apply to the query results page. (#2283)

view_actions() for actions that can be applied to a SQL view. (#2297)

row_actions() for actions that apply to the row page. (#2299)

homepage_actions() for actions that apply to the instance homepage. (#2298)

Menu items can now also include an optional description, which is displayed below their label in the actions menu.
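As a rough sketch of what one of these hooks looks like inside a plugin, here is a query_actions() implementation that adds a single menu item with a description. The "Explain this query" item is only an illustration, the hook arguments used are a subset based on my reading of the plugin hook documentation, and the try/except fallback exists purely so the sketch can run without Datasette installed.

```python
import urllib.parse

try:
    from datasette import hookimpl
except ImportError:
    # ASSUMPTION for this sketch only: a no-op stand-in so the example
    # still runs when Datasette is not installed.
    def hookimpl(fn):
        return fn


@hookimpl
def query_actions(datasette, database, sql):
    """Offer an 'Explain this query' item on the query results page."""
    if not sql or sql.lower().startswith("explain"):
        return None  # Nothing useful to add for these queries
    href = (
        datasette.urls.database(database)
        + "?"
        + urllib.parse.urlencode({"sql": "explain " + sql})
    )
    return [
        {
            "href": href,
            "label": "Explain this query",
            # The optional description is shown below the label
            "description": "Show how SQLite plans to execute this query",
        }
    ]
```

Each item is a dictionary with href and label keys, plus the new optional description.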

It's always DNS

This site was offline for 24 hours this week due to a DNS issue. Short version: while I've been paying close attention to the management of domains I've bought in the past few years (datasette.io, datasette.cloud etc) I hadn't been paying attention to simonwillison.net.

... until it turned out I had it on a registrar with an old email address that I no longer had access to, and the domain was switched into "parked" mode because I had failed to pay for renewal!

(I haven't confirmed this yet but I think I may have paid for a ten year renewal at some point, which gives you a full decade to lose track of how it's being paid for.)

I'll give credit to 123-reg (these days a subsidiary of GoDaddy) - they have a well documented domain recovery policy and their support team got me back in control reasonably promptly - only slightly delayed by their UK-based account recovery team operating in a timezone separate from my own.

I registered simonwillison.org and configured that and til.simonwillison.org during the blackout, mainly because it turns out I refer back to my own written content a whole lot during my regular work! Once .net came back I set up redirects using Cloudflare.

Thankfully I don't usually use my domain for my personal email, or sorting this out would have been a whole lot more painful.

The most inconvenient impact was Mastodon: I run my own instance at fedi.simonwillison.net (previously) and losing DNS broke everything, both my ability to post and my ability to even read posts on my timeline.

Blog entries

I published three articles since my last weeknotes:

Releases

I have released so much stuff recently. A lot of this was in preparation for NICAR - I wanted to polish all sorts of corners of Datasette Cloud, which is itself a huge bundle of pre-configured Datasette plugins. A lot of those plugins got a bump!

A few releases deserve a special mention:

datasette-extract, hinted at above, is a new plugin that enables tables in Datasette to be populated from unstructured data in pasted text or images.

datasette-export-database provides a way to export a current snapshot of a SQLite database from Datasette - something that previously wasn't safe to do for databases that were accepting writes. It works by kicking off a background process to use VACUUM INTO in SQLite to create a temporary file with a transactional snapshot of the database state, then lets the user download that file.

llm-claude-3 provides access to the new Claude 3 models from my LLM tool. These models are really exciting: Opus feels better than GPT-4 at most things I've thrown at it, and Haiku is both slightly cheaper than GPT-3.5 Turbo and provides image input support at the lowest price point I've seen anywhere.

datasette-create-view is a new plugin that helps you create a SQL view from a SQL query. I shipped the new query_actions() plugin hook to make this possible.
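The VACUUM INTO approach mentioned for datasette-export-database can be tried with nothing but Python's sqlite3 module. This is a minimal sketch of the SQLite feature, not the plugin's actual code: the function names and the notes table are invented for illustration, and it needs SQLite 3.27 or later.

```python
import os
import sqlite3
import tempfile


def snapshot_database(source_path, snapshot_path):
    """Write a consistent copy of source_path to snapshot_path.

    VACUUM INTO requires that the target file does not already exist.
    """
    conn = sqlite3.connect(source_path)
    try:
        conn.execute("VACUUM INTO ?", (snapshot_path,))
    finally:
        conn.close()


def demo_snapshot():
    """Create a small database, snapshot it, and read the copy back."""
    with tempfile.TemporaryDirectory() as tmp:
        live_path = os.path.join(tmp, "live.db")
        snapshot_path = os.path.join(tmp, "snapshot.db")

        live = sqlite3.connect(live_path)
        live.execute("create table notes (body text)")
        live.execute("insert into notes values ('hello')")
        live.commit()
        live.close()

        snapshot_database(live_path, snapshot_path)

        copy = sqlite3.connect(snapshot_path)
        try:
            return copy.execute("select body from notes").fetchall()
        finally:
            copy.close()


print(demo_snapshot())
```

The snapshot is an ordinary SQLite file, so it can be served as a download and deleted afterwards.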

Here's the full list of recent releases:

datasette-packages 0.2.1 - 2024-03-16

Show a list of currently installed Python packages

datasette-export-database 0.2.1 - 2024-03-16

Export a copy of a mutable SQLite database on demand

datasette-configure-fts 1.1.3 - 2024-03-14

Datasette plugin for enabling full-text search against selected table columns

datasette-upload-csvs 0.9.1 - 2024-03-14

Datasette plugin for uploading CSV files and converting them to database tables

datasette-write 0.3.1 - 2024-03-14

Datasette plugin providing a UI for executing SQL writes against the database

datasette-edit-schema 0.8a1 - 2024-03-14

Datasette plugin for modifying table schemas

llm-claude-3 0.3 - 2024-03-13

LLM plugin for interacting with the Claude 3 family of models

datasette-extract 0.1a3 - 2024-03-13

Import unstructured data (text and images) into structured tables

datasette 1.0a13 - 2024-03-13

An open source multi-tool for exploring and publishing data

datasette-enrichments-quickjs 0.1a1 - 2024-03-09

Enrich data with a custom JavaScript function

dclient 0.4 - 2024-03-08

A client CLI utility for Datasette instances

datasette-saved-queries 0.2.2 - 2024-03-07

Datasette plugin that lets users save and execute queries

datasette-create-view 0.1 - 2024-03-07

Create a SQL view from a query

pypi-to-sqlite 0.2.3 - 2024-03-06

Load data about Python packages from PyPI into SQLite

datasette-uptime 0.1.1 - 2024-03-06

Datasette plugin showing uptime at /-/uptime

datasette-sqlite-authorizer 0.2 - 2024-03-05

Configure Datasette to block operations using the SQLite set_authorizer mechanism

datasette-sqlite-debug-authorizer 0.1.1 - 2024-03-05

Debug SQLite authorizer calls

datasette-expose-env 0.2 - 2024-03-03

Datasette plugin to expose selected environment variables at /-/env for debugging

datasette-tail 0.1a0 - 2024-03-01

Tools for tailing your database

datasette-column-sum 0.1a0 - 2024-03-01

Sum the values in numeric Datasette columns

datasette-schema-versions 0.3 - 2024-03-01

Datasette plugin that shows the schema version of every attached database

datasette-studio 0.1a1 - 2024-02-29

Datasette pre-configured with useful plugins. Experimental alpha.

datasette-scale-to-zero 0.3.1 - 2024-02-29

Quit Datasette if it has not received traffic for a specified time period

datasette-explain 0.2.1 - 2024-02-28

Explain and validate SQL queries as you type them into Datasette

TILs

Redirecting a whole domain with Cloudflare - 2024-03-15

SQLite timestamps with floating point seconds - 2024-03-14

Generating URLs to a Gmail compose window - 2024-03-13

Using packages from JSR with esbuild - 2024-03-02

Link 2024-03-09 Coroutines and web components:

I like using generators in Python but I rarely knowingly use them in JavaScript - I'm probably most exposed to them by Observable, which uses them extensively under the hood as a mostly hidden implementation detail.

Laurent Renard here shows some absolutely ingenious tricks with them as a way of building stateful Web Components.

Quote 2024-03-09

In every group I speak to, from business executives to scientists, including a group of very accomplished people in Silicon Valley last night, much less than 20% of the crowd has even tried a GPT-4 class model.

Less than 5% has spent the required 10 hours to know how they tick.

Link 2024-03-10 datasette/studio:

I'm trying a new way to make Datasette available for small personal data manipulation projects, using GitHub Codespaces.

This repository is designed to be opened directly in Codespaces - detailed instructions in the README.

When the container starts it installs the datasette-studio family of plugins - including CSV upload, some enrichments and a few other useful features - then starts the server running and provides a big green button to click to access the server via GitHub's port forwarding mechanism.

Link 2024-03-10 S3 is files, but not a filesystem:

Cal Paterson helps some concepts click into place for me: S3 imitates a file system but has a number of critical missing features, the most important of which is the lack of partial updates. Any time you want to modify even a few bytes in a file you have to upload and overwrite the entire thing. Almost every database system is dependent on partial updates to function, which is why there are so few databases that can use S3 directly as a backend storage mechanism.

Link 2024-03-11 NICAR 2024 Tipsheets & Audio:

The NICAR data journalism conference was outstanding this year: ~1100 attendees, and every slot on the schedule had at least 2 sessions that I wanted to attend (and usually a lot more).

If you're interested in the intersection of data analysis and journalism it really should be a permanent fixture on your calendar, it's fantastic.

Here's the official collection of handouts (NICAR calls them tipsheets) and audio recordings from this year's event.

Link 2024-03-12 Speedometer 3.0: The Best Way Yet to Measure Browser Performance:

The new browser performance testing suite, released as a collaboration between Blink, Gecko, and WebKit. It's fun to run this in your browser and watch it rattle through 580 tests written using a wide variety of modern JavaScript frameworks and visualization libraries.

Link 2024-03-12 gh-116167: Allow disabling the GIL with PYTHON_GIL=0 or -X gil=0:

Merged into python:main 14 hours ago. Looks like the first phase of Sam Gross's phenomenal effort to provide a GIL free Python (here via an explicit opt-in) will ship in Python 3.13.

Link 2024-03-12 Astro DB:

A new scale-to-zero hosted SQLite offering, described as "A fully-managed SQL database designed exclusively for Astro". It's built on top of LibSQL, the SQLite fork maintained by the Turso database team.

Astro DB encourages defining your tables with TypeScript, and querying them via the Drizzle ORM.

Running Astro locally uses a local SQLite database. Deployed to Astro Cloud switches to their DB product, where the free tier currently includes 1GB of storage, one billion row reads per month and one million row writes per month.

Astro itself is a "web framework for content-driven websites" - so hosted SQLite is a bit of an unexpected product from them, though it does broadly fit the ecosystem they are building.

This approach reminds me of how Deno K/V works - another local SQLite storage solution that offers a proprietary cloud hosted option for deployment.

Link 2024-03-13 The Bing Cache thinks GPT-4.5 is coming:

I was able to replicate this myself earlier today: searching Bing (or apparently Duck Duck Go) for "openai announces gpt-4.5 turbo" would return a link to a 404 page at openai.com/blog/gpt-4-5-turbo with a search result page snippet that announced 256,000 tokens and a knowledge cut-off of June 2024.

I thought the knowledge cut-off must have been a hallucination, but someone got a screenshot of it showing up in the search engine snippet which would suggest that it was real text that got captured in a cache somehow.

I guess this means we might see GPT 4.5 in June then? I have trouble believing that OpenAI would release a model in June with a June knowledge cut-off, given how much time they usually spend red-teaming their models before release.

Or maybe it was one of those glitches like when a newspaper accidentally publishes a pre-written obituary for someone who hasn't died yet - OpenAI may have had a draft post describing a model that doesn't exist yet and it accidentally got exposed to search crawlers.

TIL 2024-03-13 Generating URLs to a Gmail compose window:

I wanted to send out a small batch of follow-up emails for workshop attendees today, and I realized that since I have their emails in a database table I might be able to semi-automate the process. …

Link 2024-03-13 pywebview 5:

pywebview is a library for building desktop (and now Android) applications using Python, based on the idea of displaying windows that use the system default browser to display an interface to the user - styled such that the fact they run on HTML, CSS and JavaScript is mostly hidden from the end-user.

It's a bit like a much simpler version of Electron. Unlike Electron it doesn't bundle a full browser engine (Electron bundles Chromium), which reduces the size of the dependency a lot but does mean that cross-browser differences (quite rare these days) do come back into play.

I tried out their getting started example and it's very pleasant to use - import webview, create a window and then start the application loop running to display it.

You can register JavaScript functions that call back to Python, and you can execute JavaScript in a window from your Python code.

Quote 2024-03-13

The talk track I've been using is that LLMs are easy to take to market, but hard to keep in the market long-term. All the hard stuff comes when you move past the demo and get exposure to real users.

And that's where you find that all the nice little things you got neatly working fall apart. And you need to prompt differently, do different retrieval, consider fine-tuning, redesign interaction, etc. People will treat this stuff differently from "normal" products, creating unique challenges.

Link 2024-03-13 Berkeley Function-Calling Leaderboard:

The team behind Berkeley's Gorilla OpenFunctions model - an Apache 2 licensed LLM trained to provide OpenAI-style structured JSON functions - also maintain a leaderboard of different function-calling models. Their own Gorilla model is the only non-proprietary model in the top ten.

Link 2024-03-13 llm-claude-3 0.3:

Anthropic released Claude 3 Haiku today, their least expensive model: $0.25/million tokens of input, $1.25/million of output (GPT-3.5 Turbo is $0.50/$1.50). Unlike GPT-3.5, Haiku also supports image inputs.

I just released a minor update to my llm-claude-3 LLM plugin adding support for the new model.
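To make those per-million prices concrete, here is a small cost calculator using only the four figures quoted above. The dictionary keys and the function name are labels made up for this sketch, and the token counts in the example are an arbitrary workload.

```python
# (input price, output price) in dollars per million tokens, as quoted above
PRICES_PER_MILLION = {
    "claude-3-haiku": (0.25, 1.25),
    "gpt-3.5-turbo": (0.50, 1.50),
}


def cost_in_dollars(model, input_tokens, output_tokens):
    """Return the cost of one request at the quoted per-million prices."""
    input_price, output_price = PRICES_PER_MILLION[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload: one million tokens in, one million tokens out
for model in PRICES_PER_MILLION:
    print(model, cost_in_dollars(model, 1_000_000, 1_000_000))
```

At those volumes Haiku comes to $1.50 against $2.00 for GPT-3.5 Turbo. The gap is widest for input-heavy workloads, since the input price is half while the output price is only about 17% lower.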

Link 2024-03-14 Guidepup:

I've been hoping to find something like this for years. Guidepup is "a screen reader driver for test automation" - you can use it to automate both VoiceOver on macOS and NVDA on Windows, and it can both drive the screen reader for automated tests and even produce a video at the end of the test.

Also available: @guidepup/playwright, providing integration with the Playwright browser automation testing framework.

I'd love to see open source JavaScript libraries both use something like this for their testing and publish videos of the tests to demonstrate how they work in these common screen readers.

Link 2024-03-14 Lateral Thinking with Withered Technology:

Gunpei Yokoi's product design philosophy at Nintendo ("Withered" is also sometimes translated as "Weathered"). Use "mature technology that can be mass-produced cheaply", then apply lateral thinking to find radical new ways to use it.

This has echoes for me of Dan McKinley's "Choose Boring Technology", which argues that in software projects you should default to a proven, stable stack so you can focus your innovation tokens on the problems that are unique to your project.

TIL 2024-03-14 SQLite timestamps with floating point seconds:

Today I learned about this: …

Link 2024-03-14 How Figma’s databases team lived to tell the scale:

The best kind of scaling war story:

"Figma’s database stack has grown almost 100x since 2020. [...] In 2020, we were running a single Postgres database hosted on AWS’s largest physical instance, and by the end of 2022, we had built out a distributed architecture with caching, read replicas, and a dozen vertically partitioned databases."

I like the concept of "colos", their internal name for sharded groups of related tables arranged such that those tables can be queried using joins.

Also smart: separating the migration into "logical sharding" - where queries all still run against a single database, even though they are logically routed as if the database was already sharded - followed by "physical sharding" where the data is actually copied to and served from the new database servers.

Logical sharding was implemented using PostgreSQL views, which can accept both reads and writes:

CREATE VIEW table_shard1 AS SELECT * FROM table
WHERE hash(shard_key) >= min_shard_range AND hash(shard_key) < max_shard_range

The final piece of the puzzle was DBProxy, a custom PostgreSQL query proxy written in Go that can parse the query to an AST and use that to decide which shard the query should be sent to. Impressively it also has a scatter-gather mechanism, so "select * from table" can be sent to all shards at once and the results combined back together again.
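The routing idea can be sketched in a few lines of Python. This is a toy illustration of hash-range routing and scatter-gather, not Figma's DBProxy (which is written in Go and works on a parsed query AST). The two shard ranges, the SHA-256 based hash and every function name here are assumptions made for the example.

```python
import hashlib

HASH_SPACE = 2 ** 32

# (shard name, min_shard_range, max_shard_range) - hypothetical two-way split
SHARDS = [
    ("table_shard1", 0, HASH_SPACE // 2),
    ("table_shard2", HASH_SPACE // 2, HASH_SPACE),
]


def stable_hash(shard_key):
    """Hash a shard key to an integer in [0, HASH_SPACE), stable across runs."""
    digest = hashlib.sha256(str(shard_key).encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big")


def route(shard_key):
    """Return the name of the shard whose hash range contains this key."""
    hashed = stable_hash(shard_key)
    for name, low, high in SHARDS:
        if low <= hashed < high:
            return name
    raise LookupError("No shard covers hash {}".format(hashed))


def scatter_gather(run_on_shard):
    """Run a callable against every shard and combine the resulting rows."""
    rows = []
    for name, _low, _high in SHARDS:
        rows.extend(run_on_shard(name))
    return rows


print(route("file-123"))
print(scatter_gather(lambda shard: [(shard, "row")]))
```

Queries that filter on the shard key only need route(), while a query like "select * from table" has no key to route on and falls back to scatter_gather().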

Link 2024-03-15 Advanced Topics in Reminders and To Do Lists:

Fred Benenson's advanced guide to the Apple Reminders ecosystem. I live my life by Reminders - I particularly like that you can set them with Siri, so "Hey Siri, remind me to check the chickens made it to bed at 7pm every evening" sets up a recurring reminder without having to fiddle around in the UI. Fred has some useful tips here I hadn't seen before.

Link 2024-03-15 Google Scholar search: "certainly, here is" -chatgpt -llm:

Searching Google Scholar for "certainly, here is" turns up a huge number of academic papers that include parts that were evidently written by ChatGPT - sections that start with "Certainly, here is a concise summary of the provided sections:" are a dead giveaway.

TIL 2024-03-15 Redirecting a whole domain with Cloudflare:

I had to run this site on til.simonwillison.org for 24 hours due to a domain registration mistake I made. …

Link 2024-03-16 Phanpy:

Phanpy is "a minimalistic opinionated Mastodon web client" by Chee Aun.

I think that description undersells it. It's beautifully crafted and designed and has a ton of innovative ideas - the way it displays threads and replies, the "Catch-up" beta feature, it's all a really thoughtful and fresh perspective on how Mastodon can work.

I love that all Mastodon servers (including my own dedicated instance) offer a CORS-enabled JSON API which directly supports building these kinds of alternative clients.

Building a full-featured client like this one is a huge amount of work, but building a much simpler client that just displays the user's incoming timeline could be a pretty great educational project for people who are looking to deepen their front-end development skills.

Link 2024-03-16 npm install everything, and the complete and utter chaos that follows:

Here's an experiment which went really badly wrong: a team of mostly-students decided to see if it was possible to install every package from npm (all 2.5 million of them) on the same machine. As part of that experiment they created and published their own npm package that depended on every other package in the registry.

Unfortunately, in response to the leftpad incident a few years ago npm had introduced a policy that a package cannot be removed from the registry if there exists at least one other package that lists it as a dependency. The new "everything" package inadvertently prevented all 2.5m packages - including many that had no other dependencies - from ever being removed!

Quote 2024-03-16

One year since GPT-4 release. Hope you all enjoyed some time to relax; it’ll have been the slowest 12 months of AI progress for quite some time to come.

Link 2024-03-17 How does SQLite store data?:

Michal Pitr explores the design of the SQLite on-disk file format, as part of building an educational implementation of SQLite from scratch in Go.

Link 2024-03-17 Add ETag header for static responses:

I've been procrastinating on adding better caching headers for static assets (JavaScript and CSS) served by Datasette for several years, because I've been wanting to implement the perfect solution that sets far-future cache headers on every asset and ensures the URLs change when they are updated.

Agustin Bacigalup just submitted the best kind of pull request: he observed that adding ETag support for static assets would side-step the complexity while adding much of the benefit, and implemented it along with tests.

It's a substantial performance improvement for any Datasette instance with a number of JavaScript plugins... like the ones we are building on Datasette Cloud. I'm just annoyed we didn't ship something like this sooner!
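For anyone unfamiliar with how ETags avoid re-sending unchanged assets, here is a minimal sketch of the mechanism. It is not the code from the pull request: the function names, the choice of SHA-256 and the lowercase header dictionary are all assumptions made for illustration.

```python
import hashlib


def make_etag(content):
    """Derive a quoted ETag value from the bytes of a static asset."""
    return '"{}"'.format(hashlib.sha256(content).hexdigest())


def respond(content, request_headers):
    """Return (status, headers, body) for a request for a static asset.

    If the browser already holds this exact version, it sends the ETag back
    in If-None-Match and gets an empty 304 instead of the full file.
    """
    etag = make_etag(content)
    if request_headers.get("if-none-match") == etag:
        return 304, {"etag": etag}, b""
    return 200, {"etag": etag}, content


asset = b"console.log('plugin loaded');"
status, headers, body = respond(asset, {})
print(status, len(body))

# The browser repeats the request with the ETag it was given
status, headers, body = respond(asset, {"if-none-match": headers["etag"]})
print(status, len(body))
```

The URL never has to change: when the file changes, its hash changes, the ETag stops matching and the browser gets the new content with a 200.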

Link 2024-03-17 Grok-1 code and model weights release:

xAI have released their Grok-1 model under an Apache 2 license (for both weights and code). It's distributed as a 318.24G torrent file and likely requires 320GB of VRAM to run, so needs some very hefty hardware.

The accompanying blog post (via link) says "Trained from scratch by xAI using a custom training stack on top of JAX and Rust in October 2023", and describes it as a "314B parameter Mixture-of-Experts model with 25% of the weights active on a given token".

Very little information on what it was actually trained on, all we know is that it was "a large amount of text data, not fine-tuned for any particular task".

TIL 2024-03-17 Programmatically comparing Python version strings:

I found myself wanting to compare the version numbers 0.63.1, 1.0 and the 1.0a13 in Python code, in order to mark a pytest test as skipped if the installed version of Datasette was pre-1.0. …

Quote 2024-03-18

It's hard to overstate the value of LLM support when coding for fun in an unfamiliar language. [...] This example is totally trivial in hindsight, but might have taken me a couple mins to figure out otherwise. This is a bigger deal than it seems! Papercuts add up fast and prevent flow. (A lot of being a senior engineer is just being proficient enough to avoid papercuts).

Link 2024-03-18 900 Sites, 125 million accounts, 1 vulnerability:

Google's Firebase development platform encourages building applications (mobile and web) which talk directly to the underlying data store, reading and writing from "collections" with access protected by Firebase Security Rules.

Unsurprisingly, a lot of development teams make mistakes with these.

This post describes how a security research team built a scanner that found over 124 million unprotected records across 900 different applications, including huge amounts of PII: 106 million email addresses, 20 million passwords (many in plaintext) and 27 million instances of "Bank details, invoices, etc".

Most worrying of all, only 24% of the site owners they contacted shipped a fix for the misconfiguration.

Link 2024-03-19 The Tokenizer Playground:

I built a tool like this a while ago, but this one is much better: it provides an interface for experimenting with tokenizers from a wide range of model architectures, including Llama, Claude, Mistral and Grok-1 - all running in the browser using Transformers.js.

Link 2024-03-19 DiskCache:

Grant Jenks built DiskCache as an alternative caching backend for Django (also usable without Django), using a SQLite database on disk. The performance numbers are impressive - it even beats memcached in microbenchmarks, due to avoiding the need to access the network.

The source code (particularly in core.py) is a great case-study in SQLite performance optimization, after five years of iteration on making it all run as fast as possible.
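To make the general idea concrete, here is a rough sketch of a key/value cache backed by SQLite, using only the Python standard library. It is not DiskCache's actual schema, API or implementation: the class name TinySQLiteCache, the table layout and the ttl parameter are all assumptions made for illustration.

```python
import pickle
import sqlite3
import time


class TinySQLiteCache:
    """Hypothetical illustration only; not how DiskCache itself is written."""

    def __init__(self, path=":memory:"):
        # Pass a file path instead of ":memory:" to persist the cache on disk.
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS cache ("
            "key TEXT PRIMARY KEY, value BLOB, expires_at REAL)"
        )

    def set(self, key, value, ttl=None):
        # ttl is in seconds; None means the entry never expires.
        expires_at = time.time() + ttl if ttl is not None else None
        with self.conn:  # commits on success, rolls back on error
            self.conn.execute(
                "INSERT OR REPLACE INTO cache (key, value, expires_at) "
                "VALUES (?, ?, ?)",
                (key, pickle.dumps(value), expires_at),
            )

    def get(self, key, default=None):
        row = self.conn.execute(
            "SELECT value, expires_at FROM cache WHERE key = ?", (key,)
        ).fetchone()
        if row is None:
            return default
        value, expires_at = row
        if expires_at is not None and expires_at < time.time():
            return default  # entry has expired
        return pickle.loads(value)


cache = TinySQLiteCache()
cache.set("answer", {"value": 42})
```

Everything here happens in-process against a local database, which is the property the entry above credits for the microbenchmark results: there is no network round trip.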

Quote 2024-03-19

People share a lot of sensitive material on Quora - controversial political views, workplace gossip and compensation, and negative opinions held of companies. Over many years, as they change jobs or change their views, it is important that they can delete or anonymize their previously-written answers.

We opt out of the wayback machine because inclusion would allow people to discover the identity of authors who had written sensitive answers publicly and later had made them anonymous, and because it would prevent authors from being able to remove their content from the internet if they change their mind about publishing it.

Link 2024-03-20 Papa Parse:

I've been trying out this JavaScript library for parsing CSV and TSV data today and I'm very impressed. It's extremely fast, has all of the advanced features I want (streaming support, optional web workers, automatically detecting delimiters and column types), has zero dependencies and weighs just 19KB minified - 6.8KB gzipped.

The project is 11 years old now. It was created by Matt Holt, who later went on to create the Caddy web server. Today it's maintained by Sergi Almacellas Abellana.

Link 2024-03-20 AI Prompt Engineering Is Dead. Long live AI prompt engineering:

Ignoring the clickbait in the title, this article summarizes research around the idea of using machine learning models to optimize prompts - as seen in tools such as Stanford's DSPy and Google's OPRO.

The article includes possibly the biggest abuse of the term "just" I have ever seen:

"But that’s where hopefully this research will come in and say ‘don’t bother.’ Just develop a scoring metric so that the system itself can tell whether one prompt is better than another, and then just let the model optimize itself."

Developing a scoring metric to determine which prompt works better remains one of the hardest challenges in generative AI!

Imagine if we had a discipline of engineers who could reliably solve that problem - who spent their time developing such metrics and then using them to optimize their prompts. If the term "prompt engineer" hadn't already been reduced to basically meaning "someone who types out prompts" it would be a pretty fitting term for such experts.

Link 2024-03-20 Every dunder method in Python:

Trey Hunner: "Python includes 103 'normal' dunder methods, 12 library-specific dunder methods, and at least 52 other dunder attributes of various types."

This cheat sheet doubles as a tour of many of the more obscure corners of the Python language and standard library.

I did not know that Python has over 100 dunder methods now! Quite a few of these were new to me, like __class_getitem__ which can be used to implement type annotations such as list[int].
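Here is a small sketch of how __class_getitem__ makes subscript syntax work on a class. The Box class is hypothetical, and returning types.GenericAlias is just one reasonable choice for this illustration (it requires Python 3.9 or later).

```python
import types


class Box:
    """Hypothetical container class used only for illustration."""

    def __class_getitem__(cls, item):
        # Called when the class itself is subscripted, e.g. Box[int].
        # Nothing is indexed; we just return an object describing the alias.
        return types.GenericAlias(cls, item)


alias = Box[int]
# alias.__origin__ is the Box class and alias.__args__ holds the parameters.
```

The built-in list[int] produces the same kind of alias object, which is why it can be used in annotations without any typing import.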

Link 2024-03-20 Skew protection in Vercel:

Version skew is a name for the bug that occurs when your user loads a web application and then unintentionally keeps that browser tab open across a deployment of a new version of the app. If you're unlucky this can lead to broken behaviour, where a client makes a call to a backend endpoint that has changed in an incompatible way.

Vercel have an ingenious solution to this problem. Their platform already makes it easy to deploy many different instances of an application. You can now turn on "skew protection" for a number of hours which will keep older versions of your backend deployed.

The application itself can then include its desired deployment ID in an x-deployment-id header, a __vdpl cookie or a ?dpl= query string parameter.
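As a rough sketch of what resolving those three signals could look like on the receiving side: the function name, the plain-dict inputs and especially the lookup order (header, then cookie, then query string) are assumptions made for illustration, not documented Vercel behaviour.

```python
from urllib.parse import parse_qs, urlsplit


def resolve_deployment_id(url, headers, cookies):
    """Return the deployment ID a request asks for, or None.

    Hypothetical helper: the precedence used here is an assumption.
    Header names are expected to be lower-cased already.
    """
    header_value = headers.get("x-deployment-id")
    if header_value:
        return header_value
    cookie_value = cookies.get("__vdpl")
    if cookie_value:
        return cookie_value
    # Fall back to the ?dpl= query string parameter.
    values = parse_qs(urlsplit(url).query).get("dpl")
    return values[0] if values else None
```

A request that carries none of the three would return None here, which a router could treat as "serve the latest deployment".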

TIL 2024-03-20 Running self-hosted QuickJS in a browser:

I want to try using QuickJS compiled to WebAssembly in a browser as a way of executing untrusted user-provided JavaScript in a sandbox. …

Link 2024-03-20 Releasing Common Corpus: the largest public domain dataset for training LLMs:

Released today. 500 billion words from "a wide diversity of cultural heritage initiatives". 180 billion words of English, 110 billion of French, 30 billion of German, then Dutch, Spanish and Italian.

Includes quite a lot of US public domain data - 21 million digitized out-of-copyright newspapers (or do they mean newspaper articles?)

"This is only an initial part of what we have collected so far, in part due to the lengthy process of copyright duration verification. In the following weeks and months, we’ll continue to publish many additional datasets also coming from other open sources, such as open data or open science."

Coordinated by French AI startup Pleias and supported by the French Ministry of Culture, among others.

I can't wait to try a model that's been trained on this.

TIL 2024-03-20 Reviewing your history of public GitHub repositories using ClickHouse:

There's a story going around at the moment that people have found code from their private GitHub repositories in the AI training data known as The Stack, using this search tool: https://huggingface.co/spaces/bigcode/in-the-stack …

Link 2024-03-20 GitHub Public repo history tool:

I built this Observable Notebook to run queries against the GH Archive (via ClickHouse) to try to answer questions about repository history - in particular, were they ever made public as opposed to private in the past.

It works by combining PublicEvent events (moments when a private repo was made public) with the most recent PushEvent event for each of a user's repositories.

Link 2024-03-21 Talking about Django’s history and future on Django Chat:

Django co-creator Jacob Kaplan-Moss sat down with the Django Chat podcast team to talk about Django's history, his recent return to the Django Software Foundation board and what he hopes to achieve there.

Here's his post about it, where he used Whisper and Claude to extract some of his own highlights from the conversation.

Quote 2024-03-21

I think most people have this naive idea of consensus meaning “everyone agrees”. That’s not what consensus means, as practiced by organizations that truly have a mature and well developed consensus driven process.

Consensus is not “everyone agrees”, but [a model where] people are more aligned with the process than they are with any particular outcome, and they’ve all agreed on how decisions will be made.

Link 2024-03-21 Redis Adopts Dual Source-Available Licensing:

Well this sucks: after fifteen years (and contributions from more than 700 people), Redis is dropping the 3-clause BSD license going forward, instead being "dual-licensed under the Redis Source Available License (RSALv2) and Server Side Public License (SSPLv1)" from Redis 7.4 onwards.

Link 2024-03-21 DuckDB as the New jq:

The DuckDB CLI tool can query JSON files directly, making it a surprisingly effective replacement for jq. Paul Gross demonstrates the following query:

select license->>'key' as license, count(*) from 'repos.json' group by 1

repos.json contains an array of {"license": {"key": "apache-2.0"}..} objects. This example query shows counts for each of those licenses.
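For comparison, here is a sketch of the same aggregation in plain Python, which may help clarify what the query computes. The sample data is made up for illustration and stands in for the contents of repos.json.

```python
import json
from collections import Counter

# Made-up sample standing in for repos.json.
repos_json = """
[
  {"license": {"key": "apache-2.0"}},
  {"license": {"key": "mit"}},
  {"license": {"key": "apache-2.0"}},
  {"license": null}
]
"""

repos = json.loads(repos_json)

# Equivalent of: select license->>'key', count(*) ... group by 1
# Repos with no license are grouped under None, much like a SQL NULL group.
license_counts = Counter(
    (repo.get("license") or {}).get("key") for repo in repos
)
```

The DuckDB version expresses the same thing in a single line and reads the file directly, which is the appeal described above.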

Quote 2024-03-21

At this point, I’m confident saying that 75% of what generative-AI text and image platforms can do is useless at best and, at worst, actively harmful. Which means that if AI companies want to onboard the millions of people they need as customers to fund themselves and bring about the great AI revolution, they’ll have to perpetually outrun the millions of pathetic losers hoping to use this tech to make a quick buck. Which is something crypto has never been able to do.

In fact, we may have already reached a point where AI images have become synonymous with scams and fraud.

Link 2024-03-22 The Dropflow Playground:

Dropflow is a "CSS layout engine" written in TypeScript and taking advantage of the HarfBuzz text shaping engine (used by Chrome, Android, Firefox and more) compiled to WebAssembly to implement glyph layout.

This linked demo is fascinating: on the left hand side you can edit HTML with inline styles, and the right hand side then updates live to show that content rendered by Dropflow in a canvas element.

Why would you want this? It lets you generate images and PDFs with excellent performance using your existing knowledge of HTML and CSS. It's also just really cool!

Link 2024-03-22 Threads has entered the fediverse:

Threads users with public profiles in certain countries can now turn on a setting which makes their posts available in the fediverse - so users of ActivityPub systems such as Mastodon can follow their accounts to subscribe to their posts.

It's only a partial integration at the moment: Threads users can't themselves follow accounts from other providers yet, and their notifications will show them likes but not boosts or replies: "For now, people who want to see replies on their posts on other fediverse servers will have to visit those servers directly."

Depending on how you count, Mastodon has around 9m user accounts of which 1m are active. Threads claims more than 130m active monthly users. The Threads team are developing these features cautiously which is reassuring to see - a clumsy or thoughtless integration could cause all sorts of damage just from the sheer scale of their service.
