Accessing Llama 2 from the command-line with the llm-replicate plugin

Llama 2 is a really big deal

In this newsletter:

Accessing Llama 2 from the command-line with the llm-replicate plugin

Weeknotes: Self-hosted language models with LLM plugins, a new Datasette tutorial, a dozen package releases, a dozen TILs

Plus 2 links and 2 quotations and 2 TILs

Accessing Llama 2 from the command-line with the llm-replicate plugin - 2023-07-18

The big news today is Llama 2, the new openly licensed Large Language Model from Meta AI. It's a really big deal:

Llama 2 is available for free for research and commercial use.

(swyx spotted that it was rebranded from LLaMA 2 to Llama 2 a few hours ago.)

Facebook's original LLaMA model, released in February, kicked off a seismic wave of innovation in the world of open source LLMs - from fine-tuned variants to from-scratch recreations. I wrote about the impact this was having a few weeks after its original release in Large language models are having their Stable Diffusion moment. If anything I under-estimated how significant this was going to be.

There was just one catch: you couldn't use it for commercial purposes.

That's changed with Llama 2!

It does still have a couple of interesting limitations, emphasis mine:

You will not use the Llama Materials or any output or results of the Llama Materials to improve any other large language model (excluding Llama 2 or derivative works thereof).

If, on the Llama 2 version release date, the monthly active users of the products or services made available by or for Licensee, or Licensee’s affiliates, is greater than 700 million monthly active users in the preceding calendar month, you must request a license from Meta, which Meta may grant to you in its sole discretion[...]

This still makes it available for most commercial purposes. Let's try it out.

Accessing Llama 2 using LLM

My LLM tool provides command-line access to a wide variety of language models, both via web APIs and self-hosted on your own machine.

Last week I added plugin support, so an independently released plugin can now add new models to the existing CLI tool.

The brand new llm-replicate plugin provides CLI access to models hosted on Replicate, and this morning a16z-infra released a16z-infra/llama13b-v2-chat which provides Replicate API access to the new Llama 2 13B chat model.

You'll need a Replicate API key.

Install LLM like this:

pip install llm

(I like to use pipx.)

Or if you don't have pip set up but can use Homebrew:

brew install simonw/llm/llm

Run llm --version to confirm it's installed.

Next, install the llm-replicate plugin:

llm install llm-replicate

The plugin adds a new llm replicate set of commands - see them with llm --help.

Configure your Replicate API key so the plugin can talk to the API:

llm keys set replicate
Enter key: <paste key here>

Register the new a16z-infra/llama13b-v2-chat model with the plugin:

llm replicate add a16z-infra/llama13b-v2-chat \
  --chat --alias llama2

This tells the plugin that it's a "chat" model, which means you can have continuing conversations with it, rather than just sending single prompts.

Now we can send a prompt, using the llama2 alias we configured there:

llm -m llama2 "Ten great names for a pet pelican"

I get this:

Certainly! Here are ten great names for a pet pelican:

Peanut: A cute and playful name for a small pelican.

Puddles: A fun and silly name for a pelican that loves to splash around in water.

Pete: A classic name that suits a friendly and outgoing pelican.

Penelope: A pretty and elegant name for a female pelican.

Percy: A charming and sophisticated name for a male pelican.

Pippy: A playful and affectionate name for a pelican with a lively personality.

Pelty: A fun and quirky name for a pelican with a unique sense of style.

Pip: A sweet and endearing name for a small pelican.

Plucky: A feisty and adventurous name for a pelican with a big personality.

Poppy: A lovely and delicate name for a female pelican with a sweet disposition.

I hope these suggestions help you find the perfect name for your pet pelican!

And because it's a chat model, we can send a follow-up prompt to the same conversation:

llm -c "Five more and make them more nautical"

Ahoy! Here be five more nautical names for yer pet pelican:

Seabird: A fitting name for a pelican that loves the ocean and all its wonders.

Captain Hook: A swashbuckling name for a pelican with a bold and fearless spirit.

Anchor: A sturdy and dependable name for a pelican that's always grounded and stable.

Finley: A fishy name for a pelican with a love for the sea and all its creatures.

Buoyant: A bouncy and cheerful name for a pelican with a lighthearted and carefree attitude.

Hope these suggestions float yer boat, matey!
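Because llm -c continues the most recent conversation, the earlier exchange has to reach the model somehow. One common approach for chat models is to resend the prior prompts and responses along with each new prompt. The sketch below illustrates that general idea under stated assumptions: the Conversation class and the "User:/Assistant:" transcript format are hypothetical, and this is not necessarily how LLM or the llm-replicate plugin assemble prompts for Llama 2.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Conversation:
    """Hypothetical chat history: a list of (prompt, response) pairs."""

    exchanges: List[Tuple[str, str]] = field(default_factory=list)

    def build_prompt(self, new_prompt: str) -> str:
        # Replay every earlier exchange so the model can "see" the conversation.
        # The User:/Assistant: labels are an assumed format, not Llama 2's real one.
        lines = []
        for user, assistant in self.exchanges:
            lines.append(f"User: {user}")
            lines.append(f"Assistant: {assistant}")
        lines.append(f"User: {new_prompt}")
        lines.append("Assistant:")
        return "\n".join(lines)

    def record(self, prompt: str, response: str) -> None:
        # Store the completed exchange so the next prompt includes it
        self.exchanges.append((prompt, response))
```

The first prompt goes out on its own; after record() stores the reply, a follow-up such as "Five more and make them more nautical" is sent with the whole earlier exchange in front of it. The cost of this design is that every turn resends the full history, so long conversations eventually run into the model's context limit.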

LLM logs every prompt and response sent through it to a SQLite database. I'm using --truncate here to shorten the output to include in this post:

llm logs --truncate

[
  {
    "id": "01h5n5b3q6s54e03wtvqe90w2g",
    "model": "replicate-a16z-infra-llama13b-v2-chat",
    "prompt": "Five more and make them more nautical",
    "system": null,
    "response": "Ahoy matey! Here be five more nautical-themed names for yer pet pelican:\n\n1. Captain Hook\n2. Anch...",
    "response_json": null,
    "conversation_id": "01h5n5atsqfg4bhxc53j3ymnx2",
    "duration_ms": 7364,
    "datetime_utc": "2023-07-18T18:48:51.999944",
    "conversation_name": "Ten great names for a pet pelic\u2026",
    "conversation_model": "replicate-a16z-infra-llama13b-v2-chat"
  },
  {
    "id": "01h5n5atss6k9zsr5qs5hb2wzd",
    "model": "replicate-a16z-infra-llama13b-v2-chat",
    "prompt": "Ten great names for a pet pelican",
    "system": null,
    "response": "Sure, here are ten great names for a pet pelican:\n\n1. Pelty\n2. Peanut\n3. Puddles\n4. Nibbles\n5. Fu...",
    "response_json": null,
    "conversation_id": "01h5n5atsqfg4bhxc53j3ymnx2",
    "duration_ms": 7873,
    "datetime_utc": "2023-07-18T18:48:42.356413",
    "conversation_name": "Ten great names for a pet pelic\u2026",
    "conversation_model": "replicate-a16z-infra-llama13b-v2-chat"
  }
]

Or run llm logs path to see the path to the SQLite database file. If you have Datasette installed you can open the logs database like this:

datasette "$(llm logs path)"

Or with Datasette Desktop:

open "$(llm logs path)"
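The JSON printed by llm logs is also easy to post-process. As a minimal sketch, assuming you have captured that output as a string (for example by saving it to a file), this groups the logged prompts by conversation_id, orders them by datetime_utc and totals duration_ms. The function name summarize_conversations is hypothetical; the field names and sample values come from the truncated log output in this post.

```python
import json
from collections import defaultdict
from typing import Dict, List

# Two records trimmed down from the `llm logs --truncate` output in this post
SAMPLE_LOGS = """
[
  {"id": "01h5n5b3q6s54e03wtvqe90w2g",
   "prompt": "Five more and make them more nautical",
   "conversation_id": "01h5n5atsqfg4bhxc53j3ymnx2",
   "duration_ms": 7364,
   "datetime_utc": "2023-07-18T18:48:51.999944"},
  {"id": "01h5n5atss6k9zsr5qs5hb2wzd",
   "prompt": "Ten great names for a pet pelican",
   "conversation_id": "01h5n5atsqfg4bhxc53j3ymnx2",
   "duration_ms": 7873,
   "datetime_utc": "2023-07-18T18:48:42.356413"}
]
"""


def summarize_conversations(raw_json: str) -> Dict[str, dict]:
    """Group logged prompts by conversation, oldest first, and total their durations."""
    records = json.loads(raw_json)
    grouped: Dict[str, List[dict]] = defaultdict(list)
    for record in records:
        grouped[record["conversation_id"]].append(record)

    summary = {}
    for conversation_id, items in grouped.items():
        # Timestamps share one ISO 8601 format, so string order is time order
        items.sort(key=lambda r: r["datetime_utc"])
        summary[conversation_id] = {
            "prompts": [r["prompt"] for r in items],
            "total_duration_ms": sum(r["duration_ms"] for r in items),
        }
    return summary


for cid, info in summarize_conversations(SAMPLE_LOGS).items():
    print(cid, info["total_duration_ms"], info["prompts"])
```

To use it on real data, replace SAMPLE_LOGS with the text of your own saved log output.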

What's next

I'm really excited about Llama 2. I expect we'll start seeing all kinds of innovation on top of it as the model weights themselves start to roll out.

I'm particularly looking forward to:

The fine-tuned models. Alpaca, Vicuna et al. did amazing things with fine-tuning on top of the original LLaMA. I expect we'll see dozens of fine-tuned models over the next few weeks.

Implementation of the ReAct and Toolformer patterns, which will enable Llama 2 to drive external tools in a similar way to OpenAI Functions. The Llama 2 paper mentions testing against Toolformer prompts already, so this hopefully won't be too difficult.

Hopefully this time we'll start to see a concerted effort from people to document what kind of prompts work well with Llama 2 for practical purposes, since I expect this model will be an influential piece of the ecosystem for some time to come.

Weeknotes: Self-hosted language models with LLM plugins, a new Datasette tutorial, a dozen package releases, a dozen TILs - 2023-07-16

A lot of stuff to cover from the past two and a half weeks.

LLM and self-hosted language model plugins

My biggest project was the new version of my LLM tool for interacting with Large Language Models. LLM now accepts plugins for adding alternative language models to the tool, meaning it's now applicable to more than just the OpenAI collection.

I figured out quite a few of the details of this while offline on a camping trip up in the Northern California redwoods. Without a connection to the OpenAI APIs, I was forced to figure out how to work with LLMs that I could host on my own computer.

Comprehensive documentation is sorely lacking in the world of generative AI. I've decided to push back against that for LLM, so I spent a bunch of time working on an extremely comprehensive tutorial for writing a plugin that adds a new language model to the tool.

As part of researching this tutorial I finally figured out how to build a Python package using just a pyproject.toml file, with no setup.py or setup.cfg or anything else like that. I wrote that up in detail in Python packages with pyproject.toml and nothing else, and I've started using that pattern for all of my new Python packages.

LLM also now includes a Python API for interacting with models, which provides an abstraction that works the same for the OpenAI models and for other models (including self-hosted models) installed via plugins. Here's the documentation for that - it ends up looking like this:

import llm

model = llm.get_model("gpt-3.5-turbo")
model.key = 'YOUR_API_KEY_HERE'
response = model.prompt("Five surprising names for a pet pelican")
for chunk in response:
    print(chunk, end="")

To use another model, just swap its name in for gpt-3.5-turbo. The self-hosted models provided by the llm-gpt4all plugin work the same way:

pip install llm-gpt4all

Then:

import llm

model = llm.get_model("ggml-vicuna-7b-1")
response = model.prompt("Five surprising names for a pet pelican")
# You can do this instead of looping through the chunks:
print(response.text())

I've released three plugins so far:

llm-gpt4all with 17 self-hosted models from the GPT4All project.

llm-palm with Google's PaLM 2 language model, via their API.

llm-mpt30b providing the 19GB MPT-30B model, using TheBloke/mpt-30B-GGML.

I'm looking forward to someone else following the tutorial and releasing their own plugin!
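The Python API lets you either loop over a response chunk by chunk or call response.text() to get everything at once. To illustrate how a single object can support both styles, here is a self-contained stand-in. FakeResponse and its internals are hypothetical; this is not LLM's actual Response class, just one way the pattern can work.

```python
from typing import Iterable, Iterator, List


class FakeResponse:
    """Hypothetical stand-in for a streamed model response (not LLM's Response class)."""

    def __init__(self, chunks: Iterable[str]) -> None:
        self._pending: Iterator[str] = iter(chunks)  # chunks not yet received from the "model"
        self._received: List[str] = []  # chunks already received, kept for replay

    def __iter__(self) -> Iterator[str]:
        # Replay anything received so far, then keep pulling from the stream.
        # Not designed for two iterators consuming the stream at the same time.
        for chunk in list(self._received):
            yield chunk
        for chunk in self._pending:
            self._received.append(chunk)
            yield chunk

    def text(self) -> str:
        # Drain whatever is left, then return the complete response as one string
        for _ in self:
            pass
        return "".join(self._received)


response = FakeResponse(["Pelly", " and ", "Pouch"])
for chunk in response:
    print(chunk, end="")
print()
print(response.text())
```

Keeping the received chunks means text() still works after you have already streamed the output, and calling it twice does not re-run the model.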

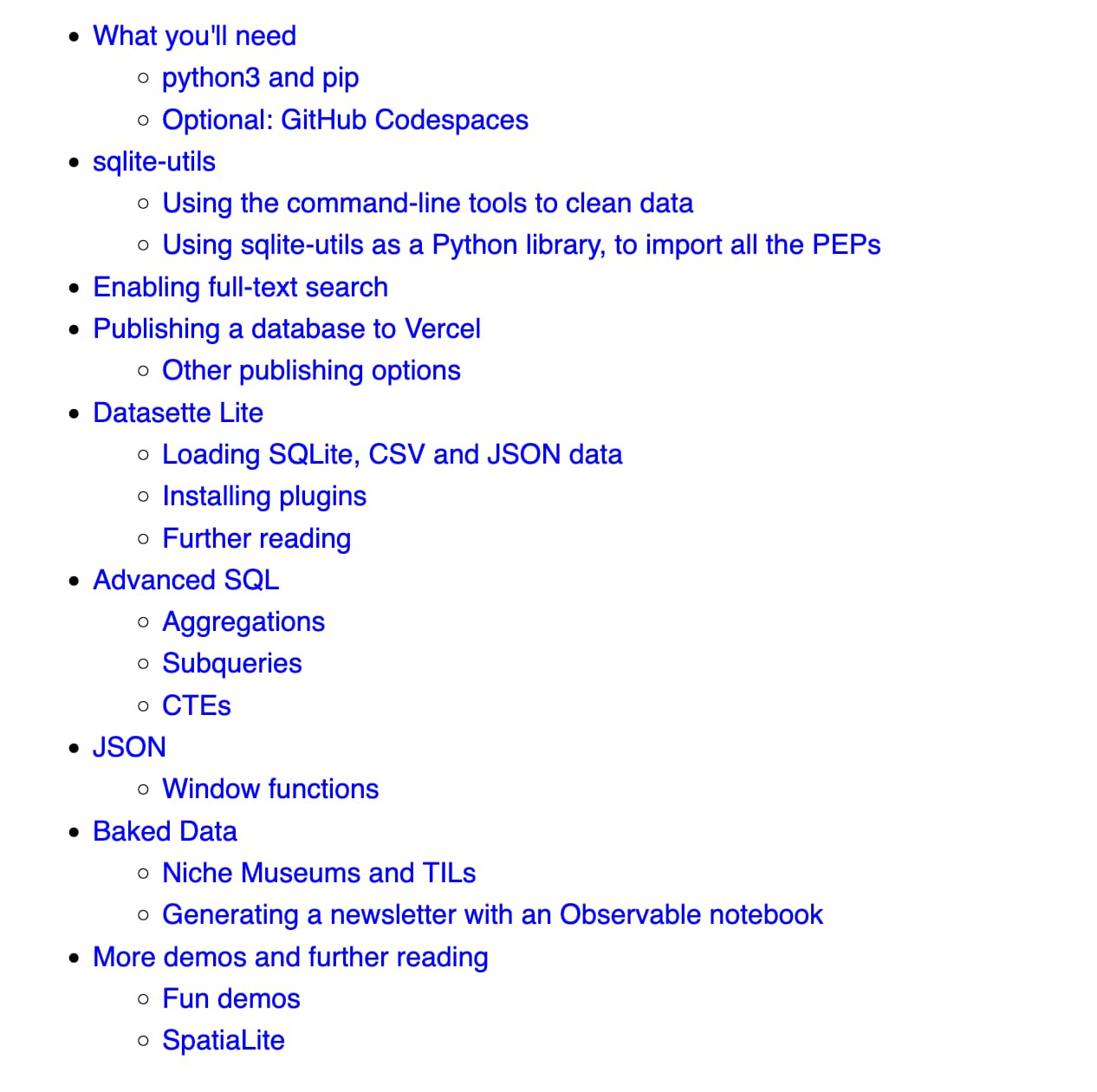

A new tutorial: Data analysis with SQLite and Python

I presented this as a 2hr45m tutorial at PyCon a few months ago. The video is now available, and I like to try to turn these kinds of things into more permanent documentation.

The Datasette website has a growing collection of tutorials, and I decided to make that the final home for this one too.

Data analysis with SQLite and Python now has the full 2hr45m video plus an improved version of the handout I used for the talk. The written material there should also be valuable for people who don't want to spend nearly three hours watching the video!

As part of putting that page together I solved a problem I've wanted to figure out for a long time: building a custom Jinja block tag that looks like this:

{% markdown %}
# This will be rendered as markdown

- Bulleted
- List
{% endmarkdown %}

I released that in datasette-render-markdown 2.2. I also wrote up a TIL on Custom Jinja template tags with attributes describing the pattern I used.

One bonus feature for that tutorial: I decided to drop in a nested table of contents, automatically derived from the HTML headers on the page.

I wrote the code for this entirely using the new ChatGPT Code Interpreter, which can write Python based on your description and, crucially, execute it and see if it works.

Here's my ChatGPT transcript showing how I built the feature.
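The code Code Interpreter wrote isn't reproduced here. As an independent, minimal sketch of the same idea, assuming the headings carry id attributes, this uses the standard library's html.parser to collect h1 to h6 elements, nests each heading under the nearest earlier heading with a lower level, and renders nested lists of in-page links. HeadingCollector, build_toc and render_toc are hypothetical names.

```python
from html import escape
from html.parser import HTMLParser
from typing import List, Optional, Tuple

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class HeadingCollector(HTMLParser):
    """Collect (level, id, text) for every h1-h6 element, in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.headings: List[Tuple[int, Optional[str], str]] = []
        self._current: Optional[list] = None  # [level, id, text so far] while inside a heading

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self._current = [int(tag[1]), dict(attrs).get("id"), ""]

    def handle_data(self, data):
        if self._current is not None:
            self._current[2] += data  # also picks up text inside nested tags like <code>

    def handle_endtag(self, tag):
        if self._current is not None and tag == f"h{self._current[0]}":
            level, anchor, text = self._current
            self.headings.append((level, anchor, text.strip()))
            self._current = None


def build_toc(headings):
    """Nest each heading under the nearest earlier heading with a lower level."""
    root = {"children": []}
    stack = [(0, root)]  # (level, node); level 0 is a virtual root that is never popped
    for level, anchor, text in headings:
        node = {"title": text, "id": anchor, "children": []}
        while stack[-1][0] >= level:
            stack.pop()
        stack[-1][1]["children"].append(node)
        stack.append((level, node))
    return root["children"]


def render_toc(nodes) -> str:
    """Render the nested structure as nested <ul> lists of in-page links."""
    if not nodes:
        return ""
    items = "".join(
        f'<li><a href="#{escape(node["id"] or "")}">{escape(node["title"])}</a>'
        f'{render_toc(node["children"])}</li>'
        for node in nodes
    )
    return f"<ul>{items}</ul>"
```

Feeding a page's HTML to HeadingCollector and passing its headings through build_toc and render_toc yields markup that can be dropped into the page template. Headings without an id end up with an empty href, so this assumes the Markdown renderer adds ids to headings.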

I've been using ChatGPT Code Interpreter for a few months now, and I'm completely hooked: I think it's the most interesting thing in the whole AI space at the moment.

I participated in a Code Interpreter Latent Space episode to talk about it, which ended up drawing 17,000 listeners on Twitter Spaces and is now also available as a podcast episode, neatly edited together by swyx.

Symbex --check and --rexec

Symbex is my Python CLI tool for quickly finding Python functions and classes and outputting either the full code or just the signature of the matching symbol. I first wrote about that here.

symbex 1.1 adds two new features.

symbex --function --undocumented --check

This new --check mode is designed to run in Continuous Integration environments. If it finds any symbols matching the filters (in this case functions that are missing their docstring) it returns a non-zero exit code, which will fail the CI step.

It's an imitation of black . --check - the idea is that Symbex can now be used to enforce code quality rules, such as requiring docstrings or type annotations.
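Symbex's own implementation isn't shown here. As a minimal sketch of the same CI pattern, assuming only the standard library's ast module, this finds functions (including methods) without docstrings and turns the result into an exit code. undocumented_functions and check are hypothetical names.

```python
import ast
import sys
from typing import List


def undocumented_functions(source: str) -> List[str]:
    """Return names of functions and methods in source that lack a docstring."""
    tree = ast.parse(source)
    return [
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        and ast.get_docstring(node) is None
    ]


def check(source: str) -> int:
    """Mimic a --check style mode: return 1 if anything matched (fails CI), else 0."""
    missing = undocumented_functions(source)
    for name in missing:
        print(f"missing docstring: {name}", file=sys.stderr)
    return 1 if missing else 0
```

In a CI step you would read each file, call check() on its contents and pass the combined result to sys.exit(), so any match turns into a non-zero exit code, the same contract black . --check uses.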

The other new feature is --rexec. This is an extension of the existing --replace feature, which lets you find a symbol in your code and replace its body with new code.

--rexec takes a shell expression. The body of the matching symbol will be piped into that command, and its output will be used as the replacement.

Which means you can do things like this:

symbex my_function \
  --rexec "llm --system 'add type hints and a docstring'"

This will find def my_function() and its body, pass that through llm (using the gpt-3.5-turbo default model, but you can specify -m gpt-4 or any other model to use something else), and then take the output and update the file in-place with the new implementation.

As a demo, I ran it against this:

def my_function(a, b):
    return a + b + 3

And got back:

def my_function(a: int, b: int) -> int:
    """
    Returns the sum of two integers (a and b) plus 3.

    Parameters:
    a (int): The first integer.
    b (int): The second integer.

    Returns:
    int: The sum of a and b plus 3.
    """
    return a + b + 3

Obviously this is fraught with danger, and you should only run this against code that has already been committed to Git and hence can be easily recovered... but it's a really fun trick!
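As a rough sketch of how a --rexec style replacement can work (not Symbex's actual code), assuming Python 3.8+ for ast's end_lineno: locate the symbol with ast, pass its full source (including the def line, as in the demo above) through a transform, and splice the result back into the file's text. replace_symbol and shell_transform are hypothetical names; shell_transform mirrors the idea of piping the symbol through a shell command such as llm.

```python
import ast
import subprocess
from typing import Callable


def replace_symbol(source: str, name: str, transform: Callable[[str], str]) -> str:
    """Return source with the named function or class rewritten by transform."""
    tree = ast.parse(source)
    for node in ast.walk(tree):
        if (
            isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
            and node.name == name
        ):
            lines = source.split("\n")
            # Include decorators, which sit above the def/class line
            start = min([node.lineno] + [d.lineno for d in node.decorator_list]) - 1
            end = node.end_lineno  # 1-based and inclusive, so it works as a slice end
            original = "\n".join(lines[start:end])
            replacement = transform(original + "\n").rstrip("\n")
            return "\n".join(lines[:start] + [replacement] + lines[end:])
    raise ValueError(f"Symbol not found: {name}")


def shell_transform(command: str) -> Callable[[str], str]:
    """Build a transform that pipes text through a shell command and returns its stdout."""

    def run(text: str) -> str:
        result = subprocess.run(
            command, shell=True, input=text, capture_output=True, text=True, check=True
        )
        return result.stdout

    return run
```

Calling replace_symbol(source, "my_function", shell_transform("llm --system 'add type hints and a docstring'")) would approximate the demo, with the same caveat as above: only write the result back over files you can recover from Git.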

ttok --encode --decode

ttok is my CLI tool for counting tokens, as used by large language models such as GPT-4. ttok 0.2 adds a requested feature to help make tokens easier to understand, best illustrated by this demo:

ttok Hello world
# Outputs 2 - the number of tokens
ttok Hello world --encode
# Outputs 9906 1917 - the encoded tokens
ttok 9906 1917 --decode
# Outputs Hello world - decoding the tokens back again
ttok Hello world --encode --tokens
# Outputs [b'Hello', b' world']

Being able to easily see the encoded tokens including whitespace (the b' world' part) is particularly useful for understanding how the tokens all fit together.

I wrote more about GPT tokenization in understanding GPT tokenizers.
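To make the encode/decode round trip concrete without depending on a real tokenizer, here is a deliberately toy version: a greedy longest-match tokenizer over a two-entry vocabulary that borrows the two IDs from the demo above. It is not BPE and not how ttok works (ttok uses OpenAI's tiktoken library). TOY_VOCAB, the fallback ID scheme and the function names are all hypothetical.

```python
from typing import Dict, List

# Toy vocabulary: two IDs borrowed from the ttok demo; everything else falls back
TOY_VOCAB: Dict[str, int] = {"Hello": 9906, " world": 1917}
ID_TO_TEXT: Dict[int, str] = {token_id: text for text, token_id in TOY_VOCAB.items()}
FALLBACK_OFFSET = 100_000  # hypothetical: an unknown character becomes 100_000 + its code point


def encode(text: str) -> List[int]:
    """Greedy longest-match tokenization (a toy, not BPE)."""
    pieces = sorted(TOY_VOCAB, key=len, reverse=True)
    ids: List[int] = []
    i = 0
    while i < len(text):
        for piece in pieces:
            if text.startswith(piece, i):
                ids.append(TOY_VOCAB[piece])
                i += len(piece)
                break
        else:
            ids.append(FALLBACK_OFFSET + ord(text[i]))
            i += 1
    return ids


def token_text(token_id: int) -> str:
    """Map one token ID back to its text."""
    if token_id in ID_TO_TEXT:
        return ID_TO_TEXT[token_id]
    return chr(token_id - FALLBACK_OFFSET)


def decode(ids: List[int]) -> str:
    """Like --decode: turn a list of token IDs back into text."""
    return "".join(token_text(t) for t in ids)


def token_bytes(ids: List[int]) -> List[bytes]:
    """Like --encode --tokens: show each token's bytes, leading whitespace included."""
    return [token_text(t).encode("utf-8") for t in ids]


print(encode("Hello world"))
print(token_bytes(encode("Hello world")))
```

The point the toy preserves is the one the real demo shows: the space belongs to the second token (" world"), which is why decoding a list of IDs reproduces the original text exactly.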

TIL this week

Using tree-sitter with Python - 2023-07-14

Auto-formatting YAML files with yamlfmt - 2023-07-13

Quickly testing code in a different Python version using pyenv - 2023-07-10

Using git-filter-repo to set commit dates to author dates - 2023-07-10

Using OpenAI functions and their Python library for data extraction - 2023-07-10

Python packages with pyproject.toml and nothing else - 2023-07-08

Syntax highlighted code examples in Datasette - 2023-07-02

Custom Jinja template tags with attributes - 2023-07-02

Local wildcard DNS on macOS with dnsmasq - 2023-06-30

A Discord bot to expand issue links to a private GitHub repository - 2023-06-30

Bulk editing status in GitHub Projects - 2023-06-29

CLI tools hidden in the Python standard library - 2023-06-29

Releases this week

symbex 1.1 - 2023-07-16
Find the Python code for specified symbols

llm-mpt30b 0.1 - 2023-07-12
LLM plugin adding support for the MPT-30B language model

llm-markov 0.1 - 2023-07-12
Plugin for LLM adding a Markov chain generating model

llm-gpt4all 0.1 - 2023-07-12
Plugin for LLM adding support for the GPT4All collection of models

llm-palm 0.1 - 2023-07-12
Plugin for LLM adding support for Google's PaLM 2 model

llm 0.5 - 2023-07-12
Access large language models from the command-line

ttok 0.2 - 2023-07-10
Count and truncate text based on tokens

strip-tags 0.5.1 - 2023-07-09
CLI tool for stripping tags from HTML

pocket-to-sqlite 0.2.3 - 2023-07-09
Create a SQLite database containing data from your Pocket account

datasette-render-markdown 2.2 - 2023-07-02
Datasette plugin for rendering Markdown

asgi-proxy-lib 0.1a0 - 2023-07-01
An ASGI function for proxying to a backend over HTTP

datasette-upload-csvs 0.8.3 - 2023-06-28
Datasette plugin for uploading CSV files and converting them to database tables
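The llm-markov release adds a Markov chain generating model. As a rough, self-contained illustration of what that kind of model does, and not the plugin's actual code, here is a word-level chain: it records which words follow each word in some training text, then generates by repeatedly picking a random successor. build_chain and generate are hypothetical names; passing a seeded random.Random keeps runs repeatable.

```python
import random
from collections import defaultdict
from typing import Dict, List, Optional


def build_chain(text: str) -> Dict[str, List[str]]:
    """Map each word to the words that follow it (duplicates kept, so common successors are likelier)."""
    words = text.split()
    chain: Dict[str, List[str]] = defaultdict(list)
    for current, following in zip(words, words[1:]):
        chain[current].append(following)
    return dict(chain)


def generate(
    chain: Dict[str, List[str]],
    start: str,
    length: int,
    rng: Optional[random.Random] = None,
) -> List[str]:
    """Walk the chain from start for up to length words."""
    rng = rng or random.Random()
    output = [start]
    while len(output) < length:
        successors = chain.get(output[-1])
        if not successors:
            break  # dead end: this word never had a successor in the training text
        output.append(rng.choice(successors))
    return output


chain = build_chain("the pelican ate the fish and the pelican slept")
print(" ".join(generate(chain, "the", 12, random.Random(42))))
```

Every adjacent pair in the output also appeared in the training text, which is why Markov chains produce locally plausible but globally aimless text: a handy zero-dependency model for exercising a plugin system.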

Link 2023-07-12 claude.ai: Anthropic's new Claude 2 model is available to use online, and it has a 100k token context window and the ability to upload files to it - I tried uploading a text file with 34,000 tokens in it (according to my ttok CLI tool, counting using the GPT-3.5 tokenizer) and it gave me a workable summary.

Link 2023-07-12 What AI can do with a toolbox... Getting started with Code Interpreter: Ethan Mollick has been doing some very creative explorations of ChatGPT Code Interpreter over the past few months, and has tied a lot of them together into this useful introductory tutorial.

TIL 2023-07-13 Auto-formatting YAML files with yamlfmt:

I decided to see if there was an equivalent of Black or Prettier for YAML files. I found yamlfmt from Google. …

Quote 2023-07-13

Not every conversation I had at Anthropic revolved around existential risk. But dread was a dominant theme. At times, I felt like a food writer who was assigned to cover a trendy new restaurant, only to discover that the kitchen staff wanted to talk about nothing but food poisoning.

TIL 2023-07-14 Using tree-sitter with Python:

tree-sitter is a "parser generator tool and an incremental parsing library". It has a very good reputation these days. …

Quote 2023-07-16

Increasingly powerful AI systems are being released at an increasingly rapid pace. [...] And yet not a single AI lab seems to have provided any user documentation. Instead, the only user guides out there appear to be Twitter influencer threads. Documentation-by-rumor is a weird choice for organizations claiming to be concerned about proper use of their technologies, but here we are.